Divide and Conquer for TRACTABLE Computations

September 11, 2009

Nanotechnology holds a wealth of potential for major advances in a broad range of energy applications, but developing nanosystems is quite challenging because simulations necessary to understand structures at the nanoscale require tremendous amounts of computational resources. A new algorithm promises to unleash the power of nanotechnology by enabling electronic structure calculations with hundreds of thousands of atoms.

Nanostructures—tiny materials 100,000 times finer than a human hair—may hold the key to energy independence. A fundamental understanding of nanostructure behaviors and properties could provide solutions for curbing our dependence on petroleum, coal, and other fossil fuels by providing new and more efficient technologies for generating, storing, and transmitting electricity. For example, nanostructure systems are cheaper to produce than the crystal thin films used in current solar cell designs for harvesting solar energy, and offer the same material purity. Nanocatalysts may be the key to more cost-efficient hydrogen fuel cells. Lighting, batteries, and electrical grids may all be revolutionized by nanotechnology.

Because nanomaterials are so tiny and have such a large surface-to-volume ratio, they behave very differently from bulk forms of the same materials, and so numerical simulations are often necessary to understand their behavior. Scientists who create these numerical simulations are interested mainly in the location and energy level of electrons in the system—information that determines a material’s properties. But unlike bulk systems, nanostructures cannot be represented by just a few atoms. They are coordinated systems, and any attempt to understand the materials’ properties must simulate each system as a whole.

A reliable method based on density functional theory (DFT) allows physicists to simulate the electronic properties of materials. But DFT calculations are time-consuming, and any system with more than 1,000 atoms quickly overwhelms computing resources, because the computational cost of the conventional DFT method scales as the third power of the size of the system. Thus, when the size of a nanostructure increases 10 times, computing power must increase 1,000 times. The nanosystems of interest to energy researchers often contain tens of thousands of atoms. So one of the keys to unleashing the power of nanotechnology is to find a way of retaining DFT’s accuracy while performing calculations with tens of thousands of atoms.

With petascale computers now providing more than 100,000 processor cores, the simulation of complete nanosystems should be possible if we can find a way to make the computational cost scale linearly to the size of nanostructure systems. Researchers at Lawrence Berkeley National Laboratory (LBNL) have demonstrated a way to accomplish this using a divide-and-conquer algorithm. These researchers include members of the Scalable Methods for Electronic Excitation and Optical Responses of Nanostructures project (jointly sponsored by the DOE Office of Basic Energy Sciences and Office of Advanced Scientific Computing Research) and the SciDAC Performance Engineering Research Institute (PERI). In November 2008, this research was honored with the Association for Computing Machinery (ACM) Gordon Bell Prize for Algorithm Innovation (sidebar “LS3DF Wins Gordon Bell Prize for Algorithm Innovation”).

Figure 1.Developers of LS3DF and winners of the ACM Gordon Bell Prize, left to right: Zhengji Zhao, Lin-Wang Wang, Erich Strohmaier, Juan Meza, David Bailey, and Hongzhang Shan. Not shown: Byounghak Lee.

The Opportunity and Challenge of Petascale and Exascale

Today’s petascale computers, and the exascale computers already on the drawing boards, tend to be heterogeneous, with stacks, nodes, and cores (processors) at three different levels. These hardware developments pose daunting challenges for software developers. How to effectively use hundreds of thousands to millions of processors is not a trivial task. Old programs which perform well on thousands of processors could fail on millions of processors. More fundamentally, with the increasing number of processors, the best simulation methods might change as well. Here, “simulation methods” refers not just to different algorithms used to calculate the same mathematical formula; rather it refers to different mathematical formulas and approximations to the physical world. Thus, the computation can change at a fundamental level.

With a large number of processors, in general, the divide-and-conquer class of algorithms should be a good computational strategy. The divide-and-conquer approach is conceptually simple (although not always easy to implement): just cut the system into many small pieces, solve the pieces separately, and then put them together to get the result of the original system. This strategy takes advantage of massive parallelization and avoids heavy communication between far away processors. It is also well suited to multiscale simulation, where microscopic details are simulated locally by local processors, only to be integrated at a higher level with the results from other processors. The divide-and-conquer scheme also provides a natural mapping between the physical object to be simulated and the computer processors in a communication network.

The challenge of the divide-and-conquer method is how to patch the divided parts together seamlessly to get the results of the original system without introducing any spurious artifacts resulting from the computational method itself. Here we will illustrate one such scheme that patches up the divided pieces and cancels out the artificial boundary effects. This method enables linear scaling of electronic structure calculations, yielding essentially the same result as the traditional DFT method, but potentially running thousands of times faster. It also allows the use of petascale computers with high efficiency, with no indication of any slowdown as parallelization increases.

Nanoscience Simulations

Nanosystems are interesting due to their peculiar position at the junction between bulk and molecular systems. In some aspects, a nanosystem, such as a nanocrystal (a small piece of crystal structure), is like its bulk counterpart, with similar internal atomic arrangements, mechanical, and even electrical properties. On the other hand, some of its other features resemble that of a molecule. For example, both a nanosystem and a molecule have discrete rather than continuous energy levels. The large surface-to-volume ratio of a nanostructure makes it useful for many potential applications, especially for catalysis. The small size of the system also makes it possible for defects to migrate to the surface. As a result, at the interior, some of the cheaply synthesized nanocrystals can be among the purest materials in the world. The small size of a nanostructure also confines where the electrons can move, and this has a profound influence on its electrical conductivity. This feature can be exploited to design new electronic devices.

Another phenomenon unique to nanostructures is the so-called quantum confinement effect. As we know, the optical and electronic properties of a material are determined by its electron energy levels. It turns out that one can tune the energy levels of a nanostructure by simply changing its size. As a result, we can change the color of a quantum dot (a spherical nanocrystal) from red to blue by reducing its size. This change of energy level happens because the size of the quantum dot is comparable with the quantum mechanical wavelength of the electron.

To understand all the above behaviors of nanosystems, numerical simulation is essential. For an infinitely periodic bulk crystal, there are analytical tools like the Bloch wave function band structure theory and Boltzmann equation to help us understand many of its properties. For a small molecule, there could be energy level models, coupling and repulsions between them to help us understand what is going on when the geometry of the molecule changes. Unfortunately, for nanosystems, many of these analytical tools are no longer applicable. This makes the numerical simulation even more important.

Ironically, those tiny nanosystems are often too large for atomistic simulations. Molecules often contain only tens of atoms, thus the calculation is easy. For infinite bulk crystals, due to their periodicity, one can calculate just one unit cell which also contains only a few atoms, and so it is not difficult. But a nanosystem often contains tens of thousands of atoms, and there is no obvious way to reduce them to a smaller unit for calculation. For example, a 3.9 nm diameter cadmium selenide quantum dot contains about 1,000 atoms, while an 8.5 nm dot contains 10,000 atoms. In experiments, a size of 8–20 nm is the norm. This poses a daunting challenge for numerical simulation, even with modern supercomputers.

One reason for this challenge is the unfavorable scaling of the current algorithm used in the simulation. The computational cost of the conventional algorithm scales as the third power of the number of the atoms. Thus, it will be 1,000 times more expensive to calculate an 8.5 nm size quantum dot than a 3.9 nm size quantum dot. This is almost like a brick wall which stops the advance of the amenable size of the system. Currently, with thousands of computer processors, we can calculate a quantum dot at about 4 nm. But this is often not the experimental quantum dot size, hence direct comparison with experiment might not be possible.

There are other more approximate methods for nanostructure calculations. One such method is called effective mass theory (or k.p theory, with its name derived from its formula). This theory ignores the atomic feature of the electron, paying attention only to its overall behavior described by an envelope function. This theory was developed in the 1960s, in the heyday of bulk semiconductor research. It has been used successfully to study problems like impurity binding and exciton energy in bulk semiconductors. Despite its tremendous success in nanostructure calculations, there are fundamental drawbacks in the effective mass method. First, the predicted energy levels and their quantum confinement effects can be off by a factor of two. Thus, it cannot provide accurate quantitative prediction. Second, more seriously, by smoothing out the atomic features of the electron wave functions, it cannot be used to study important atomic-scale effects in nanostructures, such as the surface states, impurities, surface atomic reconstruction, and symmetries based on atomic arrangements. Nanostructures are useful partly because they couple the bulk-like properties with these atomic-scale features.

Another widely used method for nanostructure calculation is called the tight-binding model. In contrast with the effective mass model, the tight-binding model keeps the atomic feature of the electron wave functions, but perhaps focuses too much on it. It represents each atom with its position and a few basis functions. Due to its severe approximation for the electron wave functions, its reliability can be problematic. Besides, in the empirical tight-binding model, there is no charge self-consistency, which could be important in many applications.

Overall, it is clear that accurate ab initio self-consistent quantum mechanical calculations for nanosystems with tens of thousands of atoms will be extremely useful. Here by ab initio, we mean the calculation is carried out without experimental fitting parameters, except the knowledge of the geometry of the nanosystem to be studied and the type of elements in the system.

Why are Electronic Structure Calculations Difficult?

Why are quantum mechanical calculations so difficult? To explain this, we need to go back to the origin of quantum mechanics. In quantum mechanics, the movements of the electrons are described by a many-body wave function: Ψ(r1,r2,…,rN). The equation (Schrödinger’s equation) which governs the change of this wave function is quite simple, and can be written down in a single line. Schrödinger’s equation has been known since the 1930s, when quantum mechanics was invented. This prompted Paul A. M. Dirac’s 1929 claim that the “laws necessary for mathematical treatment of … the whole of chemistry are thus completely known, and the difficulty is only that the exact application of these laws leads to equations much too complicated to be soluble.” Yes, indeed, “too complicated to be soluble;” here lies the whole challenge of computational chemistry and material science.

Unlike the classical Newtonian description of N particles, where the trajectory of one particle can be described as a function of time and described independently from other particles’ trajectories, in quantum mechanics the wave function of one particle is intertwined with the wave functions of other particles. As a result, we cannot describe the wave functions of the individual particles and put them together to get the wave function of the whole system. This inseparability is called the correlation effect. As a result, to solve the problem exactly, we need to solve the N-dimensional wave function Ψ(r1,r2,…,rN) head on; here N is the number of electrons (in counting the dimensions, we have counted each ri as one instead of three dimensions).

It is daunting just to describe this wave function numerically. Let us say the whole system resides inside a box which needs M spatial grid points to describe each coordinate, ri. Then in total, there will be MN grid points to describe the N-dimensional wave function Ψ. That means MN numerical coefficients. Since the spatial expanse of the system is also proportional to the number of atoms (hence the number of electrons, N) in the system, M is proportional to N. As a result, the number of coefficients should be proportional to NN. Such exponential behavior is something our current computers cannot handle. Perhaps proposed quantum computers can be used to solve such quantum mechanics problems directly in the future, but not current conventional computers, even at the exascale or beyond.

In order to make the problem tractable, what we need is to make the many-body wave functions separable, allowing the problem to scale algebraically, not exponentially. This can be done with various types of approximations. The simplest is the Hartree–Fock approximation, where the many-body wave function Ψ(r1,r2,…,rN) is represented as a product of N single particle wave functions ψ1(r1)Ψ2(r2)…ψN(rN) (plus some symmetry operations). Unfortunately, the Hartree–Fock method ignores the correlation effect, and often leads to large errors. There are many post-Hartree–Fock quantum chemistry methods, including MP2, the coupled cluster method, and the configuration interaction method. All these methods have high order scaling, from N4 to N7, and are thus only suitable for small molecule studies.

In computational material science, the breakthrough came with density functional theory (DFT). As in the Hartree–Fock method, in DFT, N single-particle wave functions {Ψi(ri)} are used to describe the system. Now, instead of ignoring the correlation energy, it (together with the exchange energy) is approximated by a functional of the total charge density. This enormously improves the accuracy of DFT and makes it the method of choice for many material science and chemical science simulations. Walter Kohn was awarded the Nobel Prize in 1998 for his contribution to the development of density functional theory.

Linear Scaling Electronic Structure Calculations

But even with DFT, the computation of nanosystems is hard. To describe the set of single-particle wave functions {Ψi(ri)}, i = 1,N, we need M × N ∝ N2 coefficients. This becomes the memory requirement. Furthermore, these N wave functions need to be orthogonal to each other. To enforce such an orthogonal relationship, one needs to carry out M × N2 ∝ N3 operations. This causes the computational cost to grow as the third power of the number of atoms in the system. This is, of course, much better than exponential scaling, but as discussed above, it is still not enough to calculate nanosystems with tens of thousands of atoms. To calculate such systems, a linear scaling method is necessary.

There have been many developments in linear scaling electronic structure calculation methods. There are three popular approaches. The first one is a localized orbital approach, where each wave function Ψi(ri) (orbital, not the eigen wave function here) is only described within a small fixed region in the real space, instead of the whole volume. There are many variations of this approach, and there are also some fundamental problems to be overcome. One of the most serious of these is the convergence problem in the iterative solution of the orbital Ψi(ri). Sometimes it suffers from an energy terrain with many local minima. Such local minima are a mathematical nightmare when we want to minimize the energy (in order to get the final converged results for both the energy and the wave functions). Although there are empirical ways to avoid the local minima in some cases, the root of the problem is not well understood, and it is still an unsolved problem. The second linear scaling method is the so-called truncated density matrix method. One can use the above wave functions to construct a density matrix:

ρ(r1, r2) = Σi=1,N ψi(r1)ψ (r2)

The density matrix contains the same information as the wave functions, so we can just work on the matrix to solve the quantum mechanical problem. In the truncated density matrix method, r2 inside r(r1,r2) is truncated within a small region close to r1. This reduces the number of coefficients needed to describe r(r1,r2), and the computational cost also scales linearly with the size of the system. Furthermore this approach does not suffer from the convergence problem that exists in the localized orbital method. The drawback here is that, in a real space grid description, for a given r1, the number of grid points for r2 inside the truncated region is, although fixed (not scaling with the size of the system), still large. There could be tens of thousands of grid points for r2. This will cause a big prefactor for the linear scaling method. Thus, it is usually impractical to use this scheme with a real space grid. Instead, the localized basis sets (like the atomic orbital basis set, and Gaussian basis set) are used in this approach. Since such basis sets are often used in quantum chemistry calculations, this method has become popular in the quantum chemistry community. But it does not have the benefit of a systematic improvement of the numerical basis set, as in the real space grid or plane wave basis methods.

The third linear scaling approach is the divide-and-conquer approach. As stated above, this approach is conceptually simple: just cut the system into many small pieces, solve the pieces separately, and then put them together to get the result of the original system. The beauty of this idea is not only that it makes the computational cost scale linearly (proportional to the number of the pieces, hence the size of the system), but it also makes it extremely easy for parallelization (each piece can be solved separately by different processors). The second point is not trivial, since parallelizations based on the localized orbital method and the truncated density matrix method are not so straightforward. Although there are parallel codes available for those methods, there is no code which can scale to thousands of processors.

But the devil is in the details. When a vase has been smashed into thousands of pieces, how to glue them back seamlessly into its original form is not an easy task. Likewise, reassembling a divided-and-conquered system without distorting the results is tricky. One idea is to use overlapping pieces, then only use the center part of each piece (since the boundary of the piece is obviously artificial and has nothing to do with the original system). More technically, a spatial partition function is used to extract the center part of each piece. Unfortunately, the use of such partition functions has caused some technical difficulties, including uncertainty in the kinetic energy expression and local charge conservation.

The Linear Scaling Three-Dimensional Fragment (LS3DF) Method

Recently, a new scheme has been proposed, where all the charge within an individual piece is used, but overlapping pieces with different sizes are calculated. By carefully adding and subtracting contributions from each piece, the artificial boundary effects can be cancelled out magically. The final result is so accurate, it is essentially the same as the direct O(N3) scaling DFT calculations. Yet, due to its linear scaling, the new method could be one thousand times faster than the original method. Furthermore, it can be efficiently run on hundreds of thousands of processors, without any indication of slowing down in efficient parallelization. This method is called the linear scaling three-dimensional fragment (LS3DF) method (sidebar “LS3DF Code Development” p26). It is the first linear scaling electronic structure calculation method which can be run on so many processors. Due to its novel algorithm, parallelization efficiency, and overall speed, it was awarded the 2008 Gordon Bell Prize for Algorithm Innovation (sidebar “LS3DF Wins Gordon Bell Prize for Algorithm Innovation” p23). Using this code, it is now possible to study a system with tens of thousands of atoms within one or two hours.

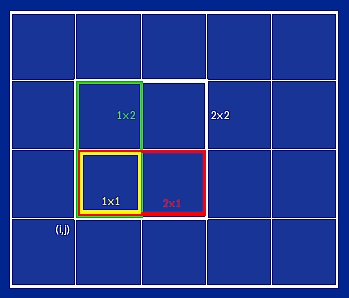

Figure 4. A two-dimensional schematic illustrating how LS3DF divides the space into grids and fragments of different sizes.

The division of space (hence the atoms in it) of the LS3DF method is shown in figure 4. For simplicity, we have used a two-dimensional system (instead of three dimensions) to illustrate the point, but the concept is the same. If the whole system is placed inside a box, one can first divide the box by an m1 × m2 two-dimensional grid. Now, at each grid point (i,j), one can define four fragments (pieces) with all their lower-left corners located at (i,j). In terms of the grid unit, the sizes of these four fragments are: 2×2, 2×1, 1×2, and 1×1. Let us denote the charge density of these fragments as ρ2×2(i,j), ρ2×1(i,j), ρ1×2(i,j), ρ1×1(i,j). If all these fragment charges have been calculated (such as by different computer processors), then the total charge of the system can be added up as: ρtot = Σi,j [ρ2×2(i,j)– ρ2×1(i,j) – ρ1×2(i,j) + ρ1×1(i,j)]. One can convince oneself by counting different fragments that all the edges and corners of the fragments in the summation will be cancelled out from each other. One simple bit of algebra can make this clear. In terms of area, at each(i,j) grid point, we have 2×2 – 2×1 – 1×2 + 1 = 1; that is exactly the area needed to fill in the whole space. On the other hand, in terms of edges (fragment boundary lines), we have 8 – 6 – 6 + 4 = 0, and in terms of corners, we have 4 – 4 – 4 + 4 = 0. Thus, they all cancel out. This scheme also works for three-dimensional systems, where at each grid point(i,j,k) there are eight fragments with their sizes (and the +/- signs in the summation) as: 2×2×2(+), 2×2×1(-), 2×1×2(-), 1×2×2(-), 2×1×1(+), 1×2×1(+), 1×1×2(+), and 1×1×1(-) respectively.

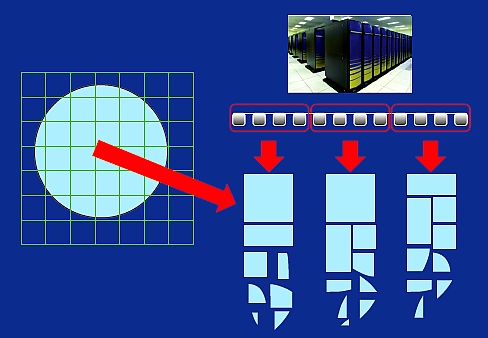

Note that only the fragment charge densities are patched together, not the fragment wave functions. The global charge is needed to solve the Poisson equation of the whole system for the long-range Coulomb interactions. This global charge will also be used to calculate the exchange-correlation energy under the density functional formula (such as the local density approximation, LDA). On the other hand, the fragment wave functions {ψF,i} are only used to generate the fragment charge density and the fragment kinetic energy (which will also be summed up using the same formula with positive and negative signs for different sized fragments). Besides the kinetic energy, all the other energy terms in the LDA energy expression will be calculated by the total charge density ρtot(r). Since calculating {ψF,i} is the most time-consuming part, and the total computational cost is proportional to the number of fragments, this makes the method scale linearly with the size of the whole system. It also makes the whole calculation easily parallelizable. A scheme for parallelization is shown in figure 5. Typically the total number of processors is divided into many small groups; each group (with 32–128 processors) solves an assigned number of fragments, one after another.

Figure 5. A schematic of the LS3DF parallelization method and distribution of fragments among processor groups.

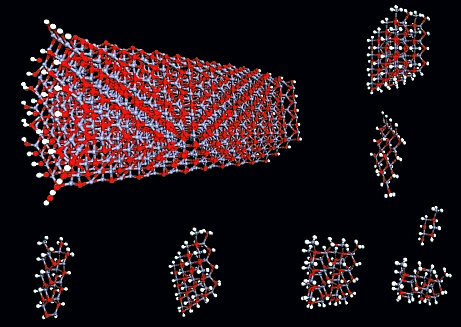

Figure 6. A ZnO quantum rod and some of its fragments. Red dots are oxygen atoms, blue dots are zinc, and white dots are hydrogen.

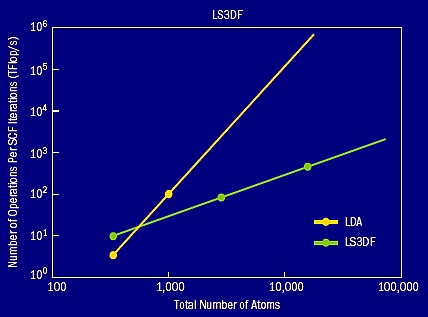

The accuracy of the LS3DF method depends on the size of the fragment: the larger the size, the better the accuracy when compared with the original direct DFT calculation. So, for a given accuracy, we need to use a fixed fragment size. A typical size for the smallest 1×1×1 fragment contains eight atoms, and the largest 2×2×2 fragment contains 64 atoms. In figure 6 (p29), an example zinc oxide (ZnO) quantum rod is shown together with some of its fragments. Using such fragments, the LS3DF total energy is only a few MeV per atom different from the direct whole system DFT calculations. This error is smaller than many other numerical errors in a typical DFT calculation, such as the plane wave cutoff error and the pseudopotential error. Indeed, for most practical purposes, the LS3DF method can be considered the same as the direct DFT method. However, in terms of its speed, due to the linear scaling of the LS3DF method, it can be thousands of times faster. Figure 7 shows the computational cost of the LS3DF method compared with the original LDA method. The crossover happens around 500 atoms. This crossover is similar to the crossover reported for other linear scaling methods like the localized orbital method. Here plane waves are used as the basis set to describe the fragment wave functions, and norm conserving pseudopotentials are used to describe the nuclei potentials. The plane wave method is the most commonly used method in material science simulation.

Figure 7. The computational costs of the LS3DF and direct LDA methods. The crossover happens around 500 atoms.

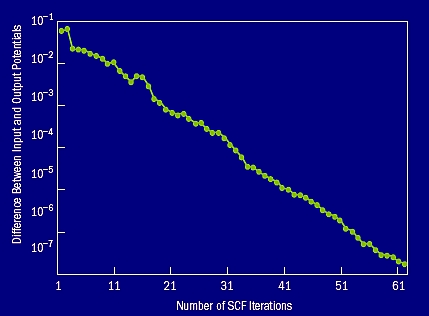

Figure 8. The self-consistent convergence |Vin–Vout| as a function of the number of iterations. This is for a 2,000 atom ZnTeO alloy system with 6% O.

Figure 8 shows the convergence of the LS3DF method as a function of the self-consistent iterations. In a variational formalism like the LS3DF, the total energy minimization is achieved by self-consistent iterations, where a total system input potential is used to generate an output potential through the LS3DF formalism. When the input and output potential become the same, we say that the solution is self-consistent. Figure 8 shows that in the LS3DF method, the difference between input and output potential drops exponentially as the iteration progresses. This is very good convergence behavior; it clearly does not suffer from the convergence problem seen in some other O(N) methods like the localized orbital method.

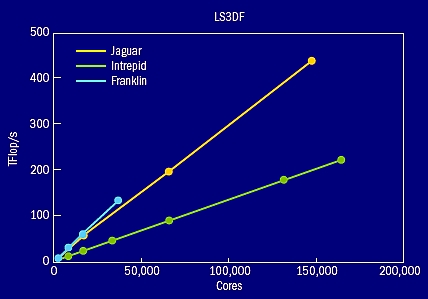

Figure 9. The parallelization scaling curves for LS3DF calculations on Jaguar, Intrepid, and Franklin computers. This is a weak scaling case, which means as the number of cores increases, the number of atoms in the solved system also increases. The biggest system contains 36,864 atoms. Good scaling curves are also obtained for strong scaling cases.

The biggest advantage of LS3DF is its parallelization (figure 9). The method scales all the way to 150,000 processors on one of the largest supercomputers in the world, the Jaguar Cray XT5 machine at the National Center for Computational Science (NCCS) at Oak Ridge National Laboratory. It reached a speed of 442 teraflop/s, which is 33% of the theoretical speed of the whole machine. Similar good performances (40% efficiency) were achieved on the 160,000-processor Intrepid IBM Blue Gene/P machine at the Argonne Leadership Computing Facility (ALCF) at Argonne National Laboratory and the 38,640-processor Franklin Cray XT4 machine at the National Energy Research Scientific Computing Center (NERSC) at LBNL. The code was initially developed and tested (sidebar “LS3DF Code Development” p26) on the NERSC machines (Cray XT4, IBM SP3, and IBM SP4). The relative ease of porting the LS3DF code to the different machines and reaching high efficiency without much tuning indicates the stability and robustness of the code and the formalism itself.

Applications of LS3DF

There are many material science and nanoscience problems involving thousands to tens of thousands of atoms that can be accurately simulated only by ab initio self-consistent methods. These include nanostructures such as quantum dots and wires, core/shell nanostructures, as well as more conventional systems such as semiconductor alloys. The LS3DF method covers a current gap in the DOE’s materials science code portfolio for such large-scale ab initio simulations. Two examples of LS3DF applications are described in sidebars: cadmium selenide (CdSe) quantum rods (sidebar “Dipole Moments in CdSe Quantum Rods” p27), and zinc tellurite oxide (ZnTeO) alloy (sidebar “ZnTeO Alloy for Solar Cell Applications” p24).

With the LS3DF code, a calculation like the one shown in figure 3 (p27) can be finished in one or two hours. This makes a huge difference in terms of actual research progress. Only when fast calculations like this become possible can scientists really concentrate on the physics of the problem. In most material science studies, tens or even hundreds of calculations need to be carried out, often by changing some aspects of the system depending on the results of previous calculations. This is a very interactive process between man and machine. Even with supercomputers, this aspect of the research has never changed. Supercomputers and algorithms like LS3DF just make the amenable systems larger or more complicated, but the tolerable turnaround time for the scientists to get the result has remained the same. In industry, people often talk about the tolerable time as a “coffee break.” In academia, the tolerable time ranges from a few hours to a few days.

Summary

Petascale computing might bring a paradigm change for the corresponding algorithms. The best algorithms for a given range of problems on a teraflop/s supercomputer might not be the best on a petaflop/s supercomputer. When the speed increases from teraflop/s to petaflop/s, the type of problems that scientists want to use the computer to solve might also change, along with the corresponding algorithms. This calls for more research in algorithm development along with hardware improvements.

For next-generation computers with possibly millions of processors, the divide-and-conquer class of algorithms looks like a good strategy. With the divide-and-conquer method, heavy communications are restricted to local processors, and so massive parallelization becomes possible. In addition, nature provides many applications that are compatible with divide-and-conquer methods, because most interactions and fundamental laws are local in nature.

The challenge for the divide-and-conquer method is how to combine the divided pieces together seamlessly to get the solution of the original problem. One such scheme is illustrated by the LS3DF method, in which the artificial boundaries between different fragments caused by the subdivision in the divide-and-conquer scheme are cancelled out. This method enables calculations to run thousands of times faster, but with results essentially the same as those produced by the original direct DFT method. We expect many more applications of the LS3DF method in nanoscience simulations.

Contributors

Lin-Wang Wang and John Hules (science writer and editor), LBNL

Further Reading

L. W. Wang et al. 2008. Linear-scaling 3D fragment method for large-scale electronic structure calculations. LBNL technical report LBNL-603E; Gordon Bell paper, Proceeding of SC08. http://repositories.cdlib.org/lbnl/LBNL-603E/

L.W. Wang, Z. Zhao, and J. Meza. 2008. A linear-scaling three-dimensional fragment method for large-scale electronic structure calculations. Phys. Rev. B 77: 165113.

Z. Zhao, J. Meza, and L.W. Wang. 2008. A divide-and- conquer linear-scaling three-dimensional fragment method for large-scale electronic structure calculations. J. Phys.: Condens. Matter 20: 294203.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.

Instagram

Instagram YouTube

YouTube