First Full-Scale Simulation of Cat-Size Cortex is a Gordon Bell Prize Winner

November 19, 2009

By Linda Vu

Contact: cscomms@lbl.gov

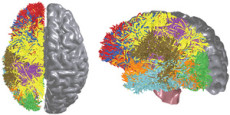

BlueMatter, a new algorithm created in collaboration with Stanford University, exploits the Blue Gene supercomputing architecture in order to noninvasively measure and map the connections between all cortical and sub-cortical locations within the human brain using magnetic resonance diffusion weighted imaging. Mapping the wiring diagram of the brain is crucial to untangling its vast communication network and understanding how it represents and processes information.

Portland, Oregon— A team of researchers from the IBM Almaden Research Center and the Lawrence Berkeley National Laboratory won the prestigious Gordon Bell Prize in the special category for their development of innovative techniques that produce new levels of performance on a real application. This year's prize winners were announced Thursday, Nov. 19, 2009 at the awards session of the SC09 conference in Portland. The ACM Gordon Bell Prize annually recognizes the best performance of scientific applications on supercomputers.

To test the hypotheses of brain structure, dynamics and function, the team built a cortical simulator called C2 that incorporates a number of innovations in computation, memory, and communication as well as sophisticated biological details from neurophysiology and neuroanatomy, and tested it on Lawrence Livermore National Laboratory's (LLNL) "Dawn" Blue Gene/P supercomputer with 147,456 CPUs and 144 terabytes of main memory.

The IBM scientists also worked closely with researchers from Stanford University to noninvasively measure and map the connections between all cortical and sub-cortical locations within the human brain using magnetic resonance diffusion weighted imaging. This information was used to develop an algorithm that exploits the Blue Gene supercomputing interconnection architecture. Mapping the wiring diagram of the brain is crucial to untangling its vast communication network and understanding how it represents and processes information.

The algorithm, when integrated with the cortical simulator C2, allows scientists to experiment with various mathematical hypotheses of brain function and structure, and of how structure affects function, as they work toward discovering the brain's core computational micro and macro circuits.

From left to right: Steven K. Esser, Horst D. Simon, Dharmendra S. Modha, Mateo Valero (Chair, Gordon Bell Prize Committee), Rajagopal Ananthanarayanan

"Ultimately we would like to see a computer that works as quickly and efficiently as the human brain. Although we still have a long way to go, this achievement indicates that we are making significant strides toward that goal," says Horst Simon of the Berkeley Lab who was a part of the collaboration. "For more than 20 years cognitive computing and supercomputing were operating in separate worlds. Our simulation brought the power of high performance computing to a whole new area of research."

With these innovations, the collaboration achieved the first near real-time cortical simulation of a brain containing 1 billion spiking neurons and 10 trillion individual learning synapses, which exceeds the scale of a cat cortex. The result marks significant progress toward creating a computer system that simulates and emulates the brain's abilities for sensation, perception, action, interaction and cognition, while rivaling the brain's low power and energy consumption and compact size. Building a cortical simulator the size of the human cortex that operates in real time will require more than an exaflop of computational power, which is 1,000 times the capability of the fastest supercomputers today.
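For a sense of the scale involved, the figures quoted in this article (1 billion neurons, 10 trillion synapses, 147,456 CPUs and 144 terabytes of memory) can be turned into rough per-neuron and per-CPU ratios. The sketch below is only back-of-the-envelope arithmetic on those published numbers, not a description of how C2 actually lays out its data. It assumes binary terabytes (2**40 bytes), which the article does not state, and the function name `scale_summary` is made up for illustration.

```python
# Figures quoted in the article.
NEURONS = 10**9        # 1 billion spiking neurons
SYNAPSES = 10**13      # 10 trillion learning synapses
CPUS = 147_456         # CPUs on the Dawn Blue Gene/P
MEMORY_TB = 144        # terabytes of main memory

# Assumption (not stated in the article): a terabyte is 2**40 bytes.
BYTES_PER_TB = 2**40


def scale_summary():
    """Return rough ratios implied by the article's headline numbers."""
    total_bytes = MEMORY_TB * BYTES_PER_TB
    return {
        "synapses_per_neuron": SYNAPSES // NEURONS,
        "neurons_per_cpu": NEURONS / CPUS,
        "synapses_per_cpu": SYNAPSES / CPUS,
        # Ceiling only: assumes every byte of memory holds synapse state.
        "max_bytes_per_synapse": total_bytes / SYNAPSES,
        "memory_per_cpu_gib": total_bytes / CPUS / 2**30,
    }


if __name__ == "__main__":
    for name, value in scale_summary().items():
        print(f"{name}: {value:,.1f}")
```

Under these assumptions each neuron averages 10,000 synapses, each CPU handles several thousand neurons, and the machine offers at most about 16 bytes of memory per synapse, which hints at why the article highlights memory innovations alongside computation and communication.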

These advancements will provide a unique workbench for exploring the computational dynamics of the brain, and stand to move the team closer to its goal of building a compact, low-power synaptronic chip using nanotechnology and advances in phase change memory and magnetic tunnel junctions. The team's work stands to break the mold of conventional von Neumann computing, in order to meet the system requirements of the instrumented and interconnected world of tomorrow.

As the amount of digital data that we create continues to grow massively and the world becomes more instrumented and interconnected, there is a need for new kinds of computing systems imbued with a new intelligence that can spot hard-to-find patterns in vastly varied kinds of data, both digital and sensory; analyze and integrate information in real time in a context-dependent way; and deal with the ambiguity found in complex, real-world environments.

Businesses will simultaneously need to monitor, prioritize, adapt and make rapid decisions based on ever-growing streams of critical data and information.

A cognitive computer could quickly and accurately put together the disparate pieces of this complex puzzle, while taking into account context and previous experience, to help business decision makers come to a logical response.

One essential element in the project's success was early access to the new Blue Gene/P supercomputer called "Dawn," which was being installed at LLNL in the spring of 2009. "We are extremely pleased to have been able to help support the ground-breaking C2 simulations performed by the IBM/Berkeley Lab team. Not only were these simulations run at unprecedented scale for cortical simulations, they also provided National Nuclear Security Agency with additional ways to shake out the Dawn system's hardware and software by the runs utilizing all of the nodes and all of the memory," said Michel McCoy, Advanced Simulation and Computing (ASC) Program Director and Deputy Associate Director for High Performance Computing at LLNL.

In addition to Simon, other authors on the paper include Rajagopal Ananthanarayanan, Steven K. Esser and Dharmendra S. Modha of the IBM Almaden Research Center. Read their paper, "The Cat is Out of the Bag: Cortical Simulations with 10^9 Neurons, 10^13 Synapses."

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
