It's Not Too Late

Simulations show that cuts in greenhouse gas emissions would save arctic ice, reduce sea level rise

October 27, 2009

Contact: Jon Bashor, jbashor@lbl.gov, 510-486-5849

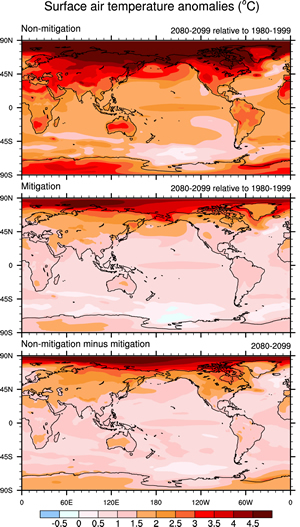

FIGURE 1. Computer simulations show how much average air temperatures at Earth’s surface could warm by 2080-2099 compared to 1980-1999 if (top) greenhouse gas emissions continue to climb at current rates, or if (middle) society cuts emissions by 70 percent. In the latter case, temperatures rise by less than 2°C (3.6°F) across nearly all of Earth’s populated areas (the bottom panel shows warming averted). However, unchecked emissions could lead to warming of 3°C (5.4°F) or more across parts of Europe, Asia, North America, and Australia. (Image: Geophysical Research Letters, adapted by LBNL.)

The threat of global warming can still be greatly diminished if nations cut emissions of heat-trapping greenhouse gases by 70 percent this century, according to a study led by scientists at the National Center for Atmospheric Research (NCAR). While global temperatures would rise, the most dangerous potential aspects of climate change, including massive losses of Arctic sea ice and permafrost and significant sea level rise, could be partially avoided.

"This research indicates that we can no longer avoid significant warming during this century," says NCAR scientist Warren Washington, the lead author. "But if the world were to implement this level of emission cuts, we could stabilize the threat of climate change and avoid an even greater catastrophe."

To simulate a century of climate conditions, the researchers used more than 2000 processors of Franklin, the National Energy Research Scientific Computing Center's (NERSC) Cray XT4 system, as well as computers at the Oak Ridge and Argonne Leadership Computing Facilities and at NCAR. Over the past two years, the NCAR team received a total allocation of 50 million processor hours on NERSC computers for a variety of climate studies.

Average global temperatures have warmed by close to 1 degree Celsius (almost 1.8 degrees Fahrenheit) since the pre-industrial era. Much of the warming is due to human-produced emissions of greenhouse gases, predominantly carbon dioxide. This heat-trapping gas has increased from a pre-industrial level of about 284 parts per million (ppm) in the atmosphere to more than 380 ppm today.

With research showing that additional warming of about 1 degree C (1.8 degrees F) may be the threshold for dangerous climate change, the European Union has called for dramatic cuts in emissions of carbon dioxide and other greenhouse gases. The U.S. Congress is also debating the issue.

To examine the impact of such cuts on the world's climate, Washington and his colleagues ran a series of global supercomputer studies with the NCAR-based Community Climate System Model (CCSM). They assumed that carbon dioxide levels could be held to 450 ppm at the end of this century. That figure comes from the U.S. Climate Change Science Program, which has cited 450 ppm as an attainable target if the world quickly adopts conservation practices and new green technologies to cut emissions dramatically. In contrast, carbon dioxide levels are now on track to reach about 750 ppm by 2100 if emissions go unchecked.

The team's results showed that if carbon dioxide were held to 450 ppm, global temperatures would increase by 0.6 degrees C (about 1 degree F) above current readings by the end of the century. In contrast, the study showed that temperatures would rise by almost four times that amount, 2.2 degrees C (4 degrees F) globally above current observations, if emissions were allowed to continue on their present course (Figure 1).
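As a quick check on these figures, the short Python sketch below converts the two warming values to Fahrenheit and compares them. It uses only the numbers quoted above; the function and variable names are illustrative and do not come from the study. Note that a temperature difference converts with the factor 9/5 alone, without the 32-degree offset used for absolute temperatures.

```python
def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature *difference* from Celsius to Fahrenheit degrees.

    Differences scale by 9/5 only; the +32 offset applies to absolute
    temperatures, not to changes in temperature.
    """
    return delta_c * 9.0 / 5.0


# Warming by 2100 above current readings, as quoted in the article.
mitigated_c = 0.6   # carbon dioxide held to 450 ppm
unchecked_c = 2.2   # emissions continue on their present course

mitigated_f = delta_c_to_f(mitigated_c)
unchecked_f = delta_c_to_f(unchecked_c)

# How many times larger the unchecked warming is, and how much is avoided.
ratio = unchecked_c / mitigated_c
avoided_c = unchecked_c - mitigated_c

print(f"Mitigated warming: {mitigated_c:.1f} C = {mitigated_f:.2f} F")
print(f"Unchecked warming: {unchecked_c:.1f} C = {unchecked_f:.2f} F")
print(f"Unchecked / mitigated: {ratio:.2f}")
print(f"Warming avoided: {avoided_c:.1f} C = {delta_c_to_f(avoided_c):.2f} F")
```

The conversions give 1.08 degrees F and 3.96 degrees F, matching the rounded values in the text, and the ratio of about 3.7 is what the article describes as almost four times. The difference between the two scenarios is 1.6 degrees C of global average warming avoided.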

Holding carbon dioxide levels to 450 ppm would have other impacts, according to the climate modeling study:

- Sea level rise due to thermal expansion as ocean waters warm would be 14 centimeters (about 5.5 inches) instead of 22 centimeters (8.7 inches). Significant additional sea level rise would be expected in either scenario from melting ice sheets and glaciers.

- Arctic ice in the summertime would shrink by about a quarter in volume and stabilize by 2100, as opposed to shrinking by at least three-quarters and continuing to melt. Some research has suggested the summertime ice will disappear altogether this century if emissions continue on their current trajectory.

- Arctic warming would be reduced by almost half, helping preserve fisheries and populations of sea birds and Arctic mammals in such regions as the northern Bering Sea.

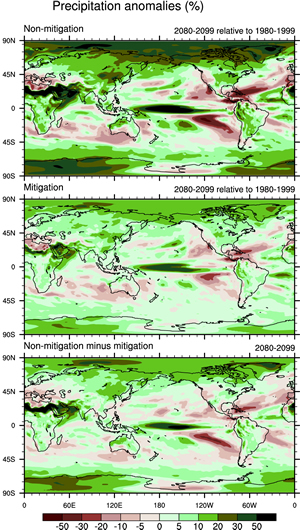

- Significant regional changes in precipitation, including decreased precipitation in the U.S. Southwest and an increase in the U.S. Northeast and Canada, would be cut in half if emissions were kept to 450 ppm (Figure 2).

- The climate system would stabilize by about 2100, instead of continuing to warm.

The research team used supercomputer simulations to compare a business-as-usual scenario to one with dramatic cuts in carbon dioxide emissions beginning in about a decade. The authors stressed that they were not studying how such cuts could be achieved nor advocating a particular policy.

"Our goal is to provide policymakers with appropriate research so they can make informed decisions," Washington says. "This study provides some hope that we can avoid the worst impacts of climate change—if society can cut emissions substantially over the next several decades and continue major cuts through the century."

Higher-resolution models

FIGURE 2. The non-mitigation case (top) shows increased precipitation in the northeast United States, Canada, and other regions, but a significant decrease in precipitation in the southwestern U.S. These changes are cut in half if greenhouse gas emissions are reduced by 70 percent (middle panel). The bottom panel shows the difference between the two scenarios, that is, the changes in precipitation that could be avoided by reducing emissions. (Image: Geophysical Research Letters, adapted by LBNL.)

CCSM is one of the world's most advanced coupled climate models for simulating the earth's climate system. Composed of four separate component models simultaneously simulating the earth's atmosphere, ocean, land surface, and sea ice, plus one central coupler component, CCSM allows researchers to conduct fundamental research into the earth's past, present, and future climate states.

CCSM version 3 was used to carry out the DOE/NSF climate change simulations for the Intergovernmental Panel on Climate Change Fourth Assessment Report (IPCC AR4), which was released in 2007. This breakthrough study presents a clear picture of a planet undergoing a rapid climate transition with significant societal and environmental impacts.

"The strength and clarity of the IPCC AR4 report can be attributed, in part, to DOE and NSF supercomputing centers making it possible to deploy climate models of unprecedented realism and detail," Washington says.

"Our next challenge is applying the emerging class of earth system models (CCSM4) that include detailed physical, chemical, and biological processes, interactions and feedbacks in the atmosphere, oceans, and surface, to carry out policy-relevant adaptation/mitigation scenarios. We will also use a higher resolution version of CCSM4 to perform decadal prediction experiments that can provide more regional information about climate change."

For long-term studies with a resolution of 2 degrees for the atmosphere and 1 degree for the ocean, CCSM currently runs on more than 2000 processors of Franklin, NERSC's Cray XT4 system. At that resolution, more than 20 years of climate conditions can be simulated in one day of computing. Recent scaling improvements in the atmosphere component of the code have resulted in a doubling of throughput, allowing more processors to be used for faster simulations.

Higher-resolution runs (such as those used for decadal prediction studies) with a resolution of 0.5 degree for atmosphere and land, and 1 degree for ocean and sea ice, will run on up to 6000 processors, simulating about 2 years per day of computing, but offering much more detailed information about the regional impacts of global climate change.
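To put the two configurations side by side, the rough Python sketch below estimates processor hours per simulated year from the figures quoted above. It rests on stated assumptions: the article says "more than 2000" processors and "more than 20 years" per day for the standard run, and "up to 6000" processors and "about 2 years" per day for the higher-resolution run, so the round numbers used here are approximations, and a day of computing is taken to mean 24 wall-clock hours. The function name and constants are illustrative only.

```python
HOURS_PER_DAY = 24  # assumption: "one day of computing" is 24 wall-clock hours


def processor_hours_per_sim_year(processors: int, sim_years_per_day: float) -> float:
    """Processor hours consumed for each simulated year of climate."""
    return processors * HOURS_PER_DAY / sim_years_per_day


# Round figures taken from the article; both are approximate.
standard = processor_hours_per_sim_year(processors=2000, sim_years_per_day=20)
high_res = processor_hours_per_sim_year(processors=6000, sim_years_per_day=2)

# Relative cost of the higher-resolution configuration per simulated year.
cost_ratio = high_res / standard

# Cost of simulating a full century at each resolution.
century_standard = standard * 100
century_high_res = high_res * 100

print(f"Standard resolution: {standard:,.0f} processor hours per simulated year")
print(f"Higher resolution:   {high_res:,.0f} processor hours per simulated year")
print(f"Cost ratio:          {cost_ratio:.0f}x")
print(f"Century, standard:   {century_standard:,.0f} processor hours")
print(f"Century, high-res:   {century_high_res:,.0f} processor hours")
```

Under these assumptions the standard configuration costs about 2,400 processor hours per simulated year and the higher-resolution one about 72,000, a factor of roughly 30. A single simulated century would then take on the order of 240,000 processor hours at standard resolution and about 7.2 million at the higher resolution, which gives a sense of scale for the 50 million processor hours the NCAR team was allocated over two years.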

Future work at NERSC by Washington's research group will include further climate change detection/attribution studies for the IPCC AR5 report. A series of simulations will be made to link the observed climate data to specific "forcings," or factors that influence climate. Ten different forcing combinations will be investigated and compared with observed data for the years 1850–2005. The forcing combinations are:

- Natural forcings only

- Anthropogenic forcings only

- Solar forcing only

- Volcanic forcing only

- Greenhouse gas forcing only

- Ozone forcing only

- Dust forcing only

- Aerosol direct forcing only

- Aerosol indirect forcing only

- Land-cover changes only.

Other research topics for the coming year will include the impact of the 11-year solar cycle, the impact of collapse of the meridional overturning circulation, climate feedbacks due to permafrost-thaw related methane emissions, and decadal predictability experiments.

This article was written by David Hosansky and Rachael Drummond, UCAR, and John Hules, Berkeley Lab.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
