NERSC Contributes to EMGeo Mapping Software for Finding Hidden Oil and Gas Reserves

September 30, 2009

Contact: Linda Vu, lvu@lbl.gov, 510-495-2402

As the world's demand for energy increases, billions of dollars are dedicated each year to the search for deep-water hydrocarbon reservoirs. Although seismic imaging methods have long been used to collect valuable information on geological structures bearing hydrocarbon deposits, they have not proven effective in discriminating between different types of reservoir fluids, such as brines, oil, and gas. Because of this inability to discriminate, drilling dry holes results in huge financial losses over time, up to 100 million dollars for each unsuccessful well. Meanwhile, significant hydrocarbon reservoirs remain undiscovered.

This limitation has led to the development of new geophysical technologies, specifically the use of low-frequency electromagnetic energy to complement seismic methods. In contrast to seismic data, electromagnetic measurements have been shown to be highly sensitive to changes in fluid type and to the location of hydrocarbons down to depths of four kilometers below the ocean floor. However, successfully extracting and processing the information in electromagnetic data has until now proved to be a formidable problem.

Now, new interpretive software called EMGeo (ElectroMagnetic Geological Mapper) leverages the unique capabilities of massively parallel computers to create 3D electrical conductivity maps of deep-water hydrocarbon reservoirs and their surrounding geology in unprecedented detail. Using sophisticated parallelization schemes, the software can scale beyond tens of thousands of processor cores to handle exceptionally large datasets.

Developed by Gregory Newman and Michael Commer of the Lawrence Berkeley National Laboratory's (Berkeley Lab) Earth Sciences Division, the technology was extensively tested and developed on some of the world's most powerful supercomputers, including the National Energy Research Scientific Computing (NERSC) Center's Franklin system. EMGeo was recently honored with a 2009 R&D 100 Award.

"This software exploits 21st century massively parallel computing resources to show investigators where hydrocarbon deposits reside, which can be covered by ocean over a mile deep and several more miles of rock below the ocean floor. This technology has led to new detection abilities, new efficiencies and new savings," says Newman.

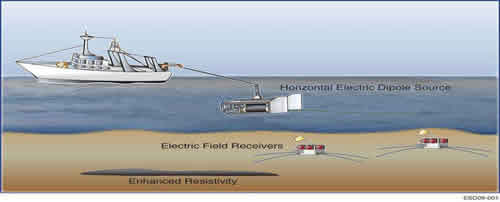

This picture illustrates how offshore reservoirs are detected by the CSEM method.

A New Map in 3D

Because offshore hydrocarbon reservoirs reside in highly complex geological environments beneath the ocean, it is extremely difficult to locate them without creating 3D computer models of the reservoirs and surrounding geology.

Fluids like oil and gas are typically characterized by a lower electrical conductivity than brines and water. Experts use this contrast to their advantage, applying controlled source electromagnetic (CSEM) methods to differentiate between types of offshore reservoir fluids. In this method, a deep-towed transmitting antenna generates low-frequency electromagnetic fields, which are measured by sensors placed on the ocean floor. Both antenna tow lines and sensor arrays can cover areas of up to thousands of square kilometers.

In addition to artificially created CSEM signals, the sensors also record magnetotelluric (MT) signals. MT fields are natural electromagnetic fields that arise when solar winds interact with the earth's magnetosphere, thus providing extra information about the subsurface. Instead of analyzing these different electromagnetic data types separately, which is still common today, EMGeo can produce electrical conductivity maps from combined data sets, a technique also referred to as joint imaging.

"The maps become less ambiguous as you incorporate more datasets with complementing information," says Commer.
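Commer's point about ambiguity can be illustrated with a deliberately tiny sketch. Everything in it is an assumption made for illustration: the two "forward" functions are toy linear stand-ins rather than real electromagnetic modeling, and the names `forward_a`, `forward_b`, and `joint_misfit` are hypothetical and not taken from EMGeo. The sketch shows only that a model which fits one data set perfectly can still be rejected once a second, complementary data set is added to the misfit.

```python
def misfit(predicted, observed, sigma):
    """Sum of squared residuals, each scaled by the data uncertainty sigma."""
    return sum(((p - o) / sigma) ** 2 for p, o in zip(predicted, observed))


def forward_a(model):
    """Toy stand-in for one data type: sensitive only to the sum of parameters."""
    return [model[0] + model[1]]


def forward_b(model):
    """Toy stand-in for a second data type: sensitive only to the difference."""
    return [model[0] - model[1]]


def joint_misfit(model, obs_a, obs_b, sigma_a=1.0, sigma_b=1.0):
    """Combined misfit over both data sets (the 'joint imaging' idea)."""
    return (misfit(forward_a(model), obs_a, sigma_a)
            + misfit(forward_b(model), obs_b, sigma_b))


# Synthetic observations generated from a known two-parameter model.
true_model = [3.0, 1.0]
obs_a = forward_a(true_model)
obs_b = forward_b(true_model)

# A different model that reproduces data set A exactly.
wrong_model = [2.0, 2.0]

misfit_a_only = misfit(forward_a(wrong_model), obs_a, 1.0)
misfit_joint = joint_misfit(wrong_model, obs_a, obs_b)
```

Here `misfit_a_only` is zero, so data set A alone cannot tell the two models apart, while `misfit_joint` is nonzero for the wrong model and zero for the true one.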

The problem is that multi-component data volumes also require huge computational resources, and mapping subtle reservoirs within a complex offshore geology typically requires multiple 3D imaging experiments.

According to Commer, EMGeo has the unique capability of distributing the computational load over groups of parallel processors in a hierarchical way. Both the computational model domain and the data volumes are distributed over multiple processor groups in a way that enables the imaging problem to be solved on arbitrarily large parallel computer systems. He notes that the parallel code architecture as well as efficient modeling grid design strategies allow investigators to explore a wide range of geologies, from marine to borehole environments. This framework could also potentially be very useful for environmental restoration as well as the search for alternative energies.
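The two-level distribution described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not EMGeo's actual scheme: it assumes processes are split into equal-sized groups, that each group handles a subset of the data (here, transmitter sources dealt out round-robin), and that within a group the model domain is cut into a regular grid of blocks. The names `Assignment`, `assign_ranks`, and `split_sources` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assignment:
    rank: int        # global process rank
    group: int       # data group this process belongs to
    local_rank: int  # rank within the group
    block: tuple     # (ix, iy, iz) index of the model subdomain it owns


def assign_ranks(n_ranks, n_groups, grid):
    """Map each global rank to a data group and a model subdomain block.

    grid = (nx, ny, nz) is the number of subdomain blocks per axis, so each
    group needs exactly nx * ny * nz processes.
    """
    nx, ny, nz = grid
    per_group = nx * ny * nz
    if n_groups * per_group != n_ranks:
        raise ValueError("n_ranks must equal n_groups * nx * ny * nz")
    assignments = []
    for rank in range(n_ranks):
        group, local = divmod(rank, per_group)
        ix, rest = divmod(local, ny * nz)
        iy, iz = divmod(rest, nz)
        assignments.append(Assignment(rank, group, local, (ix, iy, iz)))
    return assignments


def split_sources(n_sources, n_groups):
    """Deal source indices out to groups round-robin (first level of the hierarchy)."""
    return [list(range(g, n_sources, n_groups)) for g in range(n_groups)]


# Example: 16 processes, 2 data groups, each group splitting the model 2 x 2 x 2.
layout = assign_ranks(16, 2, (2, 2, 2))
sources = split_sources(5, 2)
```

In this example, rank 9 lands in group 1 as local rank 1 and owns block (0, 0, 1), while the five sources are split as [0, 2, 4] and [1, 3]. Adding more groups lets more data be processed at once, and a finer block grid lets a larger model fit in memory, which is the sense in which such a layout can grow with the machine.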

Developing the Code

Mahogany prospect in the Gulf of Mexico in horizontal xz slices. Original model (top left), CSEM data (top right), MT data (bottom left) and joint data showing depths from 0 to 6 km (bottom right). This research was done on NERSC's Cray XT4 system, named Franklin.

In an imaging experiment carried out on the Blue Gene/L supercomputer at the IBM T. J. Watson Research Center, EMGeo scaled up to 32,768 processors. The image obtained from a large CSEM data set covered an area of 1600 square kilometers and provided insights into the complex geology of Brazil's Campos Basin, a petroleum rich area located offshore of Brazil.

"EMGeo has been designed to scale well beyond these numbers, to hundreds of thousands of processors; however, its scaling power has yet to be fully demonstrated because its processing power exceeded the resources of the largest massively parallel computer systems now in existence," says Newman.

Since the IBM runs, Newman and Commer have also continuously used 8,000 cores on NERSC's Franklin system to experiment with further innovations in EMGeo. They were specifically interested in exploring how the software handles multiple types of datasets.

"The throughputs that we got in our Franklin runs were phenomenal. We were able to advance our codes much faster because we were getting results out of the machine so quickly," says Newman.

He adds that access to massively parallel machines like Franklin has been crucial to the development of this EMGeo software, and using the next generation of DOE supercomputers will be even more vital as the development of the software's next generation addresses a wider class of problems in the scientific community.

Commer and Newman will formally accept their R&D 100 award at a ceremony in Orlando, Florida this November.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
