News

Machine Learning Opens New Doors in Traumatic Brain Injury Research

In a paper published in Nature Scientific Reports, researchers from Berkeley Lab, UCSF, the Medical College of Wisconsin, and UC Berkeley – in conjunction with the TRACK-TBI collaboration – describe how an innovative machine learning approach can enhance the prognosis and understanding of traumatic brain injury and other complex medical conditions.

Read More »

In a Warming World, Climate Scientists Consider Category 6 Hurricanes

As increasing ocean temperatures contribute to more intense and destructive hurricanes, climate scientists wonder whether the open-ended Category 5 on the Saffir-Simpson Hurricane Wind Scale is sufficient to communicate the risk of hurricane damage in a warming climate. Read More »

50 Years of NERSC Firsts

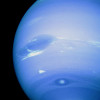

Perlmutter Provides Peek into Interior of Ice Giant Planets

Researchers at UC Berkeley used the Perlmutter supercomputer at NERSC to make progress toward a better understanding of chemistry inside ice giant planets, a step forward for planetary science that may also have applications on Earth. Read More »

New Tomographic Reconstruction Algorithm Developed at Berkeley Lab Sets World Record

A team of researchers from Berkeley Lab’s CAMERA developed a new tomographic reconstruction algorithm, TomoCAM, that leverages advanced mathematical techniques and GPU-based computing. Their method set a new world record by surpassing the speed of existing state-of-the-art iterative tomographic reconstruction algorithms. Read More »

NERSC Celebrates 50 Years in 2024

In 2024, NERSC will celebrate the latest of many milestones: 50 years of service to the global science community. Read More »

Berkeley Lab Researchers Publish Pioneering Book on Autonomous Experimentation

Just as auto-complete was revolutionary for text composition, autonomous experimentation will change how experiments are performed. Berkeley Lab’s Marcus Noack and Daniela Ushizima published the first-ever book dedicated to this topic. Read More »

NERSC 50th Anniversary Among CSA Highlights at SC23

NERSC director Sudip Dosanjh kicked off NERSC’s 50th anniversary year in front of a crowd of NERSC users, staff, and fans in the DOE booth at the SC23 conference November 12–17 – just one highlight among many for the CS Area throughout the week. Read More »

CSA Staff Bring Diverse Research Efforts to 2023 AGU Fall Meeting

When approximately 25,000 attendees convene in San Francisco for the American Geophysical Union’s 2023 Fall Meeting, a number of Berkeley Lab Computing Sciences Area staff will be among them, giving invited talks, presenting papers, and showing posters. The meeting will be held December 11–15 at Moscone Center and online. Read More »

AMCR’s Wehner Explores Impact of ‘Extreme Event Attribution’ on Climate Science Research

Michael Wehner, a senior scientist in the AMCR Division, has spent the last two decades talking to colleagues, policy makers, and the general public about the realities of climate change. But lately, the conversation itself has been changing. Read More »
