Berkeley Lab Scientists Get Time on Nation's Fastest Computer

To Advance Research in Cleaner, Renewable Energy Technologies

November 30, 2010

by Jon Bashor

BERKELEY, Calif.—Scientists at the Department of Energy's (DOE) Lawrence Berkeley National Laboratory (Berkeley Lab) have been awarded massive allocations on the nation's most powerful supercomputer to advance innovative research in improving the combustion of hydrogen fuels and increasing the efficiency of nanoscale solar cells. The awards were announced today (Tuesday, Nov. 30) by Energy Secretary Steven Chu as part of DOE’s Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program.

The INCITE program selected 57 research projects that will use supercomputers at Argonne and Oak Ridge national laboratories to create detailed scientific simulations and perform virtual experiments that in most cases would be impossible or impractical in the natural world. The program allocated 1.7 billion processor-hours to the selected projects. Time on a supercomputer is allocated in processor-hours: one processor-hour is one processor core running for one hour. Running a 10-million-hour project on a laptop computer with a quad-core processor would take more than 285 years.
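The laptop comparison above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function name is hypothetical, and it assumes the laptop runs nonstop, that work divides perfectly across its four cores, and that a laptop core does the same work per hour as a supercomputer core.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def wall_clock_years(processor_hours: float, cores: int) -> float:
    """Years needed to deliver `processor_hours` on `cores` cores running nonstop.

    Assumes ideal scaling: every core contributes one processor-hour per hour.
    """
    if cores <= 0:
        raise ValueError("cores must be positive")
    return processor_hours / cores / HOURS_PER_YEAR


if __name__ == "__main__":
    # A 10-million-hour allocation on a quad-core laptop.
    years = wall_clock_years(10_000_000, cores=4)
    print(f"{years:.1f} years")  # prints "285.4 years"
```

Under these assumptions the result is a little over 285 years, which matches the figure quoted above.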

“The Department of Energy's supercomputers provide an enormous competitive advantage for the United States,” said Secretary Chu. “This is a great example of how investments in innovation can help lead the way to new industries, new jobs, and new opportunities for America to succeed in the global marketplace.”

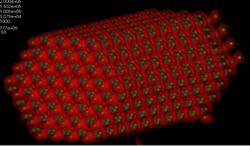

Simulation of a lean hydrogen-air mixture burning in a low-swirl injector. The colors indicate the presence of nitric oxide emissions near the highly wrinkled flame, while the gray structures at the flame base show the turbulent vorticity generated near the breakdown of the swirling flow from the injector.

Reducing Dependence on Fossil Fuels

One strategy for reducing U.S. dependence on petroleum is to develop new fuel-flexible combustion technologies for burning hydrogen or hydrogen-rich fuels obtained from a gasification process. John Bell and Marcus Day of Berkeley Lab's Center for Computational Sciences and Engineering were awarded 40 million hours on the Cray supercomputer "Jaguar" at the Oak Ridge Leadership Computing Facility (OLCF) for "Simulation of Turbulent Lean Hydrogen Flames in High Pressure" to investigate the combustion chemistry of such fuels.

Hydrogen is a clean fuel that, when consumed, emits mainly water, making it a potentially promising part of our clean energy future. Researchers will use the Jaguar supercomputer to better understand how hydrogen and hydrogen compounds could be used as a practical fuel for transportation and power generation.

Nanomaterials Have Big Solar Energy Potential

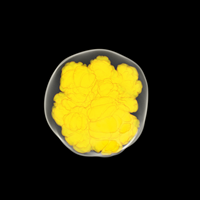

ZnO nanorods could serve as the foundation of future electronics, but scientists must first understand their many unique qualities. They can do this by running the linearly scaling three-dimensional fragment (LS3DF) method on a supercomputer. This image shows a ZnO nanorod calculated with LS3DF. With this computation, scientists found that the structure has a large surface dipole moment, a property that significantly changes its internal electronic structure.

Nanostructures, tiny materials 100,000 times finer than a human hair, may hold the key to improving the efficiency of solar cells—if scientists can gain a fundamental understanding of nanostructure behaviors and properties. To better understand and demonstrate the potential of nanostructures, Lin-Wang Wang of Berkeley Lab's Materials Sciences Division was awarded 10 million hours on the Cray supercomputer at OLCF. Wang's project is "Electronic Structure Calculations for Nanostructures."

Currently, nanoscale solar cells made of inorganic systems suffer from low efficiency, in the range of 1–3 percent. For nanoscale solar cells to have an impact on the energy market, their efficiencies must be improved to more than 10 percent. The goal of Wang's project is to understand the mechanisms of the critical steps inside a nanoscale solar cell, from how solar energy is absorbed to how it is converted into usable electricity. Although many of the processes are known, some of the corresponding critical aspects of the nanosystems are still not well understood.

Because Wang studies systems with 10,000 atoms or more, he relies on large-scale allocations such as his INCITE award to advance his research. To make the most effective use of his allocations, Wang and collaborators developed the Linearly Scaling Three-Dimensional Fragment (LS3DF) method. The method allows Wang to study systems that would otherwise take over 1,000 times longer on even the biggest supercomputers using conventional simulation techniques. LS3DF won an ACM Gordon Bell Prize in 2008 for algorithm innovation.

Advancing Supernova Simulations

Type Ia supernova simulation developed using the CASTRO code. Image by Haitao Ma of UC Santa Cruz.

Berkeley Lab's John Bell is also a co-investigator on another INCITE project, "Petascale Simulations of Type Ia Supernovae from Ignition to Observables." The project, led by Stan Woosley of the University of California-Santa Cruz, uses two supercomputing applications developed by Bell's team: MAESTRO, which models the convective processes inside certain stars in the hours leading up to ignition, and CASTRO, which models the massive explosions known as Type Ia supernovae. The project received 50 million hours on the Cray supercomputer at OLCF.

Type Ia supernovae (SN Ia) are the largest thermonuclear explosions in the modern universe. Because of their brilliance and nearly constant luminosity at peak, they are also a "standard candle" favored by cosmologists to measure the rate of cosmic expansion. Yet, after 50 years of study, no one really understands how Type Ia supernovae work. This project aims to use MAESTRO and CASTRO to model these exploding stars from beginning to end.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
