Taking the 'Large' out of Large Hadron Collider

Computational breakthrough hastens modeling of 'tabletop accelerators'

August 9, 2010

By Margie Wylie

Contact: cscomms@lbl.gov

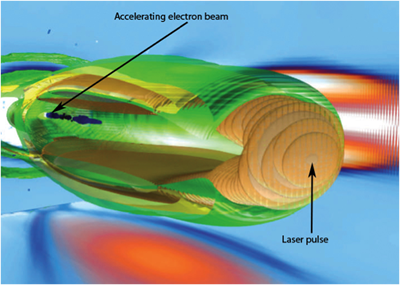

This 3D simulation shows how laser pulses create plasma wakes that propel electrons forward, much as a surfer is propelled forward by an ocean wave. Laser wakefield acceleration promises electron accelerators that are thousands of times more powerful than, yet a fraction the size of, conventional radio frequency devices.

Particle accelerators like the Large Hadron Collider (LHC) at CERN are the big rock stars of high-energy physics—really big. The LHC cost nearly USD$10 billion to build and its largest particle racetrack (27 km in circumference) stretches across a national border. However, a recent breakthrough in computer modeling may help hasten the day when accelerators thousands of times more powerful can be built in a fraction of the space—and for significantly less money.

Researchers computing at the National Energy Research Scientific Computing (NERSC) Center have sped up the modeling, and thus the design, of experimental laser wakefield accelerators by a factor of hundreds. The team, led by principal investigator Warren Mori of the University of California at Los Angeles and his collaborator Luis Silva of the Instituto Superior Tecnico (IST) in Lisbon, Portugal, published its findings in Nature Physics in April.

Laser wakefield acceleration works by shooting powerful laser pulses through a cloud of ionized gas (plasma). The pulse creates a wave (or wake) on which introduced electrons "surf," much as human surfers ride ocean waves. Using this method, researchers have demonstrated acceleration gradients 1,000 times greater than those of conventional methods.

Experiments using laser wakefields have so far spanned no more than a few centimeters, but if scientists successfully extend that to meters and can string several laser wakefield stages together, an accelerator more powerful than the LHC would theoretically require a wake path only 10-100 meters long. Of course, there's a lot more to sophisticated colliders than raw acceleration, said Mori. That's why the most immediate (and perhaps greatest) promise of the technology lies in the many other uses of electron accelerators today.

Computer simulations play a critical role in designing laser wakefield experiments, allowing researchers to explore designs before building and testing them, and to avoid some potentially costly mistakes along the way. However, completing the calculations for modeling longer laser-produced wakes, around a meter in length, would require a prohibitive amount of computing power, not to mention time.

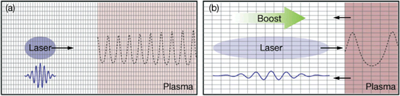

To address this problem, a team led by Mori and Silva turned to a quirk of special relativity. Here's how it works: When objects are moving at or near the speed of light, each experiences time, space and sometimes even the order of events differently. Yet even in this mixed-up state, the same laws of physics apply. So, rather than carrying out their computer simulations in a standard frame of reference (the plasma being more or less stationary and the laser beam moving through it at the speed of light), the researchers used a moving frame. That is, they simulated the plasma moving toward the beam at just under the speed of light.

Due to a relativistic effect called Lorentz contraction, the plasma in this configuration shrinks to one-tenth its length while the laser's wavelength grows ten times longer. In this "Lorentz-boosted" frame of reference, the code need calculate only about 1/100th the number of time-slices normally necessary to create a simulation. Depending on the simulation, Mori's team was able to calculate simulations at rates 100 to 300 times faster than in a standard frame of reference. That's something like recording a 100-minute feature film in a minute or less.

The Lorentz-boosted frame makes it possible to simulate longer stretches of plasma than ever. "With this code we can simulate lasers that aren't yet possible to build," said Silva. That's crucial to scientists who are racing to build lasers powerful enough to keep electrons surfing longer and thus reaching higher speeds. The team's code can help inform critical design decisions: "For example, you could tightly focus a laser or make the spot size wider; there are tradeoffs to doing each of these for a given laser that could be developed," said Silva. "Speeding up simulations lets researchers test a wider range of possibilities in less time."

How Lorentz-boosted frames work. This illustration shows a numerical grid for a laser wakefield accelerator simulation in two different frames of reference: a standard laboratory frame (a) at left and a relativistic Lorentz-boosted frame (b) at right. On the left, the plasma (the pink-colored section of the grid) is stationary. On the right, the plasma is moving near the speed of light towards the laser pulse. The dotted line represents the wake on which electrons "surf" to high energies. In the boosted frame, the plasma contracts, becoming denser, while both the laser and its wake through the plasma stretch out. The boosted frame still calculates the same number of points per wavelength, but for fewer waves, reducing by a factor of hundreds the number of calculations required.
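The scaling behind the boosted frame can be illustrated with a little arithmetic. The following is a minimal sketch, not the team's OSIRIS code: the function names are hypothetical, and the assumption that the plasma shrinks and the laser wavelength stretches by the same factor (the Lorentz factor) is the article's own round-number simplification. Real simulations differ, which is consistent with the 100 to 300 times speedups reported.

```python
import math


def lorentz_factor(beta: float) -> float:
    """Lorentz factor for a frame moving at beta times the speed of light."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


def beta_for_gamma(gamma: float) -> float:
    """Speed (as a fraction of light speed) that gives a chosen Lorentz factor."""
    if gamma < 1.0:
        raise ValueError("gamma must be >= 1")
    return math.sqrt(1.0 - 1.0 / (gamma * gamma))


def step_reduction(gamma: float) -> float:
    """Rough reduction in time-slices under the article's simplification.

    Assumption: plasma length shrinks by gamma and the laser wavelength
    stretches by gamma, so the number of wavelengths to step through
    drops by roughly gamma squared.
    """
    plasma_shrink = gamma        # plasma is gamma times shorter
    wavelength_stretch = gamma   # laser wavelength is gamma times longer
    return plasma_shrink * wavelength_stretch


if __name__ == "__main__":
    gamma = 10.0  # the article's "ten times" example
    beta = beta_for_gamma(gamma)
    print(f"boost speed: {beta:.5f} of light speed")
    print(f"approximate reduction in time-slices: {step_reduction(gamma):.0f}x")
```

With the article's factor of ten, the frame must move at roughly 99.5 percent of the speed of light, and the estimate reproduces the "about 1/100th" figure quoted above.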

That being said, the technique is still compute-intensive, said Samuel Martins, a doctoral candidate at IST and the researcher in charge of running the simulations at Berkeley Lab's NERSC. That's where supercomputers come in: "The scheme reduces the time a simulation takes to run, but it doesn't reduce the total memory required, so you typically still need a large number of processors," said Martins. The team computed on NERSC's Franklin supercomputer using up to 16,000 processors simultaneously for a total of about 2 million processor hours. "Our experience with NERSC was very positive, particularly after the initial setup period of the Franklin supercomputer," said Martins. "The technical staff were helpful and worked with us to fine-tune our OSIRIS framework for the NERSC supercomputers."

The idea of using a Lorentz-boosted frame to speed up this type of calculation was initially explored in the 1990s by Mori's group at UCLA, but, after unsuccessful attempts to apply the technique, the project was abandoned. In 2007, Jean-Luc Vay of Berkeley Lab was the first to successfully apply Lorentz-boosted frames to particle-beam interactions. The publication of his work, which suggested the technique could be used to model free electron lasers and laser-plasma accelerators, set off a flurry of related work and inspired Mori, Silva and their team to try again. As a result, they successfully applied the concept to laser-plasma accelerators through their own modeling code, called OSIRIS. Since Vay's 2007 publication, the Lorentz-boosted frame has been adopted by laser-plasma accelerator designers around the world.

Related links

Reframing Accelerator Simulations

Laser Wakefield Acceleration: Channeling the best beams ever

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
