Visualizing Oil Dispersion

Software Developed by Berkeley Lab Computational Researchers Provides Insights about Pollutant Transport near Deepwater Horizon Site

October 31, 2012

Contact: Linda Vu, lvu@lbl.gov, +1 510 495 2402

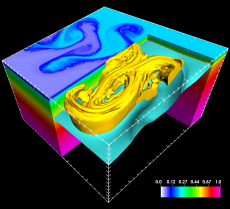

Using software developed by Berkeley Lab computer scientists, FTLE in VisIt, researchers studying currents in the Gulf of Mexico found that small-scale turbulence in the water directly impacts the transport of pollutants. In this case, the small-scale turbulence is depicted as a yellow swirl on top of the teal cutout. (Image courtesy of Tamay Özgökmen, University of Miami)

In April 2010, an explosion at the Deepwater Horizon offshore oil rig caused about 53,000 barrels of crude oil to spew into the Gulf of Mexico every day for nearly three months. At the time, several computer models predicted that ocean currents would eventually carry this oil thousands of miles along the Atlantic seaboard, a scenario that could devastate economies and ecosystems across the Eastern United States.

But when these forecasts didn’t pan out, scientists realized that their computer models were missing some critical information. Using visualization software developed for the fusion energy research community by computer scientists at the Lawrence Berkeley National Laboratory (Berkeley Lab), oceanographers found that they needed to factor in the interactions between deep, middle and surface ocean currents to successfully track oil dispersion in the Gulf of Mexico.

“We originally developed these visualization techniques to help physicists understand how magnetic fields influence the velocity of particles inside of a tokamak. The fact that our work also helps oceanographers study oil dispersion in the Gulf of Mexico goes to show the far-reaching impact of this Department of Energy (DOE) investment,” says Hank Childs, a staff scientist in the Berkeley Lab’s Visualization Group.

The Challenge: Modeling Dispersion

“Because the Deepwater Horizon incident was a scenario where oil spews from a very small area 1500 meters at the ocean’s bottom, there are many complex variables to consider when forecasting dispersion,” says Tamay Özgökmen, professor at the University of Miami’s Rosenstiel School of Marine and Atmospheric Science.

He notes that researchers need to take into account how deep ocean currents (influenced by Earth’s rotation), water temperature differences, and the complex interactions between the deep, middle and shallow ocean affect the oil’s rise to the surface. They must also factor in the role of atmospheric and surface ocean currents in pollutant dispersion.

With a better understanding of the mechanisms driving ocean currents, scientists can refine their computer models to accurately forecast dispersion, which in turn allows emergency responders to quickly develop strategies for containing the region’s next oil spill. This is precisely what the Consortium for Advanced Research on the Transport of Hydrocarbons in the Environment (CARTHE) is setting out to do with support from the Gulf of Mexico Research Initiative (GoMRI), BP’s 10-year, $500 million commitment to independent research into the Deepwater Horizon incident.

Using software developed by Berkeley Lab computer scientists, CARTHE researchers gained greater insight into the transport characteristics of multi-scale oceanic flows. They applied this result to design an experiment: earlier this year, the team released more than 300 custom-made buoys near the Deepwater Horizon site to study how surface currents in the Gulf of Mexico transport pollutants. Now, they are collecting valuable information about where pollutants go and how fast they get there.

“The ocean is very big, and the oil could either stay in the Gulf of Mexico, head toward the Atlantic Ocean or New Orleans. To contain this and future spills, we have to track how the pollutants move with various currents,” says Özgökmen, who also heads CARTHE. “So we run more than eight different models to see how turbulence, flow-fields and structures within those fields, drive currents. Visualization is crucial for analyzing this data, and VisIt made this process very easy.”

Seeing Is Understanding

Software developed by Berkeley Lab computer scientists, FTLE in VisIt, helped researchers studying currents in the Gulf of Mexico gain better insight into the transport characteristics of multi-scale oceanic flows. The researchers applied this result to design an experiment for understanding how surface currents in the Gulf of Mexico transport pollutants. Earlier this year, they released 300 custom-made buoys near the Deepwater Horizon site, and they are currently collecting valuable information about where pollutants go and how fast they get there. (Images courtesy of Gulf of Mexico Research Initiative)

Recently, Childs and his Berkeley Lab colleague Hari Krishnan collaborated with physicists at the Massachusetts Institute of Technology to incorporate a tool called FTLE (finite-time Lyapunov exponents) into VisIt. The tool allows researchers to characterize fluid flow by showing how two or more particles move away from each other as time progresses.
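To make the idea concrete, the following is a minimal sketch of how a finite-time Lyapunov exponent field can be computed. It is not VisIt's implementation. The function names (`velocity`, `advect`, `ftle_field`) are hypothetical, and the velocity field is an assumed analytic saddle flow (u = x, v = -y), chosen only because its FTLE is known to equal 1 everywhere. Particles are advected with a fourth-order Runge-Kutta scheme, and the separation rate is taken from the largest eigenvalue of the Cauchy-Green tensor of the resulting flow map.

```python
import numpy as np


def velocity(x, y):
    """Assumed steady saddle flow u = x, v = -y (illustration only)."""
    return x, -y


def advect(x, y, t_end, n_steps=200):
    """Advect particles with classical RK4; a negative t_end runs backward in time."""
    dt = t_end / n_steps
    for _ in range(n_steps):
        k1x, k1y = velocity(x, y)
        k2x, k2y = velocity(x + 0.5 * dt * k1x, y + 0.5 * dt * k1y)
        k3x, k3y = velocity(x + 0.5 * dt * k2x, y + 0.5 * dt * k2y)
        k4x, k4y = velocity(x + dt * k3x, y + dt * k3y)
        x = x + dt * (k1x + 2 * k2x + 2 * k3x + k4x) / 6.0
        y = y + dt * (k1y + 2 * k2y + 2 * k3y + k4y) / 6.0
    return x, y


def ftle_field(xs, ys, t_end):
    """Return the FTLE on the grid xs x ys for integration time t_end."""
    X, Y = np.meshgrid(xs, ys, indexing="ij")
    fx, fy = advect(X, Y, t_end)  # flow map: where each seed particle ends up

    # Gradient of the flow map with respect to the seed positions.
    dfx_dx, dfx_dy = np.gradient(fx, xs, ys)
    dfy_dx, dfy_dy = np.gradient(fy, xs, ys)

    # Entries of the symmetric Cauchy-Green tensor C = F^T F.
    c11 = dfx_dx**2 + dfy_dx**2
    c12 = dfx_dx * dfx_dy + dfy_dx * dfy_dy
    c22 = dfx_dy**2 + dfy_dy**2

    # Largest eigenvalue of a symmetric 2x2 matrix, in closed form.
    trace = c11 + c22
    det = c11 * c22 - c12**2
    lam_max = 0.5 * trace + np.sqrt(np.maximum(0.25 * trace**2 - det, 0.0))

    # FTLE: exponential rate at which neighboring particles separate.
    return np.log(np.sqrt(lam_max)) / abs(t_end)


xs = np.linspace(-1.0, 1.0, 21)
ys = np.linspace(-1.0, 1.0, 21)
forward = ftle_field(xs, ys, 2.0)    # repelling structures
backward = ftle_field(xs, ys, -2.0)  # attracting structures
```

In a real ocean or plasma dataset the velocity would come from simulation output rather than a formula, and ridges of high FTLE values would mark the boundaries between regions that transport material differently.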

In theory, physicists could produce an inexhaustible stream of energy by the same process that powers the sun: fusion. Heating up hydrogen atoms to more than 100 million degrees centigrade causes them to become a gaseous stew of electrically charged particles, called plasma. Powerful magnets confine and compress these particles until they fuse, releasing energy in the process.

Unfortunately, scientists do not currently understand the behavior of plasma well enough to effectively confine it. VisIt’s new capabilities could help them move closer to this goal. By visualizing how two or more particles move away from each other in time, physicists can characterize how magnetic fields influence the behavior of particles inside a tokamak, a doughnut-shaped “pot” used to contain plasma.

As it turns out, this functionality is also extremely useful for studying ocean currents and tracking oil dispersion in the Gulf of Mexico. “With a few clicks we released a bunch of particles into a field, and advected them forward and backward in time to see the structures driving it,” says Özgökmen. “VisIt allows us to visualize this in 2D or 3D, and the best part is that all of these capabilities are contained in one piece of software.”

“Physicists studying nuclear fusion and oceanographers predicting pollutant dispersion in the Gulf of Mexico may be looking for different things, but from a computational science perspective their work falls into the field of flow visualization,” says Krishnan. “This is not uncommon; there are a lot of scientists out there that directly benefit from work that we’ve done with others. We build these capabilities into VisIt and let the users decide if they want to use them.”

Özgökmen’s team recently published a paper on their work in Ocean Modelling. Childs and Krishnan are both co-authors of the paper, titled “On Multi-scale Dispersion under the Influence of Surface Mixed Layer Instabilities and Deep Flow.”

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
