Organic Photovoltaics Experiments Showcase ‘Superfacility’ Concept

ALS, CRD, NERSC, ESnet Collaborate to Enable On-The-Fly HPC Data Analysis at Multiple Sites

March 17, 2015

Contact: Kathy Kincade, +1 510 495 2124, kkincade@lbl.gov

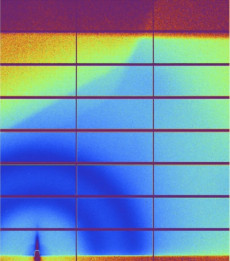

This image, taken from SPOT Suite during an organic photovoltaics data-analysis experiment at Berkeley Lab's Advanced Light Source in September 2014, shows a GISAXS pattern from a printed organic photovoltaic sample as it dried in the beamline. Images: Craig Tull

A collaborative effort linking the Advanced Light Source (ALS) at Lawrence Berkeley National Laboratory (Berkeley Lab) with supercomputing resources at the National Energy Research Scientific Computing Center (NERSC) and Oak Ridge National Laboratory via the Energy Sciences Network (ESnet) is yielding exciting results in organic photovoltaics research that could transform the way researchers use these facilities and improve scientific productivity in the process.

A series of organic photovoltaics experiments conducted at the ALS last September provided an opportunity to road-test the “superfacility” concept, which integrates multiple Department of Energy (DOE) Office of Science user facilities so that researchers can tap into scientific resources and expertise and analyze and share data with other users, all in real time and without leaving their office or lab.

“A superfacility is two or more facilities interconnected by means of a high performance network specifically engineered for science and linked together using workflow and data management software such that the scientific output of the connected facilities is greater than it otherwise could be,” said Eli Dart, a network engineer at ESnet, the DOE’s high-performance network. “This represents a new experimental paradigm that allows HPC resources to be brought to bear on experimental work being done at a light source beamline within the context of the experimental run itself. If scientists can make better use of their beam time—a very scarce resource—then the scientific value of the facility is dramatically increased.”

Analyzing Organic Photovoltaics

During the Science Data Pilot Projects program in the DOE booth at the SC14 conference last November, the Berkeley Lab team described how such a superfacility could be used to enhance scientific productivity—in this case, the development of a new method for fabricating organic photovoltaics (OPV) that could enable large-scale manufacture of these devices.

More flexible than traditional photovoltaics, OPVs hold promise for reducing energy costs and increasing conversion efficiency. But mass-producing OPVs has proven to be a challenge; most efforts to fabricate them have focused on spin-coating, a process often used in laboratory testing that does not lend itself to large-scale fabrication and significantly reduces the power conversion efficiencies (PCE) of the devices.

This hurdle prompted Alexander Hexemer, a staff scientist in the ALS’s Experimental Systems Group, and his research team to take a different approach: slot-die coating, a process commonly used in the commercial roll-to-roll manufacture of photovoltaics. Hexemer and Cheng Wang, together with Thomas Russell from UMass Amherst, developed a mini slot-die coating system to fabricate OPVs and to understand how the processing differs from that of materials that are currently spin-coated (PCEs above 10 percent).

“We built a miniature slot-die printer that we believe mimics roll to roll,” Hexemer said. “It consists of an actual industrial printer head we modified to fit in the beamline. A solvent polymer mixture is fed to the printer and deposited on silicon. We can also mix different solutions and then feed them into the printer head.”

Collaboration Critical

To study changes in the OPV’s morphology during the printing process, the researchers used the slot-die printer in conjunction with grazing-incidence small-angle X-ray scattering (GISAXS), an experimental technique used to probe nanostructures in polymers, and HipGISAXS, a flexible, massively parallel GISAXS simulation software package designed to “fit” the data in real time. SPOT Suite, a suite of data management, data analysis and simulation tools developed by Berkeley Lab’s Computational Research Division (CRD), orchestrated the data workflow, incorporating the HipGISAXS algorithms developed at the Lab’s Center for Advanced Mathematics for Energy Research Applications, known as CAMERA.

During a series of beamline experiments at the Advanced Light Source, the SPOT Suite portal developed by Berkeley Lab's Computational Research Division helped researchers manage real-time data transfers to NERSC via ESnet and then on to Oak Ridge (Titan) and back to NERSC via Globus.

“To manage big data you need several things: fast algorithms, really good software and workflow management tools, which in this case was SPOT Suite and CAMERA,” Hexemer said. “I know the scattering stuff, but we need the NERSC and CRD collaboration to build the real-time workflow for this.”

During an experiment in September 2014, scientists at the ALS prepared samples using OPV in a solution and then began printing the OPVs while simultaneously acquiring beamline data from the samples. As the experiment ran, the data was transferred to NERSC via ESnet, where it was put into the SPOT Suite portal and underwent reduction and remeshing to translate pixels into the correct reciprocal space. Using the Globus data management tool developed by the University of Chicago and Argonne National Laboratory, the output from those jobs was then sent to the Oak Ridge Leadership Computing Facility, where the analysis ran on the Titan supercomputer for six hours nonstop on 8,000 GPUs.
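For readers curious what translating pixels into reciprocal space involves, here is a minimal sketch of the standard grazing-incidence scattering geometry. It is not the SPOT Suite or HipGISAXS implementation. The function name, the argument names, and the assumption that detector rows increase upward from the direct-beam position are all illustrative choices made for this example.

```python
import math

def pixel_to_q(px, py, beam_x, beam_y, pixel_size_m,
               distance_m, wavelength_m, alpha_i_rad):
    """Map a detector pixel to (qx, qy, qz) in the sample frame, in 1/m.

    Assumptions (hypothetical, for illustration only): a flat detector
    perpendicular to the incident beam, (beam_x, beam_y) is the pixel hit
    by the direct beam, px increases horizontally, py increases upward.
    """
    k = 2.0 * math.pi / wavelength_m  # wavenumber of the X-rays

    # Offsets of the pixel from the direct beam, in meters.
    dx = (px - beam_x) * pixel_size_m
    dz = (py - beam_y) * pixel_size_m

    # Unit vector of the scattered beam in the lab frame
    # (x along the incident beam, y horizontal, z vertical).
    norm = math.sqrt(distance_m ** 2 + dx ** 2 + dz ** 2)
    kf = (distance_m / norm, dx / norm, dz / norm)

    # Scattering vector in the lab frame: q = k * (kf_hat - ki_hat),
    # with ki_hat = (1, 0, 0).
    q_lab = (k * (kf[0] - 1.0), k * kf[1], k * kf[2])

    # Rotate by the grazing incidence angle so that qz is normal to the
    # sample surface and qx lies in the surface along the beam direction.
    ca, sa = math.cos(alpha_i_rad), math.sin(alpha_i_rad)
    qx = q_lab[0] * ca + q_lab[2] * sa
    qy = q_lab[1]
    qz = -q_lab[0] * sa + q_lab[2] * ca
    return qx, qy, qz
```

Two quick sanity checks on such a mapping: the direct-beam pixel should give a zero scattering vector, and the specular reflection (at twice the incidence angle above the direct beam) should give a purely vertical q with magnitude 2k sin(α_i).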

HipGISAXS—a flexible, massively parallel GISAXS simulation software package—was used to “fit” the beamline's scattering data in real time.

“The typical way researchers have used NERSC and Titan in the past is they are perceived as independent facilities used for particular purposes, and coordinating one with the other is kind of a manual thing in terms of scheduling time, etc.,” said Craig Tull, who heads Berkeley Lab’s Physics and X-Ray Science Computing Group and coined the term "superfacility" to describe the linking of multiple facilities. “What we did here that was really unique was we were co-scheduling beamline time at the ALS and time and resources at NERSC and Oak Ridge, plus ESnet to string it all together. This was the first time we’d done anything like this with a beamline taking data and working on large-scale analysis on a supercomputer at the same time, the first time anybody had done anything like this.”

By capturing an image of the solution every second for five minutes at a time, the researchers were able to measure scattering patterns and watch the structures crystallize during the drying process. While the team was excited by these results, the amount of data generated during the experiments proved a challenge.

“The problem is the amount of data you get with this is absolutely staggering,” said Hexemer, who presented results from the ALS experiments at the March 2015 meeting of the American Physical Society. In three days the team collected more than 36,000 images, of which 865 were pulled out for analysis. In fact, the number of data files was so large and the complexity of the data so high that the researchers were not able to do real-time analysis during the experiments. They are now working to further optimize their algorithms to enable this capability in future beamline experiments.
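A little arithmetic puts these figures in perspective. The sketch below uses only numbers quoted in this article. The acquisition-time estimate assumes every image was taken at the one-per-second rate, which the article does not state, and all names are illustrative.

```python
# Figures quoted in the article.
FRAME_INTERVAL_S = 1          # one image per second
SEQUENCE_LENGTH_S = 5 * 60    # five minutes at a time
TOTAL_IMAGES = 36_000         # "more than 36,000", so treat as a lower bound
ANALYZED_IMAGES = 865

def frames_per_sequence():
    """Images captured in one five-minute sequence."""
    return SEQUENCE_LENGTH_S // FRAME_INTERVAL_S

def analyzed_fraction():
    """Share of the collected images that were pulled out for analysis."""
    return ANALYZED_IMAGES / TOTAL_IMAGES

def acquisition_hours():
    """Beam time spent capturing, ASSUMING every image took one second."""
    return TOTAL_IMAGES * FRAME_INTERVAL_S / 3600

if __name__ == "__main__":
    print(f"frames per sequence: {frames_per_sequence()}")
    print(f"fraction analyzed:   {analyzed_fraction():.1%}")
    print(f"acquisition time:    {acquisition_hours():.1f} h (lower bound)")
```

On these numbers, each five-minute sequence yields 300 frames, about 2.4 percent of the collected images were analyzed, and the images represent at least 10 hours of continuous capture over the three days.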

“I think this is reflective of an entire category of real-time computing problems, at large data rates, that many, many scientific disciplines will be facing in the future,” Tull said. “We are really trying to understand how to expand their portfolio to address this emerging need.”

“The superfacility model is not just hard assets, not just networks, computers and beamlines—it is getting all the people together,” Dart said. “What the national lab complex does is maintain a brain trust for the country. By linking these facilities together in this way, you get the advantage of being able to bring that expertise to bear on a problem right now. And if you discover that you need to do this repeatedly, then you operationalize it so that each individual instance of it receives that expert attention. We’re not all the way there yet, but we’re working on it.”

Related Reading:

Photon Speedway Puts Big Data in the Fast Lane

Spot Suite Transforms Beamline Science

DOE Scientists Team Up to Demonstrate Scientific Potential of Big Data Infrastructure

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
