The Rise and Fall of Core-Collapse Supernovae

2D and 3D Models Run at NERSC and ORNL Shed New Light on What Fuels an Exploding Star

July 2, 2015

Contact: Kathy Kincade, +1 510 495 2124, kkincade@lbl.gov

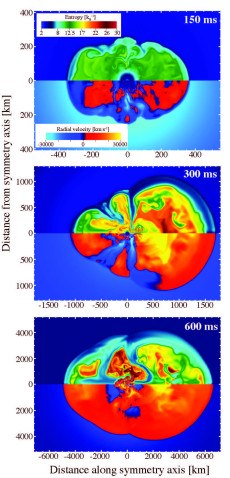

2D simulations of the evolution of the entropy (upper half) and radial velocity (lower half) of a supernova explosion at 150, 300 and 600 milliseconds. Red material is moving outward; blue material is moving inward. Simulations run at NERSC (Hopper and Edison) using Chimera, a core-collapse supernova simulation code. Image: Raph Hix

Few events in the cosmos are as powerful as the explosion of a massive star in a supernova. For several weeks, the brightness of that single event can rival that of an entire galaxy. As a result, supernovae of various types are often used as "standard candles" in determining the size and expansion rate of a universe powered by dark energy.

Despite decades of research, however, understanding the explosion mechanisms of supernovae remains among the great challenges of astrophysics—in part because of the complexity of the computations involved. For core-collapse supernovae, which mark the death of a massive star, the heart of the matter—the core, if you will—lies in teasing apart the physics of the neutrinos that are produced in the neutron star that is born during the supernova explosion.

“The numerical study of supernova is a subject that goes back almost 50 years,” said Raphael Hix, an astrophysicist at Oak Ridge National Laboratory (ORNL) who studies core-collapse supernovae. “The challenge has always been that the real problem is much more complicated than we can fit in any computer we have. So with each new generation of computers, we get to do the problem with a little more realism than before.”

A wide variety of stars can die as core-collapse supernovae: stars anywhere from 10 to perhaps 40 solar masses, with different internal structures, Hix explained. Furthermore, stars forming in our galaxy today are not the same as stars of the same mass that formed 13 billion years ago, at the beginning of the universe.

“Our ability to wander through the parameter space of these kinds of stars that die is very limited in 3D, even with good-sized allocations on the fastest machines in the world. It’s too expensive,” Hix said. “So we’ve been trying to do one or two 3D simulations a year and then dozens of 2D simulations to help us figure out what the important 3D simulations are.”

Comparisons Critical

In a study published in AIP Advances, Hix and colleagues from ORNL, University of Tennessee, Florida Atlantic University and North Carolina State University compared 3D models run at ORNL with 2D models run at NERSC to shed new light on the explosion mechanism behind core-collapse supernovae.

“The principal difference in the supernova research we’re doing now, compared to the previous generation (on earlier supercomputers), is spectral neutrino transport,” Hix said. “In the past, in multidimensional simulations, you would have treated neutrinos in terms of something like the mean temperature or energy, a single parameter for the whole spectrum of neutrinos as a function of space. But now we actually keep track of more than 20 different bins of neutrinos at different energies.”

This advance led Hix and his co-authors to realize that previous core-collapse supernova models overestimated the speed of the process, producing explosions too rapidly.

“The process has been called ‘delayed explosion mechanism’ since the 1980s, but what we are finding is that the explosion is more delayed than we previously thought,” he said. “Back then, the delay was 50 milliseconds, now it’s 300 milliseconds. So we think—and it seems to be borne out by the 3D model we’ve run at ORNL and compared to 2D models at NERSC—that this is a general trend, that the better the physics, the slower the explosions are to develop.”

This finding has a number of practical implications, he added. For example, the size of the neutron star left behind depends on how soon the explosion goes off, because once the explosion pushes away the outer parts of the star, the neutron star stops growing. The timing also affects which layers of the star are ejected and which become trapped within the neutron star.

Comparing the 2D and 3D models has been critical in helping Hix and his collaborators identify trends in observable quantities, such as the explosion energy, more effectively and efficiently, Hix emphasized.

“Once we have a handful of 3D models, we will know a lot more about what the 2D models are telling us, such as which things you can take from a 2D model that are good and which are wrong,” he said. “Because the results of the models are close to the observed values like the explosion energy and mass of radioactive nickel expelled, we feel we are making progress, that these models have some semblance of reality.”

Hix and his colleagues are looking forward to the next generation of supercomputers, which should allow them to drill down even further into the inner workings of core-collapse supernovae and create more usable 3D models in much less time.

“In the next decade, we will see three-dimensional models that finally include all of the essential physics that our current models suggest is necessary to understand core-collapse supernovae,” they concluded in the AIP Advances paper.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
