High-performing hybrid

Berkeley Lab prepares for quantum-classical computing future

June 1, 2016

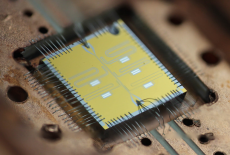

The multi-qubit chip has five superconducting transmon qubits and associated readout resonators. When cooled to near absolute zero, such a device can compute things like quantum simulations of advanced materials. Qubit chip image courtesy of the Quantum Nanoelectronics Laboratory, Lawrence Berkeley National Laboratory.

Jarrod McClean and his Lawrence Berkeley National Laboratory colleagues want to simulate and predict the chemistry and properties of advanced compounds before scientists go into the lab to make them.

But they have a problem. Today’s high-performance computing (HPC) systems aren’t powerful enough to model materials with the detail and accuracy the researchers need: at the level of electron arrangements and interactions in molecules. Classical computers, whose memory is composed of bits, could take years to perform such calculations, even on the most powerful machines available.

Meanwhile, sufficiently powerful quantum computers – ones using the strange physics governing the subatomic to speed calculations – could do the job, potentially solving the problems McClean works on exponentially faster than classical HPC systems. But it will be years before large-scale quantum computers are a reality.

That frustration led McClean, a Luis Alvarez Fellow in Computing Science, and his colleagues in the lab’s Computational Chemistry, Materials and Climate Group to pursue a third course: yoking quantum processors with classical HPC systems into a hybrid computer. They’re developing algorithms – instructions, like recipes, computers follow to solve problems – that could capitalize on the combination.

“We focus on a way to take what the quantum device is best at and utilize it only for that piece and take the other pieces of the algorithm that are more mundane,” like standard math and decision-making, “and offload that to our already very powerful classical computers,” McClean says.

Each system doing what it’s best suited for “will let us reach the solution to these applications we’re interested in much sooner than if we waited for a quantum computer to be able to do all of it on its own.”

McClean described the research in a talk last fall at SC15, the international supercomputing conference in Austin, Texas.

In standard computing, data is binary, with each bit cast as either a 1 or a 0 and represented by switches or magnetic states. In comparison, quantum computing bits, or qubits, use electrons’ quantum properties to represent 1, 0 or both simultaneously, a state called superposition. Qubits also can be entangled, acting as if they’re connected although physically separated.

“You’re able to operate on this device while the bit is in that superposition of 0 and 1 to get both of the computations done” simultaneously, says McClean, a Department of Energy Computational Science Graduate Fellowship alumnus.

If researchers can harness the power of this solution superposition – a delicate task – quantum computers may be able to solve huge problems thousands of times faster than classical systems.
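As a rough illustration of the superposition and entanglement described above, a qubit's state can be written as a short list of amplitudes whose squared magnitudes give the measurement probabilities. The sketch below simulates this on an ordinary computer; the function names are hypothetical and chosen only for this example, and it is not drawn from the Berkeley Lab team's code.

```python
import math

def probabilities(amplitudes):
    """Return the measurement probability of each basis state."""
    return [abs(a) ** 2 for a in amplitudes]

def hadamard(state):
    """Apply a Hadamard gate to a one-qubit state (amp of |0>, amp of |1>)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

# Start in the definite state |0>, then put the qubit into an equal
# superposition of 0 and 1.
zero = (1.0, 0.0)
plus = hadamard(zero)
plus_probs = probabilities(plus)
print(plus_probs)  # roughly one half for each outcome

# A two-qubit entangled (Bell) state: amplitudes for 00, 01, 10, 11.
# Only 00 and 11 can ever be observed, so the two qubits always agree.
s = 1 / math.sqrt(2)
bell = (s, 0.0, 0.0, s)
bell_probs = probabilities(bell)
print(dict(zip(["00", "01", "10", "11"], bell_probs)))
```

In the entangled state, measuring one qubit fixes the outcome of the other, which is the "connected although physically separated" behavior the article mentions.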

Part of the speedup comes from the very nature of quantum processors. For example, the quantum chemistry calculations McClean and his colleagues perform must account for the states, energy levels and interactions of electrons – a tricky task because under quantum physics electrons can be either waves or particles. Each electron also interacts with every other electron, creating a thorny many-body problem.

On a standard HPC system, such calculations are demanding and necessarily imprecise. But quantum processors use physics the problems are grounded in, making them well-suited to solve them. In other words, classical computers often struggle to simulate quantum systems, but quantum computers don’t because they’re quantum systems themselves.
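One way to see why classical machines struggle is to count the memory a brute-force simulation would need. The sketch below assumes the simplest approach, storing one double-precision complex number (16 bytes) for each of the 2^n amplitudes of an n-qubit state. That storage scheme and the qubit counts are illustrative assumptions; other classical methods avoid holding the full state, usually at some cost in accuracy.

```python
def state_vector_bytes(num_qubits, bytes_per_amplitude=16):
    """Bytes needed to store every amplitude of an n-qubit state.

    Assumes a full state-vector simulation with one complex number
    (16 bytes by default) per basis state.
    """
    return (2 ** num_qubits) * bytes_per_amplitude

# Illustrative qubit counts: each added qubit doubles the requirement.
for n in (10, 30, 50, 150):
    print(f"{n:>3} qubits -> {state_vector_bytes(n):.3e} bytes")
```

Because the requirement doubles with every added qubit, it quickly outgrows any conceivable classical memory, while a quantum processor holds the same state in the qubits themselves.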

McClean compares it to puppetry. In a sense, researchers program the quantum processor to behave as a controllable analog to the quantum molecular system they want to study. “Like a marionette puppeteer would make a puppet dance for them, you want (the analog quantum system) to dance” like the real system. “Then you ask your analogous system only interesting questions, like did it react or not? Does it have a lower energy? Is this the most stable configuration?”

Other researchers are studying the only commercially available quantum computer, made by D-Wave Systems. It uses quantum annealing, an approach that’s limited to solving optimization problems – although many questions can be recast to fit that type. The goal: to find a solution that maximizes or minimizes a quantity or property – essentially identifying the quickest or lowest-cost way to do something.

It’s unclear, however, whether the D-Wave actually exhibits quantum behavior or provides a definite advantage over classical computers, partly because it doesn’t meet the definition of a universal quantum computer. Other researchers, with support from the DOE Advanced Scientific Computing Research program, are using supercomputers to investigate these questions. (See sidebar, “Quantum contest.”)

The scientists McClean and his colleagues collaborate with are developing circuit-model quantum processors, devices that are analogous to classical processors. They’re believed capable of a broader range of computational tasks than quantum-annealing devices but aren’t as advanced yet.

The complexity of quantum physics leads to a virtually limitless combination of interactions to calculate and explore. A classical HPC system would find the task impossible, but it’s believed a big enough quantum processor, with the ability to represent multiple states in a single qubit, could operate on all possible inputs simultaneously to find a solution.

That strength, however, also is an obstacle to creating hybrid classical-quantum algorithms. A quantum processor can tackle a large set of potential solutions, but “at the end we can only sample out small amounts of information from that because we need to convert it back to the classical information we know how to understand,” McClean says.

The algorithms he and his colleagues are developing first use classical computing to define the problem. The quantum processor next prepares an analog to the system the researchers are studying, such as a molecule. The algorithm probes the structure to gather classical information about properties the researchers are studying.

The classical computer uses that information to evaluate the objective function – a value, such as the molecule’s energy, that researchers want to minimize or maximize – and to judge how close the algorithm is to the best attainable value. Based on that determination, the classical computer updates inputs governing the analog system. The quantum processor probes the updated system again, gathering new information to feed back to the classical processor.

The algorithm undergoes multiple iterations until it converges on an answer. That interplay is the biggest programming challenge in wedding quantum and classical processors.
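The feedback loop described above can be sketched in a few lines. This is a toy under stated assumptions, not the researchers' algorithm: the "quantum processor" is simulated classically as a single qubit with one adjustable angle, the "energy" is the average of a +1/-1 measurement, and the classical update is plain gradient descent. All function names and parameter values are hypothetical.

```python
import math
import random

def measure_energy(theta, shots, rng):
    """Stand-in for the quantum processor (simulated classically here).

    Prepares the one-qubit state cos(theta/2)|0> + sin(theta/2)|1> and
    estimates its energy by averaging many +1/-1 measurement outcomes.
    """
    p0 = math.cos(theta / 2) ** 2  # probability of measuring 0 (energy +1)
    outcomes = (1 if rng.random() < p0 else -1 for _ in range(shots))
    return sum(outcomes) / shots

def hybrid_minimize(theta=0.3, steps=60, shots=2000, learning_rate=0.4, seed=0):
    """Alternate quantum probes and classical updates to lower the energy."""
    rng = random.Random(seed)
    for _ in range(steps):
        # Quantum step: probe the system at two shifted settings.
        e_plus = measure_energy(theta + math.pi / 2, shots, rng)
        e_minus = measure_energy(theta - math.pi / 2, shots, rng)
        # Classical step: estimate the slope and update the input angle.
        gradient = (e_plus - e_minus) / 2
        theta -= learning_rate * gradient
    return theta, measure_energy(theta, shots, rng)

theta, energy = hybrid_minimize()
print(f"angle = {theta:.3f}, estimated energy = {energy:.3f}")
```

In this toy the lowest possible energy is -1, reached when the angle equals pi. Each energy estimate comes from a limited number of measurements, which mirrors the point that only small amounts of classical information can be sampled from the quantum device at a time.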

To test their hybrid algorithms, McClean and his colleagues work with quantum computing processor researchers such as Irfan Siddiqi, whose group is based both in Berkeley Lab’s Materials Science Division and in the Quantum Nanoelectronics Laboratory at the University of California, Berkeley.

The programmers first code a simulation on a classical computer using parameters from the hardware developers. When they’re satisfied with their algorithm, it’s run on the quantum processor. “Then we check: Is the device performing as expected? Is the algorithm performing as expected, or is there some nuance that we missed because our classical computer doesn’t perfectly simulate this quantum device?”

Even with effective algorithms, however, the kinds of problems McClean and his colleagues want to tackle would require a quantum computer with 150 to 200 qubits.

That seems distant. Siddiqi’s group is developing quantum processors based on superconducting technology. The best such devices have just nine qubits. Nonetheless, that represents a big advance from just one or two qubits a few years ago. Researchers think they can soon hit 81 qubits.

A quantum processor that big once was thought nearly impossible, McClean says. “Some researchers are even promising 40 qubits by the end of the year.” That’s ambitious, “but I would be very happy if it happens.”

This story was originally published in ASCR Discovery: http://ascr-discovery.science.doe.gov/2016/06/high-performing-hybrid/

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
