Mobiliti: A Game Changer for Analyzing Traffic Congestion

New Software Tool Can Run Large-Scale Traffic Simulations in Seconds

February 22, 2019

Keri Troutman, khtroutman@lbl.gov, 510-486-5071

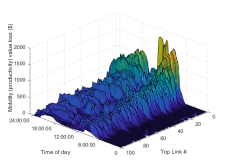

The Mobiliti tool provides the estimated value of productivity loss on the most congested areas (links) of the Bay Area over the course of a simulated day.

Urban transportation systems consume enormous resources in terms of both energy usage and productivity loss due to traffic congestion. Emerging traffic simulation technologies could help relieve the ever-increasing congestion with tools like dynamic rerouting and advanced traffic modeling. But these tools require accurate simulations of large-scale urban transportation systems, which is a challenging task—the computational demand of processing massive numbers of events and interactions between commuters requires more computational power than traditional computing solutions can provide.

To address this challenge, researchers from Lawrence Berkeley National Laboratory (Berkeley Lab) have developed a software tool that uses supercomputing resources at the National Energy Research Scientific Computing Center (NERSC) to accurately simulate the movement of the San Francisco Bay Area population through its road networks and provide estimates of the associated congestion, energy usage, and productivity loss.

With a desire to understand transportation systems more thoroughly and build energy- and mobility-efficient controls, researchers in government and industry are interested in developing new methods to model large-scale transportation networks. Current commercially available modeling tools can handle only pieces of the urban environment because of the high computational demands of simulating an entire metropolitan area, which leaves city planners and transportation engineers with only a partial understanding.

This roadblock prompted researchers in Berkeley Lab’s Computational Research Division (CRD) to develop a simulation tool that could go beyond what’s currently available. Their solution, Mobiliti, is a proof-of-concept, scalable transportation system simulator that implements parallel discrete event simulations on NERSC’s Cori supercomputer. Millions of nodes, links, and agents simulate the movement of the population through the Bay Area road network and provide estimates of the associated congestion, energy usage, and productivity loss, all within a minute. The researchers plan to open source Mobiliti later this year after they publish more papers about their work.

This research is a collaboration with the University of California, Berkeley's Institute of Transportation Studies, and is part of Berkeley Lab's Sustainable Transportation Initiative, managed by Berkeley Lab's Energy Technologies Area. This lab-wide, multi-institution effort is funded by the Department of Energy's Vehicle Technologies Office and is part of a major DOE initiative to use supercomputers to improve the energy efficiency of transportation, led by Jane Macfarlane, who also directs the Smart Cities Research Center at UC Berkeley. Bin Wang, another major contributor to Mobiliti, is a postdoctoral researcher at Berkeley Lab focusing on data and transportation systems.

Mobiliti simulates estimated vehicle flow rates across the Bay Area on a typical weekday morning.

“We’ve been talking with folks in government—transportation officials in San Francisco and San Jose counties—to see what types of questions they’d like answered with this technology,” said Cy Chan, a CRD computer research scientist who is the computational science lead on the Mobiliti project. “We’re helping them evaluate current and future trends to see the impacts.”

Modeling Traffic Flow and Congestion Dynamics

Mobiliti’s parallel discrete event simulation defines agents in the Bay Area’s transportation system that interact with one another by sending events, each tagged with the simulation time at which it occurs. The simulator then processes events in order of increasing time stamp, so that each event sees the effects of everything that happened before it. Mobiliti uses this technique to model vehicle traffic congestion, with road network links as the agents and vehicles as the events. Each link agent is responsible for determining how long each vehicle takes to pass through it, using information such as vehicle flow rate and downstream link blockage. From this, the link agent calculates the time at which each vehicle departs and sends an event, carrying the correct arrival time stamp, to the agent responsible for the next link on the vehicle's route.
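The link-as-agent, vehicle-as-event idea can be illustrated with a minimal sequential sketch in Python. This is not Mobiliti's code: the `Link` class, its fixed travel time plus minimum headway congestion rule, and the `simulate` function are hypothetical stand-ins chosen only to show events being processed in time-stamp order.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Link:
    """Road link agent (hypothetical model: fixed travel time plus a minimum headway)."""
    free_flow_time: float                  # seconds to traverse an empty link
    min_headway: float                     # minimum seconds between departures
    last_departure: float = float("-inf")  # when the previous vehicle left

    def departure_time(self, arrival: float) -> float:
        # A vehicle leaves after its free-flow traversal, but no sooner than
        # one headway after the previous vehicle left this link.
        depart = max(arrival + self.free_flow_time,
                     self.last_departure + self.min_headway)
        self.last_departure = depart
        return depart


def simulate(links, routes, start_times):
    """Process vehicle-arrival events in order of increasing time stamp.

    links:       dict of link id -> Link
    routes:      dict of vehicle id -> list of link ids
    start_times: dict of vehicle id -> time the vehicle enters its first link
    Returns a dict of vehicle id -> time the vehicle finishes its route.
    """
    events = []  # heap of (time stamp, tie-breaker, vehicle id, route index)
    seq = 0
    for vehicle, start in start_times.items():
        heapq.heappush(events, (start, seq, vehicle, 0))
        seq += 1

    finish = {}
    while events:
        time, _, vehicle, i = heapq.heappop(events)
        depart = links[routes[vehicle][i]].departure_time(time)
        if i + 1 < len(routes[vehicle]):
            # Send an arrival event to the next link on the route.
            heapq.heappush(events, (depart, seq, vehicle, i + 1))
            seq += 1
        else:
            finish[vehicle] = depart
    return finish


links = {"a": Link(10, 2), "b": Link(5, 2)}
routes = {"v1": ["a", "b"], "v2": ["a", "b"]}
print(simulate(links, routes, {"v1": 0, "v2": 0}))  # {'v1': 15, 'v2': 17}
```

The second vehicle finishes two seconds later because it has to wait one headway behind the first on each link, which is the simplest form of the congestion interaction described above.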

“Mobiliti processes about four billion events in a simulation day, which might not sound like a lot, but for every event we’re processing a vehicle’s congestion pattern and determining how it contributes to a particular link, so we have to capture how it impacts all the other vehicles trying to access that link at the same time,” said Chan. “That’s the main challenge of parallelizing a discrete event simulation—you have to preserve the causality of the simulation. Optimistic parallel discrete event simulation allows us to achieve that and also reduce the amount of synchronization overhead compared to conservative approaches.”

The Mobiliti tool is a layered software stack. Mobiliti is the top application layer and implements the domain-specific aspects of the simulation, such as the traffic congestion model. Underneath is Devastator, a CRD-developed general-purpose parallel discrete event runtime that uses the Time Warp protocol developed by David Jefferson in 1982. The layer below Devastator is GASNet-EX, another CRD-developed library that provides high-performance communication primitives. Mobiliti can process 3.9 billion link traversal events in less than 30 seconds using 16,384 cores on NERSC’s Cori system, a rate of more than 130 million events per second.
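To give a feel for what an optimistic, Time Warp style runtime does, here is a toy Python sketch of a single link that processes arrivals as soon as they are received and rolls back when a "straggler" with an earlier time stamp shows up. It is an illustration under simplifying assumptions, not Devastator's API: the `OptimisticLink` class and its headway rule are hypothetical, and real Time Warp machinery such as anti-messages and global virtual time is left out.

```python
class OptimisticLink:
    """Toy optimistic link agent with state saving and rollback (hypothetical)."""

    def __init__(self, travel_time, headway):
        self.travel_time = travel_time
        self.headway = headway
        self.last_departure = float("-inf")
        # One entry per processed event, kept in arrival-time order:
        # (arrival time, state saved before processing, computed departure)
        self.log = []
        self.rollbacks = 0

    def _process(self, arrival):
        saved = self.last_departure
        depart = max(arrival + self.travel_time, saved + self.headway)
        self.last_departure = depart
        self.log.append((arrival, saved, depart))

    def receive(self, arrival):
        """Process an arrival immediately; roll back first if it is a straggler."""
        redo = []
        if self.log and arrival < self.log[-1][0]:
            self.rollbacks += 1
            # Undo every event with a later time stamp, restoring saved state.
            while self.log and self.log[-1][0] > arrival:
                undone_arrival, saved, _ = self.log.pop()
                self.last_departure = saved
                redo.append(undone_arrival)
        # Process the new event, then replay the undone ones in time order.
        for t in [arrival] + sorted(redo):
            self._process(t)

    def departures(self):
        return [(arrival, depart) for arrival, _, depart in self.log]


link = OptimisticLink(travel_time=10, headway=2)
for t in [0, 5, 1]:      # the arrival at t=1 is received out of order
    link.receive(t)
print(link.departures(), link.rollbacks)
```

After the rollback, the recorded departures match what strictly in-order processing would have produced, which is the causality guarantee the protocol is meant to preserve.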

The impact of dynamic rerouting on total system-wide trip durations as the penetration of rerouting capabilities varies.

By simulating a full day's worth of traffic demand on the road network (21.6 million trip legs), Mobiliti has been able to estimate the vehicle flow rates and resulting congestion on each of the 2.2 million links in the system. In recent work, the researchers applied the simulator to hypothetical scenario evaluations, such as major traffic incidents and dynamic traffic rerouting apps. Mobiliti has also been able to estimate the impact of dynamic rerouting on total daily fuel consumption and productivity loss, computing the amount of extra fuel consumed and the extra time spent due to congestion for the modeled vehicles.
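As a rough illustration of the "extra time spent due to congestion" calculation, the Python sketch below sums each trip's delay over its free-flow duration and converts the total into a monetary estimate. The function names, the trip tuples, and the value-of-time figure are all assumptions made for this example; the article does not describe the formula Mobiliti actually uses.

```python
def congestion_delay_hours(trips):
    """Total delay in hours over (actual_seconds, free_flow_seconds) pairs."""
    delay_seconds = sum(max(actual - free_flow, 0.0) for actual, free_flow in trips)
    return delay_seconds / 3600.0


def productivity_loss(trips, value_of_time_per_hour):
    """Delay multiplied by an assumed monetary value of one hour of travel time."""
    return congestion_delay_hours(trips) * value_of_time_per_hour


# Three hypothetical trips: (simulated duration, free-flow duration), in seconds.
trips = [(1800, 1200), (900, 900), (4200, 3000)]
print(congestion_delay_hours(trips))    # 0.5
print(productivity_loss(trips, 20.0))   # 10.0
```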

Many existing traffic simulation tools use a quite different technique called “traffic assignment”: given a particular demand profile, the program searches for the routing that reaches “user equilibrium,” a state in which no individual can reduce their time spent on the road by unilaterally changing their route or decisions.
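User equilibrium is easiest to see on a tiny network. The Python sketch below assumes two parallel routes whose travel times grow linearly with the number of vehicles using them and solves for the split at which both routes take the same time. The function name and the linear cost model are assumptions for illustration; production traffic assignment tools solve this over full networks with iterative methods.

```python
def two_route_equilibrium(demand, a1, b1, a2, b2):
    """Split `demand` vehicles over two routes with times t_i = a_i + b_i * x_i.

    Assumes b1 + b2 > 0. Returns (x1, x2, t1, t2).
    """
    # Equal travel times: a1 + b1*x1 = a2 + b2*(demand - x1), solved for x1.
    x1 = (a2 - a1 + b2 * demand) / (b1 + b2)
    # If one route is always faster, every vehicle uses it.
    x1 = min(max(x1, 0.0), demand)
    x2 = demand - x1
    return x1, x2, a1 + b1 * x1, a2 + b2 * x2


x1, x2, t1, t2 = two_route_equilibrium(1000, a1=10, b1=0.01, a2=15, b2=0.005)
print(round(x1), round(x2), round(t1, 2), round(t2, 2))
```

At the computed split both routes take the same time, so no driver gains by switching. Mobiliti's event-driven approach instead follows individual vehicles, which is what lets it capture responses to unexpected incidents.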

“We are more interested in simulating the emergent behavior of each individual vehicle as it flows through the road network in response to unexpected events (such as a major highway incident or a natural disaster), so that we’re able to look at things like the impact of dynamic vehicle rerouting at the scale of metropolitan areas,” said Chan. “The other software that’s out there right now can’t do that. Our main contribution is that we can do this type of emergent behavior simulation on a much larger scale in a much shorter execution time frame than previous work.”

NERSC is a DOE Office of Science User Facility.

Mobiliti was developed in cooperation with the UC Berkeley Institute of Transportation Studies. Mobiliti is sponsored by the U.S. Department of Energy (DOE) Vehicle Technologies Office (VTO) under the Big Data Solutions for Mobility Program, an initiative of the Energy Efficient Mobility Systems (EEMS) Program. The following DOE Office of Energy Efficiency and Renewable Energy (EERE) managers played important roles in establishing the project concept, advancing implementation, and providing ongoing guidance: David Anderson and Prasad Gupte.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
