One new foundation model developed by researchers at the National Energy Research Scientific Computing Center (NERSC) and collaborators represents major progress in the establishment of these models. OmniLearned, a new iteration of a previously published model known as OmniLearn, is both state-of-the-art in its original discipline of particle physics and the first foundation model known to also show significant promise in other scientific disciplines. A paper announcing the new work and its impact for high-energy physics was published in Physical Review D.

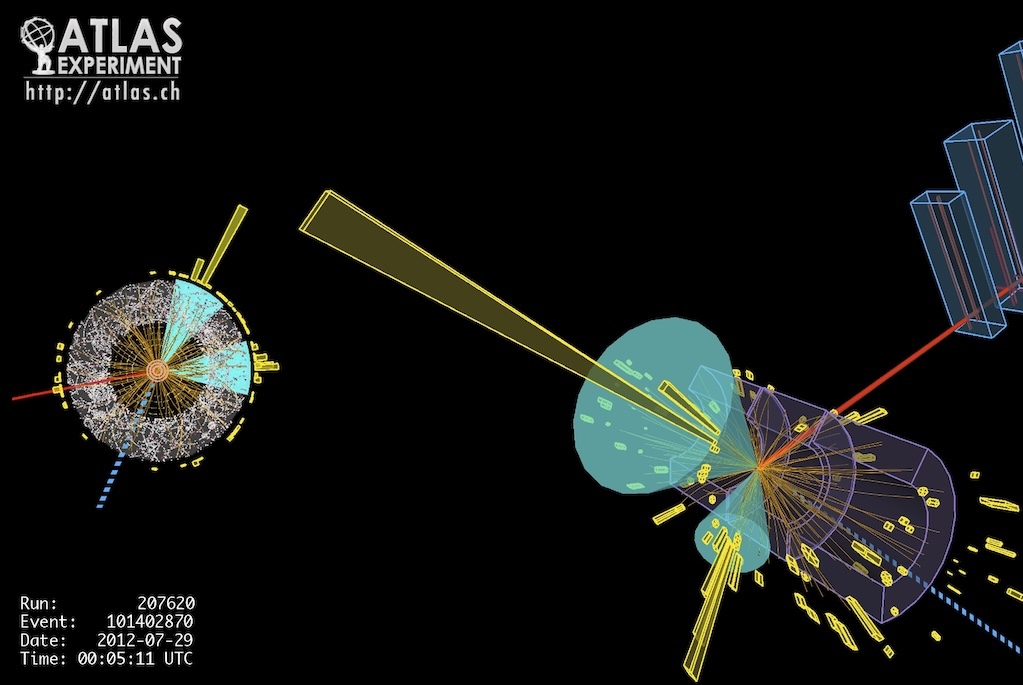

Both the original OmniLearn model and the new iteration, OmniLearned, were developed to study hadronic jets, which arise when quarks and gluons produced in particle collisions combine into a narrow, cone-shaped stream of particles. These jets are commonly found and studied at facilities like the Large Hadron Collider (LHC). An understanding of how they form and behave can inform researchers about the physical processes underpinning these particle collisions and offer insights into the fundamental forces known as the strong and weak forces. This is key knowledge for particle physics and our understanding of the universe more generally.

Iterating for improvement

The 2024 OmniLearn model, which was trained on NERSC’s Perlmutter supercomputer, represented a major leap forward for particle physics: proof that machine learning, and in particular foundation models, could provide the accuracy and precision needed for the data-intensive tasks common in the field. Further, OmniLearn’s relatively compact size – small enough to run on a single GPU – made it broadly accessible and usable for researchers. The new model, OmniLearned, builds on those successes, improving performance and demonstrating its usefulness in other branches of science as well.

“With OmniLearn, we were able to show that a single model trained on inexpensive simulations could lead to large improvements with high-fidelity simulations across experiments and even across collision systems,” said Ben Nachman, a researcher at SLAC National Accelerator Laboratory and an author on the paper. “I’m also excited that OmniLearn is able to facilitate anomaly detection at the level of individual particles, a feat that was previously impossible.”

To test the effectiveness of the OmniLearned model for particle physics applications, the researchers used a set of representative tasks and found that it improved upon the state of the art for each. Several changes to the original OmniLearn model boosted the newer iteration’s performance.

Moving the model from a patchwork of software products to a single common foundation – PyTorch – made it more accessible for users and took advantage of technology enhancements made between the development of OmniLearn and OmniLearned. The researchers also shifted from using graph neural networks to using a transformer model, which attends to pairs of particles in a similar way but scales more efficiently. Because of this efficiency, the researchers were able to scale their model to half a billion parameters.
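To make the idea of attending to pairs of particles concrete, here is a minimal sketch of single-head scaled dot-product self-attention over a toy jet. It is written in plain Python rather than PyTorch so it runs without dependencies, and it is not OmniLearned's actual architecture: the two-number particle features are made up for illustration, and the learned query, key, and value projections of a real transformer are replaced by the identity.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(particles):
    """Single-head scaled dot-product self-attention with identity projections.

    particles: list of equal-length feature vectors, one per particle.
    Returns (weights, outputs); weights[i][j] is how strongly particle i
    attends to particle j, so every pair of particles gets a score.
    """
    dim = len(particles[0])
    scale = 1.0 / math.sqrt(dim)
    weights = []
    for query in particles:
        scores = [scale * sum(q * k for q, k in zip(query, key))
                  for key in particles]
        weights.append(softmax(scores))
    outputs = [
        [sum(row[j] * particles[j][d] for j in range(len(particles)))
         for d in range(dim)]
        for row in weights
    ]
    return weights, outputs


# Hypothetical toy jet: three particles, two illustrative features each.
jet = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
weights, outputs = self_attention(jet)
```

In this toy jet the first particle attends more strongly to the second, whose features resemble its own, than to the third.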

OmniLearned also has more physics-inspired knowledge built into its architecture than its predecessor did. This allows the model to take into account existing knowledge, such as the masses of particular particles, as it performs intensive calculations. “There are some physics-inspired quantities that are very hard for neural networks to learn by themselves,” said Vinicius Mikuni, an author on the paper, previously of NERSC and now located at the Kobayashi-Maskawa Institute at Nagoya University. Baking that domain-specific knowledge in from the beginning makes for a more powerful and flexible model.
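As an illustration of what a physics-inspired quantity can look like, the sketch below computes two pairwise features that are standard in jet physics: the invariant mass of a particle pair and its angular separation ΔR. Which quantities OmniLearned actually encodes is not specified here, so the choice of features, the function names, and the input convention (transverse momentum, pseudorapidity, azimuthal angle, mass, in natural units with c = 1) are all assumptions.

```python
import math


def four_momentum(pt, eta, phi, mass):
    """Convert (pt, eta, phi, mass) to (E, px, py, pz) in natural units."""
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    energy = math.sqrt(px * px + py * py + pz * pz + mass * mass)
    return energy, px, py, pz


def invariant_mass(p1, p2):
    """Invariant mass of the system formed by two four-momenta."""
    energy, px, py, pz = (a + b for a, b in zip(p1, p2))
    mass_squared = energy * energy - px * px - py * py - pz * pz
    # Clamp tiny negative values caused by floating-point rounding.
    return math.sqrt(max(mass_squared, 0.0))


def delta_r(eta1, phi1, eta2, phi2):
    """Angular distance between two particles, with phi wrapped to [-pi, pi)."""
    dphi = (phi1 - phi2 + math.pi) % (2.0 * math.pi) - math.pi
    return math.hypot(eta1 - eta2, dphi)


# Two massless particles emitted back to back in the transverse plane
# (illustrative values, not real data).
a = four_momentum(pt=1.0, eta=0.0, phi=0.0, mass=0.0)
b = four_momentum(pt=1.0, eta=0.0, phi=math.pi, mass=0.0)
pair_mass = invariant_mass(a, b)
pair_separation = delta_r(0.0, 0.0, 0.0, math.pi)
```

Quantities like these involve square roots and differences of squares across several inputs, which is one reason a network may struggle to discover them unaided and benefits from receiving them directly.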

And the OmniLearned model was trained on data from more than one billion hadronic jets – a record number, and ten times the size of the training data set used for the first OmniLearn model. A training data set of this size was possible in part because particle physics produces an exceptional amount of data compared to other domains. The LHC, for example, measures and records the data for a particle collision every 25 nanoseconds, which works out to 40 million collisions per second. The team used data from the LHC and other sources, including the HERA particle accelerator. This massive and diverse training data set was crucial to making OmniLearned better than the state of the art, according to Wahid Bhimji, another author on the paper. “The current limiting factor in these models is limited training data rather than limited compute power or the computational expense of the models,” he said.

Sharing the wealth

Now that they’ve built and trained OmniLearned, the research team behind it has gone a step further in amplifying the model’s impact: they’ve made everything public, from the model itself to the datasets they used to train it to the documentation that will allow other researchers to use it for their own work. According to Mikuni, it was important to the team to present their massive training data set publicly, making it available and easy to use even for scientists not well-versed in the norms of storing particle-physics data. They’re storing the data at NERSC, a public resource for researchers.

Crucially, researchers in domains other than particle physics can supply their own discipline-specific data sets to the OmniLearned model, making the model better informed overall and more useful for work in their fields.

“People within those fields have the knowledge about their own datasets, and they can replace everything,” Mikuni said. “If they have different types of interactions between particles, they can use their own type of interactions without having to use ours, which are more related to particle physics. And that can be not only easily replaceable, but also the pre-training models that we offer with the pre-trained weights that we already trained are still transferable. They don’t have to do anything, they can still benefit from the pre-trained model.”

Foundations across disciplines

This modular format has proven successful: In addition to its utility in particle physics, the OmniLearned model is the first foundation model to be used successfully in other scientific disciplines, notably cosmology and molecular physics. This shows the promise of a robust foundation model to help illuminate connections between domains.

“There has never been a model that was first pre-trained to one particular field of science and then shown any benefit for a second field of science,” said Mikuni. “This basically means that using some prior knowledge from particle physics can be useful to understand physics in a different context, like in cosmology.”

How is it possible for a model meant for one type of physics to be of so much use for explaining phenomena in other domains that behave in completely different ways? Mikuni says the underlying mechanism isn’t well understood, but that part of it has to do with everything a model must learn to do even the most basic tasks. Even if the data a model uses is different in nature, the underpinnings are often the same.

“If you give an image to a neural network, not only does the neural network need to learn that it’s an image of a dog, but it needs to learn what is an image in the first place, which to us is very trivial, but the model has no knowledge whatsoever. There’s a huge amount of learning it needs to do before it can figure out what is in the image,” said Mikuni. “This representation is very general, so having a model that can use a lot of data, which we do have from particle collisions, just understanding the structure can already give you some good head start toward areas of science where simulations can be very expensive.”

Understanding how to build and train a model of the size and utility of OmniLearned shows promise for foundation models going forward, but for the moment, Mikuni and his team are enjoying watching the success of OmniLearned across the scientific landscape, he says.

“It’s very cool to see that it also works for other things that are not only particle physics, which makes it more exciting, and for the future, when we plan to have even bigger data sets that not only contain things from particle physics, but also from chemistry, from material science, from astrophysics and cosmology.”
