In an ever-changing technological landscape, quantum computing is emerging as a promising solution to address the limitations and overcome the scaling issues of classical computing. Quantum computing platforms will, in some instances, have the ability to solve problems more efficiently than traditional computers, potentially surpassing the capabilities of current exascale-class platforms, especially as Moore’s Law scaling diminishes.

Despite all the advances in the era of noisy intermediate-scale quantum (NISQ) devices, there remains a need for basic research to gain a better understanding of the capabilities and applicability of quantum information science and technology (QIST). Although contemporary quantum computing hardware platforms are constrained in accuracy and scale, the field of quantum computing is rapidly advancing in terms of hardware capabilities, software environments for algorithm development, and educational programs.

In scientific exploration, visualization allows researchers to explore the unknown and “see the unseeable,” effectively transferring abstract information into easily understandable images. Lawrence Berkeley National Laboratory (Berkeley Lab) researchers from the Scientific Data Division, the Applied Mathematics & Computational Research Division, and the National Energy Research Scientific Computing Center (NERSC), in collaboration with teams from San Francisco State University (SFSU) and Case Western Reserve University, recently released two papers introducing new methods of data storage and analysis to make quantum computing more practical and exploring how visualization helps in understanding quantum computing.

“This work represents significant strides in understanding and harnessing current quantum devices for data encoding, processing, and visualization. These contributions build on our previous efforts to highlight the ongoing exploration and potential of quantum technologies in shaping scientific data analysis and visualization,” explained Talita Perciano, a Research Scientist in the Scientific Data Division and the leader of this effort. “The realization of these projects underscores the vital role of teamwork, as each member brought their unique expertise and perspective. This collaboration is a testament to the fact that in the quantum realm, as in many aspects of life, progress is not just about individual achievements, but about the team’s collective effort and shared vision.”

With the recent call to build and educate a quantum workforce, many organizations, including the U.S. Department of Energy (DOE), are looking for ways to help advance research and develop new algorithms, systems, and software environments for QIST. To that end, Berkeley Lab’s ongoing collaboration with SFSU, a minority-serving institution, leverages the Lab’s efforts in QIST and expands SFSU’s existing curricula to include new QIST-focused coursework and training opportunities. Formerly a Berkeley Lab Senior Computer Scientist, SFSU Associate Professor Wes Bethel led the charge toward producing a new generation of graduating SFSU Computing Science Master’s students, many from underrepresented groups, with theses focusing on QIST topics.

Mercy Amankwah, a Ph.D. student at Case Western Reserve University, has been part of this collaboration since June 2021, dedicating 12 weeks of each summer break to the Sustainable Research Pathways program, a partnership between Berkeley Lab and the Sustainable Horizons Institute. Amankwah applied her expertise in linear algebra to the design and manipulation of quantum circuits, helping the team achieve the efficiency it hoped for in two new methods for encoding data for quantum computers, QCrank and QBArt. “The work we’re doing is truly captivating,” said Amankwah. “It’s a journey that constantly pushes us to contemplate the next big breakthroughs. I’m excitedly looking forward to making more impactful contributions to this field as I step into my post-Ph.D. career adventure.”

Balancing Classical and Quantum Capabilities

The team’s focus on encoding classical data for use by quantum algorithms is a stepping stone toward progress in leveraging QIST methods as part of graphics and visualization, both of which are historically computationally expensive. “Finding the right balance between the capabilities of QIST and classical computing is a big research challenge. On the one side, quantum systems can handle exponentially larger problems as we add more qubits. On the other side, classical systems and HPC platforms have decades of solid research and infrastructure, but they hit technological limits in scaling,” said Bethel. “One likely pathway is the idea of hybrid classical-quantum computing, blending classical CPUs with quantum processing units (QPUs). This approach combines the best of both worlds, offering exciting possibilities for specific science applications.”

The first paper, recently published in Scientific Reports, explores how to encode and store classical data in quantum systems to improve analytic capabilities, and it describes the two new methods and how they function. QCrank encodes sets of real numbers as continuous rotations of selected qubits, which allows more data to be represented using less space. QBArt, on the other hand, directly represents binary data as a series of zeros and ones mapped to pure zero and one qubit states, making it easier to do calculations on the data.
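The difference between the two encodings can be illustrated with a minimal single-value sketch. It is not the team's implementation: the function names are hypothetical, and the choice of `arccos` to turn a real value in [-1, 1] into a rotation angle is an assumption made here so that an ideal measurement returns the original value. The rotation-based functions mimic storing a real number as a qubit rotation, and the bit-based functions mimic mapping each binary digit to a pure zero or one qubit state.

```python
import math

def encode_angle(x):
    """Rotation-style encoding: map a real value in [-1, 1] to an angle.
    The arccos mapping is an assumption of this sketch."""
    if not -1.0 <= x <= 1.0:
        raise ValueError("value must lie in [-1, 1]")
    return math.acos(x)

def ry_on_zero(theta):
    """Amplitudes of a qubit rotated from |0> by Ry(theta)."""
    return (math.cos(theta / 2), math.sin(theta / 2))

def decode_angle(state):
    """Ideal, noise-free readout: <Z> = |a0|^2 - |a1|^2 = cos(theta)."""
    a0, a1 = state
    return a0 * a0 - a1 * a1

def encode_bits(value, n_bits):
    """Binary-style encoding: one qubit per bit, most significant bit first.
    A 0 stands for the pure |0> state and a 1 for the pure |1> state."""
    if not 0 <= value < 2 ** n_bits:
        raise ValueError("value does not fit in n_bits")
    return [(value >> i) & 1 for i in reversed(range(n_bits))]

def decode_bits(bits):
    """Read the bit string back as an integer."""
    out = 0
    for b in bits:
        out = (out << 1) | b
    return out

if __name__ == "__main__":
    theta = encode_angle(0.25)
    print(decode_angle(ry_on_zero(theta)))   # recovers the real value, up to rounding
    print(encode_bits(5, 4), decode_bits(encode_bits(5, 4)))
```

On real hardware the rotation-encoded value has to be estimated from many repeated measurements, whereas the binary encoding yields a bit string on each shot, which is one way to read the article's remark that QBArt makes calculations on the data easier.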

In the second paper, the team delved into the interaction between visualization and quantum computing, showing how visualization has contributed to quantum computing by enabling the representation of complex quantum states graphically and exploring the potential benefits and challenges of integrating quantum computing into the realm of visual data exploration and analysis.

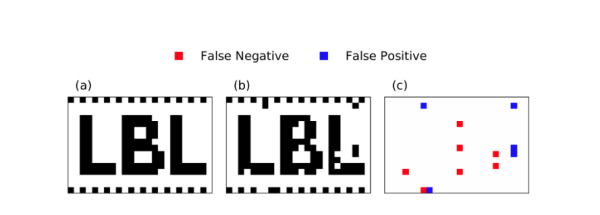

The team tested their methods on NISQ quantum hardware using several types of data-processing tasks, such as matching patterns in DNA, calculating the distance between sequences of integers, manipulating a sequence of complex numbers, and writing and retrieving images made of binary pixels. The team ran these tests on the Quantinuum H1-1 quantum processor as well as on other quantum processors available through IBMQ and IonQ. Quantum algorithms that process such large data samples as a single circuit on NISQ devices often perform very poorly or yield completely random output. The authors demonstrated that their new methods obtained remarkably accurate results on this hardware.

Dealing with Data Encoding and Crosstalk

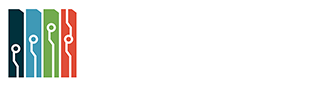

Figure 1. Recovery of a 384-pixel black-and-white image using QCrank executed on the Quantinuum H1-1 QPU. a) Ground-truth image; b) recovered image, with 97% of pixels correct; c) residual showing the locations of the 12 incorrect pixels.

“The focus was on balancing the current quantum hardware constraints. Some mathematically solid encoding methods use so many steps, or quantum gates, that the quantum system loses the initial information before even reaching the final gate. This leaves no opportunity to correctly compute the encoded data,” said Jan Balewski, Consultant at NERSC and first author of the Scientific Reports paper. “To address this, we came up with the scheme of breaking one long sequence into many parallel encoding streams.”

Unfortunately, this method led to a new problem, crosstalk among streams, which distorted the stored information. “It’s like trying to listen to multiple conversations in a crowded room; when they overlap, understanding each message becomes challenging. In data systems, crosstalk distorts information, making insights less accurate,” said Balewski. “We tackled the crosstalk in two ways: for QCrank, we introduced a calibration step; for QBArt, we simplified the language used in the messages. Reducing the number of used tokens is like switching from the Latin alphabet to Morse code – slower to send but less affected by distortions.”

This research introduces two significant advancements, making quantum data encoding and analysis more practical. First, parallel uniformly controlled rotation (pUCR) circuits drastically reduce the complexity of quantum circuits compared to previous methods. These circuits allow for multiple operations to occur simultaneously, making them well-suited for quantum processors, such as the H1-1 device from Quantinuum, with high connectivity and support for parallel gate execution. Second, the study introduces QCrank and QBArt, the two data encoding techniques that utilize pUCR circuits: QCrank encodes continuous real data as rotation angles and QBArt encodes integer data in binary form. The research also presents a series of experiments conducted using IonQ and IBMQ quantum processors, demonstrating successful quantum data encoding and analysis on a larger scale than previously achieved. These experiments also incorporate new error mitigation strategies to correct noisy hardware results, enhancing the reliability of the computations.
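As a rough illustration of what a uniformly controlled rotation does, the sketch below computes the ideal state produced when a set of address qubits in uniform superposition selects a different rotation angle for one data qubit. It is a simplified, noise-free model written for this article, not the authors' pUCR circuit: the function names are hypothetical, the `arccos` angle mapping is an assumption, and the parallel aspect (several data qubits sharing the same address qubits) is left out.

```python
import math

def ucry_state(angles):
    """Ideal state after a uniformly controlled Ry acts on
    (uniform superposition of addresses) x |0>.
    Returns a dict mapping (address, data_bit) -> amplitude."""
    n = len(angles)
    if n == 0 or n & (n - 1):
        raise ValueError("number of angles must be a power of two")
    norm = 1.0 / math.sqrt(n)
    state = {}
    for addr, theta in enumerate(angles):
        # Each address applies its own rotation angle to the data qubit.
        state[(addr, 0)] = norm * math.cos(theta / 2)
        state[(addr, 1)] = norm * math.sin(theta / 2)
    return state

def recover_values(state, n_addresses):
    """Ideal readout: per-address <Z> of the data qubit,
    computed as (p0 - p1) / (p0 + p1)."""
    values = []
    for addr in range(n_addresses):
        p0 = state[(addr, 0)] ** 2
        p1 = state[(addr, 1)] ** 2
        values.append((p0 - p1) / (p0 + p1))
    return values

if __name__ == "__main__":
    data = [0.5, -0.25, 1.0, 0.0]            # four values, so two address qubits
    angles = [math.acos(x) for x in data]    # assumed value-to-angle mapping
    state = ucry_state(angles)
    print(recover_values(state, len(data)))  # close to the original data
```

In this toy model, two address qubits plus one data qubit hold four real values; adding one more address qubit doubles the number of values, which is the kind of growth with qubit count that the article refers to.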

The experiments conducted with QCrank show promising results, successfully encoding and retrieving 384 black-and-white pixels on 12 qubits with a high level of accuracy in recovering the information (Figure 1). Notably, this is the largest image ever successfully encoded on a quantum device, making it a groundbreaking achievement. Storing that same image on a classical computer would require 384 bits, roughly 30 times as many bits as the quantum encoding uses qubits (384 / 12 = 32). Since the capacity of the quantum system grows exponentially with the number of qubits, just 35 qubits on an ideal quantum computer could, for example, hold the entire 150 gigabytes of DNA information found in the human genome.
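The image figures quoted in this article can be checked with a few lines of arithmetic. The sketch below uses only numbers stated in the text and in the Figure 1 caption; the variable names are illustrative.

```python
pixels = 384        # image size, from the article
qubits = 12         # qubits used by QCrank, from the article
wrong_pixels = 12   # incorrect pixels, from the Figure 1 caption

# Classical bits needed per qubit used in the quantum encoding.
bits_per_qubit = pixels / qubits

# Fraction of pixels recovered correctly.
accuracy = (pixels - wrong_pixels) / pixels

print(f"classical bits per qubit: {bits_per_qubit:.0f}")
print(f"recovered pixel accuracy: {accuracy:.1%}")
```

The ratio works out to 32 bits per qubit, and 372 correct pixels out of 384 is just under 97%, consistent with the rounded figures given in the text.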

Figure 2. Results obtained by the DNA sequence matching executed on Quantinuum H1-1 QPU. The algorithm correctly detects the differences between the 6 codons in positions 5 to 10, marked in red.

“Navigating the forefront of quantum computing, our team, energized by emerging talents, is exploring theoretical advances leveraging our data encoding methods to tackle a wide range of analysis tasks. These novel approaches hold the promise of unlocking analytical capabilities on a scale we haven’t seen before with NISQ devices,” said Perciano. “Leveraging both HPC and quantum hardware, we aim to expand the horizons of quantum computing research, envisioning how quantum can revolutionize problem-solving methods across various scientific domains. As quantum hardware evolves, all of us on the research team believe in its potential for practicality and usefulness as a powerful tool for large-scale scientific data analysis and visualization.”

This research was supported by the U.S. Department of Energy (DOE) Office of Advanced Scientific Computing Research (ASCR) Exploratory Research for Extreme-Scale Science, the Sustainable Horizons Institute, and Berkeley Lab’s Lab Directed Research and Development Program. It used computing resources at NERSC and the Oak Ridge Leadership Computing Facility.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
