New Computer Model Pinpoints Prime Materials for Carbon Capture

July 17, 2012

NERSC Contact: Linda Vu, lvu@lbl.gov, +1 510 495 2402

UC Berkeley Contact: Robert Sanders, rsanders@berkeley.edu

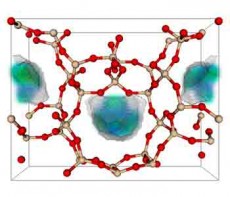

One of the 50 best zeolite structures for capturing carbon dioxide. Zeolite is a porous solid made of silicon dioxide, or quartz. In the model, the red balls are oxygen, the tan balls are silicon. The blue-green area is where carbon dioxide prefers to nestle when it adsorbs. (Berend Smit laboratory, UC Berkeley)

Approximately 45 percent of electricity used in the United States is produced by coal-burning power plants that spew carbon dioxide (CO2) into the atmosphere and contribute to global warming. While humans could potentially mitigate this effect by capturing CO2 from power plant flue gas before it reaches the atmosphere, experts note that doing this with current technologies would substantially drive up the cost of electricity. Dubbed “energy parasites,” these methods use about one-third of the total energy generated by the plant.

But less energy-intensive technologies are on the horizon, say researchers at the University of California, Berkeley. Using resources at the Department of Energy’s National Energy Research Scientific Computing Center (NERSC), the team developed a new computer model that suggests solid porous materials like zeolites and metal-organic frameworks (MOFs) could be more efficient for capturing CO2 from plant emissions.

“The current on-the-shelf process of carbon capture has problems, including environmental ones, if you do it on a large scale,” said Berend Smit, a professor at UC Berkeley and senior scientist in the Lawrence Berkeley National Laboratory’s (Berkeley Lab) Materials Sciences Division. “Our calculations show that we can reduce the parasitic energy costs of carbon capture by 30 percent with porous materials like MOFs and zeolites, which should encourage the industry and academics to look at them.”

Smit and his colleagues at UC Berkeley, Berkeley Lab, Rice University and the Electric Power Research Institute (EPRI) in Palo Alto, Calif., who recently had their paper published in Nature Materials, are already integrating their database of materials into power plant design software.

“Our database of carbon capture materials is going to be coupled to a model of a full plant design, so if we have a new material, we can immediately see whether this material makes sense for an actual design,” said Smit.

Guiding new materials research

Coal-fired power plant.

Because porous materials can effectively grab CO2 from flue gas emissions and release it at lower temperatures, this process is inherently more energy efficient than amine scrubbing, the method in use today. But there are potentially millions of porous materials that can capture CO2. These materials differ significantly in how tightly they grab CO2 and how easily they release it. Smit notes that ideal carbon-capture materials strike a balance between these two characteristics. Until the new computer model, however, researchers had no way to find the ideal materials, because it was physically and economically impossible to synthesize and test all of these structures.

“What is unique about this model is that, for the first time, we are able to guide the direction for materials research and say, ‘here are the properties we want, even if we don’t know what the ultimate material will look like,’” said Abhoyjit Bhown, a co-author of the study and a technical executive at EPRI, which conducts research and development for the electric power industry and the public.

Smit and his team worked with Bhown and EPRI scientists to establish the best criteria for a good carbon capture material, focusing on the energy costs of capture, release and compression, and then developed a computer model to calculate this energy consumption for any material. Smit then obtained a database of 4 million zeolite structures compiled by Rice University scientists and ran the structures through his model.
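The screening workflow described above can be sketched in a few lines of Python. This is only an illustration, not the team's model: the `Candidate` fields, the choice to simply add the three energy terms, and every number in the toy list are hypothetical placeholders, since the article does not give the actual formula or data.

```python
from dataclasses import dataclass
import heapq


@dataclass(frozen=True)
class Candidate:
    """A candidate material with placeholder energy costs (arbitrary units)."""
    name: str
    capture_energy: float
    release_energy: float
    compression_energy: float


def parasitic_energy(c: Candidate) -> float:
    # Assumption: the figure of merit is the plain sum of the three costs
    # named in the article (capture, release, compression).
    return c.capture_energy + c.release_energy + c.compression_energy


def screen(candidates, keep):
    """Return the `keep` candidates with the lowest total energy cost."""
    return heapq.nsmallest(keep, candidates, key=parasitic_energy)


# Toy values invented for illustration only.
toy_database = [
    Candidate("structure-A", 100.0, 700.0, 350.0),
    Candidate("structure-B", 50.0, 900.0, 400.0),
    Candidate("structure-C", 200.0, 500.0, 380.0),
]

best = screen(toy_database, keep=2)
for c in best:
    print(c.name, parasitic_energy(c))
```

Ranking on a single total cost is one simple way to express the balance between grabbing CO2 tightly and releasing it easily: a material that is cheap on one term but expensive on another does not automatically come out on top.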

The team also computed the energy efficiency of 10,000 MOF structures, which are composites of metals like iron with organic compounds that, together, form a porous structure. That structure has been touted as a way to store hydrogen for fuel or to separate gases during petroleum refining.

“The surprise was that we found many materials, some already known but others hypothetical, that could be synthesized and work more energy efficiently than amines,” said Smit. “The best part about this model is that it will work for structures other than zeolites and MOFs.”

By incorporating this model into a Carbon Capture Materials Database, the team is enabling any researcher in the world to upload the structure of a proposed material to this site and calculate whether it offers improved performance over the energy consumption figures of today’s best technology for removing carbon.

Bhown notes that theoretically, the best material for capturing carbon will probably have a parasitic energy cost of about 10 percent, so processes that use 20 percent or less are more attractive.

GPUs dramatically speed calculations

According to Smit, graphics processing units (GPUs) and the Berkeley Lab’s Computational Science and Engineering Petascale Initiative were key to the team’s success. By using GPUs, instead of standard computer central processing units (CPUs), the team reduced each structure’s calculation, which involves complex quantum chemistry, from 10 days to 2 seconds. Smit credits Jihan Kim, formerly a Petascale Initiative postdoctoral researcher, with helping the team use NERSC’s GPU testbed—DIRAC.

“Before we started working with Jihan, it took about 10 days to calculate the properties of one material on one CPU; with his help we were able to run the same calculations on one GPU in a few seconds,” said Smit. “If it takes 10 days to calculate the property of one material, then you have to carefully consider which systems you want to look at; but if you can do the exact same thing in 10 seconds, you can look at them all.”
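A quick back-of-the-envelope calculation shows why the speedup matters at the scale of this study. The sketch below uses only figures quoted in the article (10 days per structure on one CPU, 2 seconds on one GPU, 4 million zeolite structures) and assumes, for simplicity, that structures are processed one after another on a single device; the function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60

CPU_DAYS_PER_STRUCTURE = 10       # quoted: about 10 days on one CPU
GPU_SECONDS_PER_STRUCTURE = 2     # quoted: 2 seconds on one GPU
N_ZEOLITES = 4_000_000            # quoted: size of the zeolite database


def speedup() -> float:
    """Ratio of per-structure CPU time to per-structure GPU time."""
    return CPU_DAYS_PER_STRUCTURE * SECONDS_PER_DAY / GPU_SECONDS_PER_STRUCTURE


def cpu_years(n: int) -> float:
    """Serial time on one CPU, in years of 365 days."""
    return n * CPU_DAYS_PER_STRUCTURE / 365


def gpu_days(n: int) -> float:
    """Serial time on one GPU, in days."""
    return n * GPU_SECONDS_PER_STRUCTURE / SECONDS_PER_DAY


print(f"speedup per structure: {speedup():,.0f}x")
print(f"one CPU, all zeolites: {cpu_years(N_ZEOLITES):,.0f} years")
print(f"one GPU, all zeolites: {gpu_days(N_ZEOLITES):.1f} days")
```

Under these assumptions a single CPU would need on the order of 100,000 years to cover the whole database, while a single GPU would need about three months, which is what makes screening every structure practical.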

Berkeley Lab’s Petascale Initiative began in 2009 with initial stimulus funding from the American Recovery and Reinvestment Act. This funding allows NERSC to hire roughly nine post-doctoral researchers for approximately two-year terms to help design algorithms and programming models, and enhance code performance in key energy research areas such as energy technologies, fusion, and combustion. These algorithms and code improvements are targeted at current and future high-end architectures including multicore and GPU-accelerated designs.

After a brief conversation with Smit several years ago, NERSC’s Alice Koniges, who heads the Petascale Initiative, introduced him to Kim. Smit found the collaboration so useful that when the stimulus funding for Kim’s two-year term ended, he contributed funds from his DOE Energy Frontier Research Center (EFRC) to keep the collaboration going. Smit is the director of the EFRC for Gas Separation Relevant to Clean Energy Technologies at UC Berkeley.

“This collaboration made an enormous difference,” said Smit. “Before, we tried to rationalize the best structure for carbon capture, but we really didn’t succeed. It was only when we were able to calculate everything that we discovered the common characteristics that made a material successful, and we wouldn’t have done that without this partnership with NERSC.”

“The hope is that there is a system set up such that, when someone comes up with a promising material, we can rapidly test it and get it to a readiness level pretty quickly,” Bhown said. “We are all excited by this work and look forward to pursuing it further.”

In addition to calculating chemical characteristics, the Carbon Capture Materials Database provides geometrical parameters describing each material’s crystalline structure and pore space. For more information on this work, read Carbon Dioxide Catchers.

This story was adapted from a press release published by UC Berkeley.

In addition to Bhown, Kim and Smit, other coauthors of the study are graduate students Li-Chiang Lin and Joseph A. Swisher of UC Berkeley; Adam H. Berger of EPRI; Richard L. Martin, Chris H. Rycroft and Maciej Haranczyk of Berkeley Lab’s Computational Research Division; post-doctoral fellow Kuldeep Jariwala of Berkeley Lab’s Materials Sciences Division; and Michael W. Deem of the Departments of Bioengineering and Physics and Astronomy at Rice University.

This work has been supported by the Department of Energy through the National Energy Technology Laboratory, the Advanced Research Projects Agency-Energy (ARPA-E) and the Office of Science; through EPRI’s Office of Technology Innovation; and through the Advanced Scientific Computing Research (ASCR) Program Office.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
