Simulations Reveal An Unusual Death for Ancient Stars

Findings made possible with NERSC resources and Berkeley Lab Code

September 29, 2014

Contact: Linda Vu, +1 510 495 2402, lvu@lbl.gov

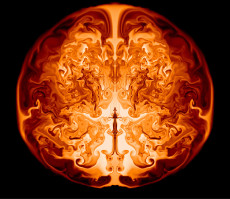

This image is a slice through the interior of a supermassive star of 55,500 solar masses along its axis of symmetry. It shows the inner helium core, where nuclear burning converts helium to oxygen and powers various fluid instabilities (the swirling lines). This "snapshot" from a CASTRO simulation captures a moment one day after the onset of the explosion, when the radius of the outer circle would be slightly larger than that of Earth's orbit around the Sun. Visualizations were done in VisIt. (Image Credit: Ken Chen, UCSC)

Certain primordial stars, those between 55,000 and 56,000 times the mass of our Sun (55,000 to 56,000 solar masses), may have died unusually. These objects were among the Universe’s first generation of stars, and in death they would have exploded as supernovae and burned completely, leaving no remnant black hole behind.

Astrophysicists at the University of California, Santa Cruz (UCSC) and the University of Minnesota came to this conclusion after running a number of supercomputer simulations at the Department of Energy’s (DOE’s) National Energy Research Scientific Computing Center (NERSC) and the Minnesota Supercomputing Institute at the University of Minnesota. They relied extensively on CASTRO, a compressible astrophysics code developed in the Computational Research Division (CRD) of DOE’s Lawrence Berkeley National Laboratory (Berkeley Lab). Their findings were recently published in The Astrophysical Journal (ApJ).

First-generation stars are especially interesting because they produced the first heavy elements, or chemical elements other than hydrogen and helium. In death, they sent their chemical creations into outer space, paving the way for subsequent generations of stars, solar systems and galaxies. With a greater understanding of how these first stars died, scientists hope to glean some insights about how the Universe, as we know it today, came to be.

“We found that there is a narrow window where supermassive stars could explode completely instead of becoming a supermassive black hole—no one has ever found this mechanism before,” says Ke-Jung Chen, a postdoctoral researcher at UCSC and lead author of the ApJ paper. “Without NERSC resources, it would have taken us a lot longer to reach this result. From a user perspective, the facility is run very efficiently and it is an extremely convenient place to do science.”

The Simulations: What’s Going On?

To model the life of a primordial supermassive star, Chen and his colleagues used a one-dimensional stellar evolution code called KEPLER. This code takes into account key processes such as nuclear burning and stellar convection, as well as processes especially relevant for massive stars: photodisintegration of elements, electron-positron pair production and special relativistic effects. The team also included general relativistic effects, which are important for stars above 1,000 solar masses.

They found that primordial stars between 55,000 and 56,000 solar masses live about 1.69 million years before general relativistic effects make them unstable and they begin to collapse. As the star collapses, it rapidly synthesizes heavy elements such as oxygen, neon, magnesium and silicon, starting from the helium in its core. This burning releases more energy than the star’s binding energy, halting the collapse and triggering a massive explosion: a supernova.

To model the death mechanisms of these stars, Chen and his colleagues used CASTRO, a multidimensional compressible astrophysics code developed at Berkeley Lab by scientists Ann Almgren and John Bell. These simulations show that once the collapse is reversed, Rayleigh-Taylor instabilities mix the heavy elements produced in the star’s final moments throughout the star itself. The researchers say that this mixing should create a distinct observational signature that could be detected by upcoming near-infrared missions such as the European Space Agency’s Euclid and NASA’s Wide-Field Infrared Survey Telescope.

Depending on the intensity of the supernova, some supermassive stars could, when they explode, enrich their entire host galaxy and even some nearby galaxies with elements ranging from carbon to silicon. In some cases, the supernova may even trigger a burst of star formation in its host galaxy, which would make that galaxy visually distinct from other young galaxies.

“My work involves studying the supernovae of very massive stars with new physical processes beyond hydrodynamics, so I’ve collaborated with Ann Almgren to adapt CASTRO for many different projects over the years,” says Chen. “Before I run my simulations, I typically think about the physics I need to solve a particular problem. I then work with Ann to develop some code and incorporate it into CASTRO. It is a very efficient system.”

To visualize his data, Chen used an open source tool called VisIt, which was architected by Hank Childs, formerly a staff scientist at Berkeley Lab. “Most of the time I did my own visualizations, but when there were things that I needed to modify or customize I would shoot Hank an email and that was very helpful.”

Chen did much of this work while he was a graduate student at the University of Minnesota, where he earned his Ph.D. in physics in 2013.

For more information:

http://astrobites.org/2014/03/21/a-new-way-to-die-what-happens-to-supermassive-stars/

http://iopscience.iop.org/0004-637X/790/2/162

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
