SHARP: A "Killer App" for Ptychography

August 10, 2016

This story was originally published by the Advanced Light Source (ALS).

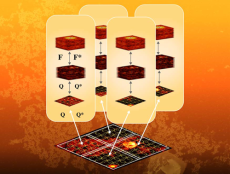

In ptychography, the x-ray beam scans across the sample, producing diffraction data (a frame) at each scan point. SHARP distributes the large dataset and workload across many parallel GPU (graphics processing unit) cores to produce very-high-resolution images in less than a second.

Ptychography, a revolutionary x-ray imaging technique that combines diffraction and microscopy, has now been coupled with applied mathematics and high-performance computing to quickly turn high-throughput "imaging by diffraction" datasets into the sharpest three-dimensional images ever produced. A collaboration between the Advanced Light Source (ALS), Uppsala University, and researchers at the Lawrence Berkeley National Laboratory's (Berkeley Lab's) Center for Advanced Mathematics for Energy Research Applications (CAMERA) has led to SHARP (Scalable Heterogeneous Adaptive Real-time Ptychography), an algorithmic framework and software package, used at the ALS, for the fast reconstruction of images from ptychographic data. With the image-processing tools provided by SHARP, every scanning microscope can now add a parallel detector, and every diffraction imaging instrument can add a scanning stage to take advantage of this groundbreaking technique.

Based in Berkeley Lab's Computational Research Division (CRD), CAMERA brings together applied mathematicians, computer scientists and experimental researchers to devise new models and algorithms for tomorrow’s scientific technologies.

SHARP Paves the Way for the Imaging Revolution

Among other innovations, SHARP uses a "relationship network" relating each frame to its neighbors to build a better starting guess and accelerate the reconstruction.

In a typical scanning microscope, a small beam is focused onto the sample via a lens, and the transmission is measured in a single-element detector. The image is built up by plotting the transmission as a function of the sample position as it is rastered across the beam. In such a microscope, the resolution of the image is given by the beam size. In ptychography, one replaces the single-element detector with a two-dimensional-array detector such as a CCD and measures the intensity distribution at many scattering angles.

Each recorded diffraction pattern contains information about features that are smaller than the beam size, enabling higher resolution. At short wavelengths, however, it is only possible to measure the intensity of the diffracted light. To reconstruct an image of the object, one also needs to retrieve the phase. The phase-retrieval problem is made tractable in ptychography by recording multiple diffraction patterns from the same region of the object, compensating for phaseless information with a redundant set of measurements.
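The measurement described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not SHARP code: the function `simulate_frames`, the Gaussian probe, the random phase object, and the scan step are all hypothetical choices made for illustration, and the far-field pattern is modeled as the squared modulus of a 2D Fourier transform.

```python
import numpy as np

def simulate_frames(obj, probe, positions):
    """Return one far-field intensity frame per scan position."""
    h, w = probe.shape
    frames = []
    for r, c in positions:
        # Exit wave: the probe multiplied by the patch of object it illuminates.
        exit_wave = probe * obj[r:r + h, c:c + w]
        # The detector records intensity only; the phase of the FFT is discarded.
        frames.append(np.abs(np.fft.fft2(exit_wave)) ** 2)
    return np.array(frames)

rng = np.random.default_rng(0)
# Hypothetical 32 x 32 pure-phase object and a 16 x 16 Gaussian probe.
obj = np.exp(1j * rng.uniform(-np.pi, np.pi, size=(32, 32)))
yy, xx = np.mgrid[-8:8, -8:8]
probe = np.exp(-(xx ** 2 + yy ** 2) / 32.0).astype(complex)

# A scan step (4 pixels) smaller than the probe (16 pixels) makes frames overlap.
positions = [(r, c) for r in range(0, 17, 4) for c in range(0, 17, 4)]
frames = simulate_frames(obj, probe, positions)

# Phase is lost: an object with a shifted global phase gives identical frames.
shifted_frames = simulate_frames(obj * np.exp(0.7j), probe, positions)
phase_is_invisible = np.allclose(frames, shifted_frames)

# Redundancy: count how many frames illuminate each object pixel.
coverage = np.zeros(obj.shape, dtype=int)
for r, c in positions:
    coverage[r:r + 16, c:c + 16] += 1
```

In this toy scan, most object pixels are seen by several frames, which is the redundancy that compensates for the missing phase.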

The reconstruction of ptychographic data is a nonlinear problem, and until recently, there were no theoretical guarantees that popular algorithms would produce faithful representations of the specimen. Moreover, earlier algorithms did not work well on large datasets. Common iterative methods operate by interchanging information between nearest-neighbor frames (diffraction patterns) at each step, so it might take many iterations for far-apart frames to communicate.
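A common iterative scheme of the kind described above alternates between two constraints: each frame must match its measured Fourier magnitude, and overlapping frames must agree on a single object. The following is a minimal sketch of that idea, assuming a known probe and noise-free data; the function names and the toy object are hypothetical, and this is not the algorithm used in SHARP.

```python
import numpy as np

def fourier_magnitude_projection(waves, magnitudes):
    """Keep each wave's Fourier phase; replace its modulus with the measured one."""
    spectra = np.fft.fft2(waves)  # transforms the last two axes of the stack
    spectra = magnitudes * np.exp(1j * np.angle(spectra))
    return np.fft.ifft2(spectra)

def overlap_projection(waves, probe, positions, shape):
    """Merge all frames into one object estimate, then split it back into frames."""
    h, w = probe.shape
    numer = np.zeros(shape, dtype=complex)
    denom = np.zeros(shape)
    for z, (r, c) in zip(waves, positions):
        numer[r:r + h, c:c + w] += np.conj(probe) * z
        denom[r:r + h, c:c + w] += np.abs(probe) ** 2
    obj = numer / denom  # probe-weighted average over overlapping frames
    new_waves = np.array([probe * obj[r:r + h, c:c + w] for r, c in positions])
    return obj, new_waves

# Hypothetical toy data: a weak phase object scanned with a Gaussian probe.
rng = np.random.default_rng(0)
truth = np.exp(1j * rng.uniform(-1, 1, size=(32, 32)))
yy, xx = np.mgrid[-8:8, -8:8]
probe = np.exp(-(xx ** 2 + yy ** 2) / 32.0).astype(complex)
positions = [(r, c) for r in range(0, 17, 4) for c in range(0, 17, 4)]
true_waves = np.array([probe * truth[r:r + 16, c:c + 16] for r, c in positions])
magnitudes = np.abs(np.fft.fft2(true_waves))  # what the detector would record

# Start from a flat object and alternate the two projections.
obj = np.ones(truth.shape, dtype=complex)
waves = np.array([probe * obj[r:r + 16, c:c + 16] for r, c in positions])
errors = []
for _ in range(50):
    # Mismatch between the current estimate and the measured magnitudes.
    errors.append(np.linalg.norm(np.abs(np.fft.fft2(waves)) - magnitudes))
    waves = fourier_magnitude_projection(waves, magnitudes)
    obj, waves = overlap_projection(waves, probe, positions, truth.shape)
```

Because the overlap step only mixes frames that share pixels, information travels across the scan one neighborhood at a time, which is why distant frames need many iterations to influence each other.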

SHARP developers approached the problem by connecting the pixels of each frame in the dataset to one another in a "relationship network" that can be represented mathematically by a high-dimensional matrix. Finding the leading eigenvector of the matrix (the one with the largest eigenvalue), which contains the most aligned phases, quickly yields an accurate initial guess for an alternating projection algorithm. The approach achieves accelerated convergence for large-scale phase-retrieval problems spanning multiple length scales, and it can also recover experimental fluctuations over a large range of time scales, enabling nanoscale resolution over macroscopic objects.
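The eigenvector idea can be illustrated on a deliberately simplified toy problem. In this sketch, each frame carries a single unknown phase (real data has one per pixel), the matrix `H` stores relative phases only between nearby frames, and the `reach` parameter is an assumed overlap range. All names are hypothetical, and the dense solver `numpy.linalg.eigh` stands in for the iterative eigensolver a large-scale code would likely need.

```python
import numpy as np

# Hypothetical toy problem: one unknown phase per frame.
n_frames = 50
rng = np.random.default_rng(1)
true_phase = np.exp(1j * rng.uniform(-np.pi, np.pi, n_frames))

# Relationship network: entry (i, j) holds the relative phase between frames
# i and j, but only for frames close enough to overlap (assumed reach of 3).
reach = 3
H = np.zeros((n_frames, n_frames), dtype=complex)
for i in range(n_frames):
    for j in range(max(0, i - reach), min(n_frames, i + reach + 1)):
        H[i, j] = true_phase[i] * np.conj(true_phase[j])

# H is Hermitian, so eigh applies. It returns eigenvalues in ascending order,
# so the last column is the eigenvector with the largest eigenvalue.
eigenvalues, eigenvectors = np.linalg.eigh(H)
leading = eigenvectors[:, -1]
estimate = leading / np.abs(leading)  # keep only the phase of each entry

# The estimate is defined up to one global phase; remove it before comparing.
offset = np.vdot(estimate, true_phase)
aligned = estimate * offset / np.abs(offset)
max_error = np.max(np.abs(aligned - true_phase))
```

Even though the matrix only links neighboring frames, its leading eigenvector fixes all phases at once, which suggests why such a guess can spare an iterative method many neighbor-to-neighbor exchanges. With noisy relative phases the recovery would be approximate rather than exact.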

As new instruments come online with ptycho-tomographic hyperspectral capabilities, polarization control, larger datasets, and new unexpected sources of bias, the work described here will have a lasting impact. The imaging revolution unleashed by high-throughput, high-resolution ptychography will have profound effects on our understanding of nature, whenever observing the whole picture is as important as recovering the local atomic arrangement of the components with chemical-state specificity.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
