Record-setting Seismic Simulations Run on NERSC’s Cori System

Researchers from San Diego Supercomputer Center Present Results at ISC17

June 21, 2017

By Kathy Kincade

Contact: cscomms@lbl.gov

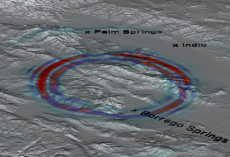

Example of hypothetical seismic wave propagation with mountain topography using the new EDGE software. Shown is the surface of the computational domain covering the San Jacinto fault zone between Anza and Borrego Springs in California. Colors denote the amplitude of the particle velocity, where warmer colors correspond to higher amplitudes. Image courtesy of Alex Breuer, SDSC.

Record-setting seismic simulations run earlier this year on the Cori supercomputer at Lawrence Berkeley National Laboratory’s National Energy Research Scientific Computing Center (NERSC) were the subject of two presentations at the ISC High Performance conference in Frankfurt, Germany this week.

One of the presentations details a new seismic software package, developed by researchers at the San Diego Supercomputer Center (SDSC) in conjunction with Intel, that enabled the fastest seismic simulation run to date: 10.4 petaflops. The largest simulation used 612,000 Intel Xeon Phi Knights Landing (KNL) processor cores on the Cori KNL system. The simulations, which mimic possible large-scale seismic activity in Southern California, were done using a new software system called EDGE (Extreme-Scale Discontinuous Galerkin Environment), a solver package for fused seismic simulations.
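For a rough sense of scale, the two figures quoted above can be combined into an average per-core rate. The short Python sketch below is only back-of-the-envelope arithmetic on the rounded numbers in this article; the function name `per_core_gflops` is illustrative and is not part of EDGE or any NERSC tool.

```python
# Back-of-the-envelope arithmetic using the rounded figures quoted in the article.
# per_core_gflops is a hypothetical helper name, not part of EDGE.

def per_core_gflops(total_flops: float, n_cores: int) -> float:
    """Average floating-point rate per core, in gigaflops."""
    return total_flops / n_cores / 1e9

total_flops = 10.4e15   # 10.4 petaflops, as reported
cores = 612_000         # KNL cores used in the largest run, as reported

print(f"{per_core_gflops(total_flops, cores):.1f} gigaflops per core")
# prints "17.0 gigaflops per core"
```

This is an average over all cores in the run; it says nothing about how the work was distributed or how close each core came to its peak rate.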

“In addition to using the entire Cori KNL supercomputer, our research showed a substantial gain in efficiency in using the new software,” said Alex Breuer, a postdoctoral researcher from SDSC’s High Performance Geocomputing Laboratory (HPGeoC) who presented the findings at ISC17. “Researchers will be able to run about two to almost five times the number of simulations using EDGE, saving time and reducing cost.”

This research was enabled by the NERSC Director's Reserve. The research team also helped NERSC test, debug and validate the Cori KNL system at scale soon after Cori was installed, noted Richard Gerber, head of NERSC’s high performance computing department.

A second HPGeoC paper presented at ISC17 covers a new study of the AWP-ODC software, which has been used by the Southern California Earthquake Center (SCEC) for years. The software was optimized to run at large scale for the first time on the latest generation of Intel data center processors, called Intel Xeon Phi x200.

These simulations, which also used the Cori KNL system, attained performance competitive with an equivalent simulation on the entire GPU-accelerated Titan supercomputer. Titan, located at Oak Ridge National Laboratory, has been the resource used for the largest AWP-ODC simulations in recent years. Additionally, the software obtained high performance on Stampede-KNL at the Texas Advanced Computing Center at The University of Texas at Austin.

Both projects are part of a collaboration announced in early 2016 under which Intel opened a parallel computing center (PCC) at SDSC to focus on seismic research, including the ongoing development of computer-based simulations that can be used to better inform and assist disaster recovery and relief efforts.

Such detailed computer simulations allow researchers to study earthquake mechanisms in a virtual laboratory. “These two studies open the door for the next generation of seismic simulations using the latest and most sophisticated software,” said Yifeng Cui, founder of the HPGeoC at SDSC, director of the Intel PCC at SDSC and PI on this research. “Going forward, we will use the new codes widely for some of the most challenging tasks at SCEC.”

Other members of the research team include Josh Tobin, a Ph.D. student in UCSD’s Department of Mathematics; Alexander Heinecke, a research scientist at Intel Labs; and Charles Yount, a principal engineer at Intel.

NERSC is a U.S. Department of Energy Office of Science User Facility.

This article used resources provided by the University of California, San Diego.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
