From Drawing Board to Community: A Decade in the Life of Nyx

Open-source code born at Berkeley Lab continues to advance cosmology research

March 22, 2021

Contact: cscomms@lbl.gov

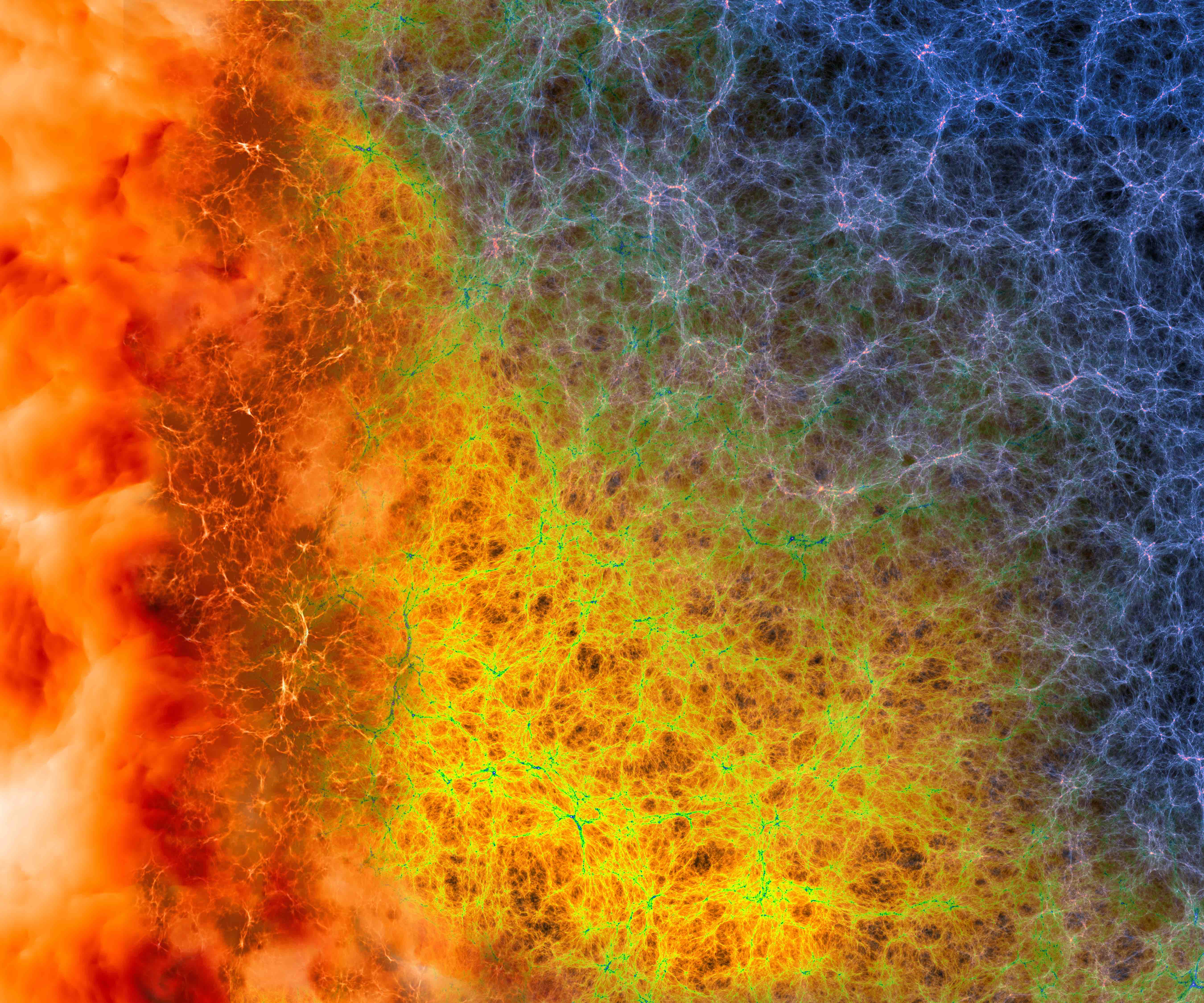

This Nyx simulation, part of the artwork that will be displayed on Berkeley Lab's newest supercomputer, reveals the cosmic web of dark matter and gas underpinning our visible universe. (Credit: Andrew Myers, Berkeley Lab)

Over the past decade, a coding project born out of Lawrence Berkeley National Laboratory’s Computing Sciences Area has helped advance the field of cosmology and ready it for the age of exascale computing.

Nyx – an adaptive mesh, massively parallel cosmological simulation code designed to help study the universe at its grandest levels – has become an essential tool for research into some of its smallest, most detailed features as well, allowing for critical breakthroughs in the understanding of dark matter, dark energy, and the intergalactic medium.

Nyx traces its roots back to 2010, when Peter Nugent, department head for Computational Science in Berkeley Lab’s Computational Research Division (CRD), approached CRD senior scientist Ann Almgren with the prospect of adapting the Castro code, an adaptive mesh astrophysics simulation tool built on the AMReX software framework, for cosmology. The pair hatched a plan to create an adaptive mesh code that would represent dark matter as particles that could interact with hydrogen gas and would also capture the expansion factor of the universe.

“It literally started with a conversation,” recalled Almgren, now the group lead of CRD’s Center for Computational Sciences and Engineering. “I still remember when Peter first raised the idea. The collaboration started with ‘hey, can you do this?’”

By 2011, funding from the Laboratory Directed Research and Development (LDRD) program enabled the team to start work on creating Nyx. Berkeley Lab’s LDRD program is designed to incubate emerging lab projects in their early stages, providing a bridge from concept to full-scale Department of Energy (DOE) funded projects.

Among the initial members of the Nyx team was Computational Cosmology Center research scientist Zarija Lukic, who took charge of creating the physics simulation elements of Nyx. Among other things, Lukic would help to author the 2013 paper that introduced Nyx to the scientific community and lead the code in the direction of intergalactic medium and Lyman alpha forest studies. Shortly after, Nyx transitioned from the LDRD program to the DOE’s Scientific Discovery through Advanced Computing (SciDAC) program, which links scientific application research efforts with high-performance computing (HPC) technology.

Nyx began to produce immediate results, and one of the code’s biggest advantages became clear: scalability. From its earliest days, Nyx was designed to take advantage of all types and scales of hardware on its host machine, and Nyx simulations have proved crucial in allowing cosmologists to produce models of the universe at unprecedented scales. Over time, this has allowed researchers to make the most of the supercomputers hosting it – from CPU-only systems to heterogeneous systems containing CPUs and GPUs.

“The biggest thing is our ability to scale,” said Nugent. “Because we can take advantage of the entire machine, CPU or GPU, we can occupy a very large memory footprint and do the largest of these types of simulations in terms of size of the universe at the highest resolutions.”

Exploring the Lyman-alpha Forest

One of the earliest large-scale applications for Nyx involved studies of the Lyman-alpha forest, which remains the main application area for the code. The forest is a series of absorption lines created as the light from distant quasars located far outside the Milky Way travels billions of light years toward us, passing through the gas residing between galaxies. By examining the forest’s light spectrum and the distortions caused as that light travels the vast distances to Earth, cosmologists can map the structure of the intergalactic gas to gain a better understanding of what the universe is made of, and what the universe looked like after the Big Bang. Perhaps most interestingly, the distortions in the light spectrum, as will be observed with the Dark Energy Spectroscopic Instrument (DESI) and high-resolution spectrographs like the one mounted on the Keck telescope, can provide insight into the nature of dark matter and neutrinos.

But simulations of the forest pose an immense computational challenge: they must recreate massive sectors of space – in some cases up to 500 million light years across – while also calculating the behavior of small density fluctuations as light moves through the intergalactic medium.

Enter Nyx. Adaptive mesh refinement (AMR) allows a computer to determine which parts of the universe need detailed calculations and where more general, coarse results are accurate enough. This reduces the number of calculations, the memory needed, and the compute time for large, complex simulations. By utilizing components of AMReX, the code is able to scale up to model the vast volumes probed by the Lyman-alpha forest.
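To make the savings concrete, here is a minimal Python sketch of the tagging idea behind AMR. It is an illustration only, not code from Nyx or AMReX: the function names, the density values, the threshold, and the single level of refinement are all assumptions chosen for the example. Coarse cells whose density exceeds a threshold are tagged, each tagged cell is subdivided into finer cells, and the resulting cell count is compared with that of a grid refined everywhere.

```python
# Illustrative sketch only: not taken from Nyx or AMReX.
# All names and numbers below are hypothetical.

def tag_cells(density, threshold):
    """Return the indices of coarse cells whose density exceeds the threshold."""
    return [i for i, rho in enumerate(density) if rho > threshold]


def cell_counts(density, threshold, ratio=2, dims=3):
    """Compare cell counts for a one-level adaptive mesh and a uniformly fine mesh.

    Each tagged coarse cell is replaced by ratio**dims fine cells;
    untagged cells stay coarse.
    """
    n_coarse = len(density)
    n_tagged = len(tag_cells(density, threshold))
    fine_per_coarse = ratio ** dims
    adaptive = (n_coarse - n_tagged) + n_tagged * fine_per_coarse
    uniform = n_coarse * fine_per_coarse
    return adaptive, uniform


# Hypothetical densities for eight coarse cells; two are dense enough to refine.
density = [0.8, 1.1, 0.9, 6.5, 9.2, 1.0, 0.7, 1.2]
adaptive, uniform = cell_counts(density, threshold=5.0)
print(f"adaptive mesh: {adaptive} cells, uniform fine mesh: {uniform} cells")
```

In this toy case only two of eight cells are refined, so the adaptive mesh needs far fewer cells than refining everywhere. Production AMR codes use more sophisticated tagging criteria and multiple nested levels, so this should be read as a picture of the principle rather than of the implementation.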

“In 2014 and 2015 we were running simulations that are still today’s state of the art,” Lukic said.

Another reason for Nyx’s popularity is that it is open source, which has been key to creating a larger community for the code outside of Berkeley Lab. Today research teams from all over are finding new applications for Nyx, employing the code for smaller-scale simulations and experiments. In some cases, Nyx is used as is, and in other cases, the source code is modified by these researchers to fit their own needs.

“People have used it to do simulations of single galaxies,” Nugent said. “People have used it to do simulations of much earlier in the universe and later in the universe.”

Ready for the Next Generation

As the scientific community prepares to move into the era of exascale computing, Nyx shows no signs of letting up. Ongoing development of the code is supported by the DOE’s Exascale Computing Project, and Nyx is slated to play a key supporting role in the highly anticipated DESI experiment, performing simulations to back up DESI’s observations of the role dark matter plays in supporting the expansion of the universe.

Even with the next-generation supercomputers that will be used for DESI, the Nyx code’s ability to make the most out of the hardware will be crucial for performing accurate simulations to verify results. Postdoctoral researcher Jean Sexton has spent much of the past year making sure Nyx will continue to be on the cutting edge and ready to tackle the next round of problems.

“If you do not have good efficiency, scalability, and physical accuracy you will not be able to produce simulations needed to get an accurate representation of the data,” said Lukic. “You are not going to be able to extract the scientific conclusions from future sky surveys.”

Nyx is also slated to, quite literally, feature front and center on Berkeley Lab’s newest supercomputer, Perlmutter, which will be located at the National Energy Research Scientific Computing Center (NERSC). When it is unveiled this year, Perlmutter will feature artwork generated by a Nyx simulation diagramming the filaments that connect large clusters of galaxies. The Nyx code will also likely be prominent inside Perlmutter and other next-generation supercomputers, including those at the exascale.

When all is said and done, Nyx will go down as a shining example of how Berkeley Lab is able to develop a project from its infancy, through the LDRD program, into DOE funding, and finally to release for the larger scientific community. Over the span of 10 years, the Nyx code evolved from a conversation between Lab staffers to a mainstay in the field of cosmology and a key component of the next generation of high-performance computing systems and research into how the universe functions. For Almgren, who was there from the beginning, Nyx underlines one of the Lab’s greatest strengths.

“I think that is one of the things the lab does well: it allows people to make collaborations that advance science much more efficiently,” she said.

NERSC is a U.S. Department of Energy Office of Science user facility.

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
