Berkeley Lab’s Networking Middleware GASNet Turns 20

Now, GASNet-EX is Gearing Up for the Exascale Era

December 5, 2022

by Linda Vu

Contact: CSComms@lbl.gov

In 2002, as massively parallel supercomputers were offering more computing power to programmers, a significant limit on their overall effectiveness was the time it took for processors to communicate with each other.

“Architectures were evolving quickly, and it was becoming clear that the dominant high-performance computing layer for communications, Message Passing Interface (MPI), was not well-suited at the time to take advantage of the Remote Memory Access (RMA) capabilities that were becoming available in network hardware,” said Dan Bonachea, a Computer Systems Engineer at Lawrence Berkeley National Laboratory (Berkeley Lab).

Recognizing that RMA would become an important feature in future HPC systems, Bonachea, then a UC Berkeley graduate student, dedicated his CS258 Spring 2002 semester project to developing a solution to this problem. Building on the Active Messages (AM) paradigm developed at UC Berkeley a decade earlier, he designed GASNet (short for Global-Address Space Networking), a network-independent and language-independent high-performance communication interface for implementing the runtime systems of global address space languages such as UPC and Titanium.

He published the first GASNet Specification technical report in October of that year. He continued to build and improve on it as a graduate student researcher in Berkeley Lab’s Future Technologies Group and later as an engineer in the Lab’s Computer Languages and Systems Software Group, working in collaboration with Lab engineers and scientists, including Paul Hargrove and Katherine Yelick.

Twenty years later, GASNet is thriving, with dozens of clients in academia, national laboratories, and industry. It was also recently upgraded to support exascale scientific applications through the Department of Energy’s Pagoda project. This new version, called GASNet-EX, supports scientific applications in drug discovery (NWChemEx), metagenomics research (ExaBiome), COVID-19 infection simulation (SIMCoV), and much more. Major companies also develop software that depends on GASNet-EX, such as Chapel, a parallel programming language from Hewlett Packard Enterprise (HPE).

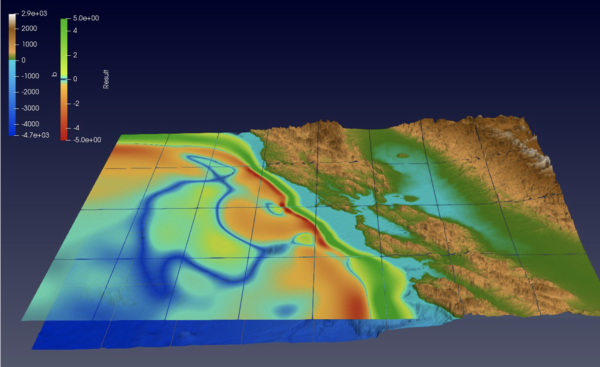

A tsunami simulation solving the shallow water Navier-Stokes equations, written using an Actor library that communicates via UPC++ and GASNet-EX.

(Credit: A. Pöppl, M. Bader and S. Baden)

“It can take 20 years or more to develop a high-quality parallel-language compiler, which is a huge effort, and machines change much faster than that. With GASNet, the idea was to isolate the compiler writers from low-level hardware details. We aimed to provide them with a virtual interface to the communication layer so they could target something stable that is hardware- and network-independent, and let GASNet help it work efficiently on various HPC systems,” said Bonachea. “Many application developers don’t even know that GASNet exists. They might know that they’re programming in Chapel, but they don’t know that this cool embedded library handles the communications services.”

“Looking back at what we’ve accomplished with GASNet, I’m proud that we’ve helped so many application developers reach their goals by allowing them to skip designing and implementing a network runtime,” added Hargrove. “Most people who write software take pride in knowing that it’s being used, and we’re no exception. I’m proud that some two dozen projects over the years chose to incorporate GASNet because they were reading through the literature or doing a web search and realized that what we’ve built is useful to them.”

GASNet Going Strong Two Decades Later

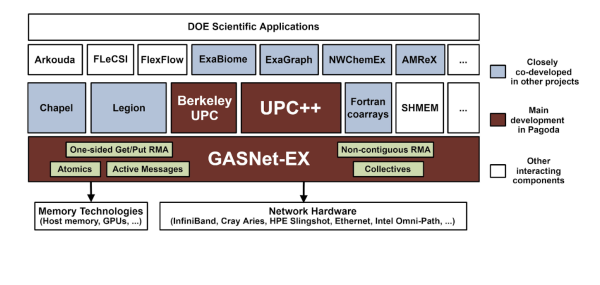

An illustration of the Pagoda software stack.

Both Bonachea and Hargrove credit the success and longevity of GASNet to several factors: a dedicated and agile development team with an unwavering, principled mission; the team’s proximity to, and easy access to resources at, the National Energy Research Scientific Computing Center (NERSC) and UC Berkeley; consistent support from Berkeley Lab Senior Faculty Scientist Katherine Yelick, who was recently appointed UC Berkeley Vice Chancellor for Research; and continued funding from the Department of Energy’s Office of Advanced Scientific Computing Research.

Twenty years ago, Bonachea wasn’t the only graduate student who aimed to build a communication library that could take advantage of emerging RMA and AM capabilities. In fact, many of GASNet’s competitors at the time were developed by graduate students for their Master’s or Ph.D. theses. What helped GASNet gain traction was Bonachea’s relationship with Berkeley Lab through Yelick, his adviser, and the proximity of UC Berkeley and the Lab to NERSC. With these connections, Bonachea had a specific set of users to design for and access to NERSC’s supercomputers, which gave him the resources to test his ideas.

“As Berkeley Lab Computer Systems Engineers, it’s our job to develop production-quality software for others. So unlike most graduate students, we think about longevity and maintainability. If an adjustment needs to be made three or five years after a project ends, we need to be able to go back into the software and understand the code we wrote at the beginning,” Hargrove said.

“I certainly didn’t think I’d still be working on GASNet 20 years later; as a graduate student, I was focused on the near term, about three to five years,” said Bonachea. “But I knew of at least three different software projects that relied on GASNet at the time, so I took a principled approach to follow good software engineering practices from the very beginning to ensure that I was making something usable and maintainable.”

According to Hargrove, another big part of GASNet’s success is that the right people used and advocated for it at the right time. “GASNet was built to meet the needs of locally based projects like the Berkeley UPC compiler suite, which Yelick led, so it was a foregone conclusion that they’d use it. But when people like Rice University’s John Mellor-Crummey incorporated GASNet into the Coarray Fortran programming model and HPE/Cray’s Bradford Chamberlain incorporated it into the Chapel programming language, it helped build up the reputation and momentum for our work,” he added.

“We began using GASNet because its features were an exact match for those that Chapel needs for inter-node communication: RMA and AM. Over time, the GASNet team at Berkeley proved to be dedicated to creating stable, useful, well-engineered software with terrific user support, so GASNet has only become more of a no-brainer for us to leverage and rely upon over the years,” said Chamberlain, a Distinguished Technologist at HPE/Cray who is the technical lead for the Chapel project.

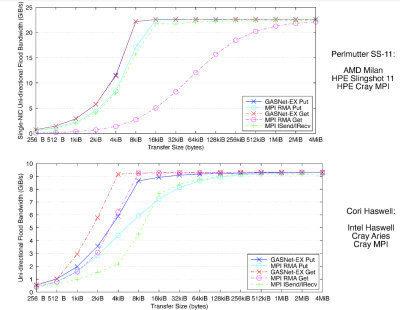

Recently published microbenchmark results demonstrate that the RMA performance of GASNet-EX is competitive with several vendor MPI implementations on modern production HPC systems whose networks are representative of emerging exascale systems. Berkeley Lab researchers found that GASNet-EX RMA bandwidth exceeded that of the equivalent MPI RMA operations by up to 2.7x for Puts and up to 3.1x for Gets at certain transfer sizes, and that GASNet-EX reached saturation bandwidth at transfer sizes up to 8x smaller.

(Image credit: Dan Bonachea and Paul Hargrove)

Chamberlain added that “while my team develops communication libraries that directly target the unique networks developed by HPE and Cray, we rely heavily on GASNet-EX for portably supporting Chapel on networks developed by other vendors, such as InfiniBand. Moreover, since GASNet-EX also supports HPE/Cray networks, it provides an alternate implementation of Chapel on our systems that we can compare to or that users can opt into when desired.”

“One of the things that I really like about GASNet is that it has stayed true to its original purpose,” said Damian Rouson, who leads Berkeley Lab’s Computer Languages and Systems Software (CLaSS) Group. He also leads the development of the Caffeine parallel runtime library, which uses GASNet-EX and aims to support the parallel features of modern Fortran compilers, with the LLVM Flang compiler as its initial target.

According to Rouson, the fact that Bonachea and Hargrove have been part of GASNet’s development team continuously over the years gives the project stability and a clear purpose. The relatively small development team has also allowed them to remain agile in adapting and maintaining the tool to meet their clients’ needs and the challenges presented by evolving HPC architectures.

“From the very start, we decided that GASNet focuses on one-sided RMA and AM; we’ve been very clear-eyed about what we do and what we do well; we drew a box around that and have chosen our battles,” said Bonachea. “We also made backward compatibility one of our main priorities. If compiler developers decide to invest in calling on GASNet, we’re not going to break that arbitrarily.”

According to Bonachea, the GASNet development team has added many new features over the years, but their philosophy doesn’t force client runtimes to use them. Although GASNet-EX offers features optimized for exascale computing applications, he notes that code written for GASNet 1.0 two decades ago would still do the right thing on an exascale system.

“The backward compatibility aspect of the design is what I really value most about GASNet: the fact that people don’t have to rewrite their codes with each new version,” said Rouson. “My experience serving on the standards committee for Fortran, which is now Medicare age (65 years old), has impressed upon me the importance of backward compatibility for software sustainability and longevity.”

“With GASNet-EX, our code has passed the 20-year test of time,” said Bonachea. “Over the years, our small team had to make ad-hoc decisions without industry-wide consensus about how things should work in a communications layer because we needed to be adaptable to the needs of our clients. Despite this, we’ve made relatively few design mistakes, and our clients still find our tool useful. That’s something that I’m extremely proud of.”

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
