Since 2017, EQSIM – one of several projects supported by the U.S. Department of Energy’s Exascale Computing Project (ECP) – has been breaking new ground in efforts to understand how seismic activity affects the structural integrity of buildings and infrastructure. While small-scale models and historical observations are helpful, they only scratch the surface of quantifying a geological event as powerful and far-reaching as a major earthquake.

EQSIM bridges this gap by using physics-based supercomputer simulations to predict the ramifications of an earthquake on buildings and infrastructure and create synthetic earthquake records that can provide much larger analytical datasets than historical, single-event records.

Accomplishing this, however, has presented a number of challenges, noted EQSIM principal investigator David McCallen, a senior scientist in Lawrence Berkeley National Laboratory’s (Berkeley Lab) Earth and Environmental Sciences Area and director of the Center for Civil Engineering Earthquake Research at the University of Nevada, Reno.

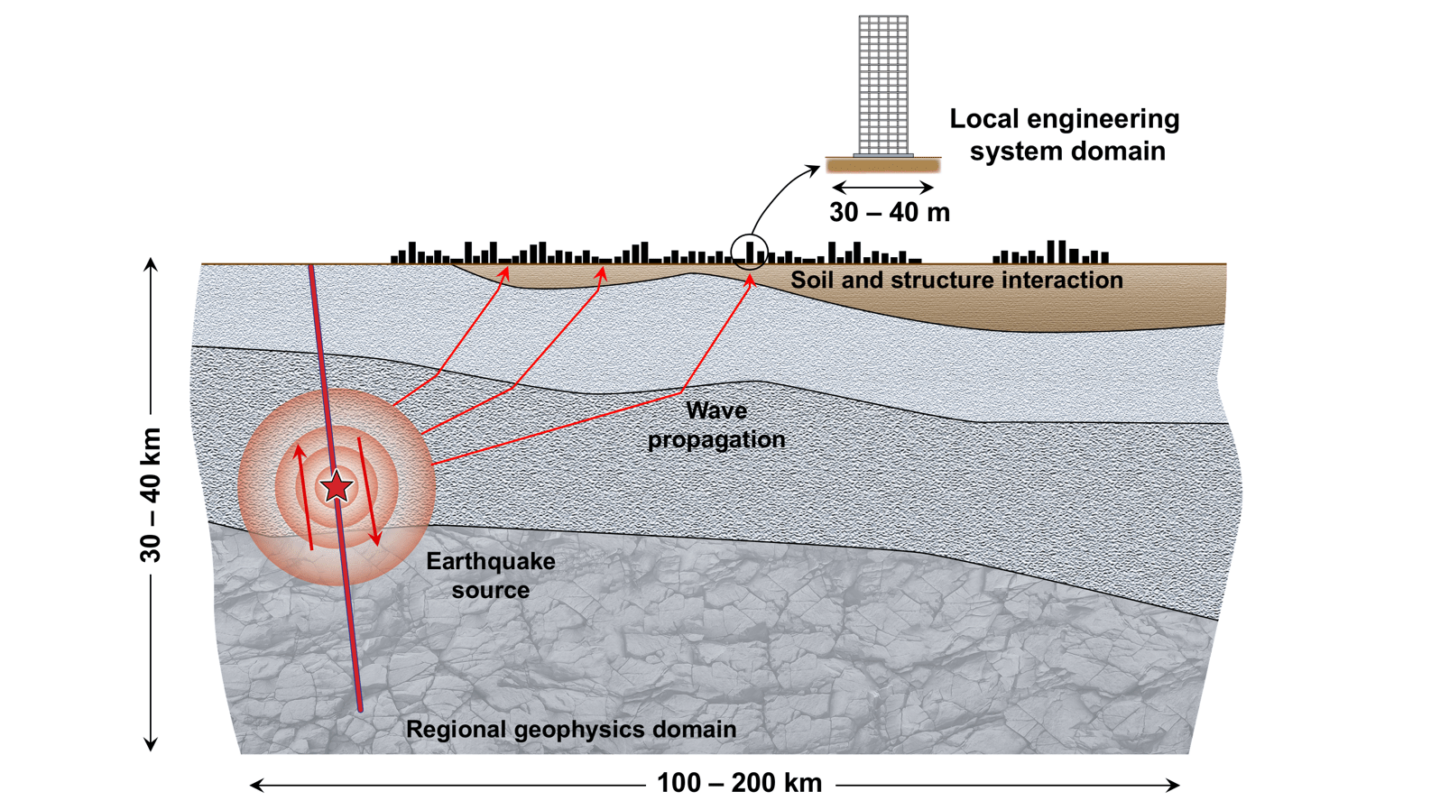

“The prediction of future earthquake motions that will occur at a specific site is a challenging problem because the processes associated with earthquakes and the response of structures is very complicated,” he said. “When the earthquake fault ruptures, it releases energy in a very complex way, and that energy manifests and propagates as seismic waves through the Earth. In addition, the Earth is very heterogeneous and the geology is very complicated. So when those waves arrive at the site or piece of infrastructure you are concerned with, they interact with that infrastructure in a very complicated way.”

Over the last decade-plus, researchers have been applying high-performance computing to model these processes to more accurately predict site-specific motions and better understand what forces a structure is subjected to during a seismic event.

“The challenge is that tremendous computer horsepower is required to do this,” McCallen said. “It’s hard to simulate ground motions at a frequency content that is relevant to engineered structures. It takes super big models that run very efficiently. So it’s been very challenging computationally, and for some time we didn’t have the computational horsepower to do that and extrapolate to that.”

Fortunately, the emergence of exascale computing has changed the equation.

“The excitement of ECP is that we now have these new computers that can do a billion billion calculations per second with a tremendous volume of memory, and for the first time we are on the threshold of being able to solve, with physics-based models, this very complex problem,” McCallen said. “So our whole goal with EQSIM was to advance the state of computational capabilities so we could model all the way from the fault rupture to the waves propagating through the earth to the waves interacting with the structure – with the idea that ultimately we want to reduce the uncertainty in earthquake ground motions and how a structure is going to respond to earthquakes.”

A Team Effort

Over the last five years, using the Cori and Perlmutter supercomputers at Berkeley Lab and the Summit system at Oak Ridge National Laboratory, the EQSIM team has focused primarily on modeling earthquake scenarios in the San Francisco Bay Area. These supercomputing resources helped the team create a detailed, regional-scale model that includes all of the necessary geophysics modeling features, such as 3D geology, earth surface topography, material attenuation, non-reflecting boundaries, and fault rupture.

“We’ve gone from simulating this model at 2-2.5 Hz at the start of this project to simulating more than 300 billion grid points at 10 Hz, which is a huge computational lift,” McCallen said.
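A rough back-of-the-envelope sketch shows why that jump is such a heavy lift. It is not the EQSIM team's own analysis. It assumes a uniform 3D grid over a fixed region, a grid spacing set by the shortest wavelength to be resolved, and an explicit time-stepping scheme whose time step shrinks with the grid spacing. The function names, the 500 m/s minimum wave speed, and the 8 points per wavelength are illustrative assumptions, and production codes may use non-uniform grids that change the exact numbers.

```python
def grid_spacing_m(v_min_m_s: float, f_max_hz: float, points_per_wavelength: int) -> float:
    """Grid spacing needed to resolve the shortest wavelength, v_min / f_max.

    All three inputs are illustrative assumptions, not values from the article.
    """
    shortest_wavelength_m = v_min_m_s / f_max_hz
    return shortest_wavelength_m / points_per_wavelength


def relative_cost(f_old_hz: float, f_new_hz: float) -> dict:
    """Growth factors when the maximum resolved frequency rises.

    Assumes a fixed region, a uniform 3D grid, and a time step proportional
    to the grid spacing (the usual stability limit for explicit schemes).
    """
    ratio = f_new_hz / f_old_hz
    return {
        "grid_points": ratio ** 3,  # spacing shrinks by `ratio` in each of 3 dimensions
        "time_steps": ratio,        # smaller spacing forces a proportionally smaller time step
        "total_work": ratio ** 4,   # grid points multiplied by time steps
    }


if __name__ == "__main__":
    # Frequencies from the article: roughly 2.5 Hz at the start, 10 Hz now.
    for name, factor in relative_cost(2.5, 10.0).items():
        print(f"{name}: x{factor:g}")

    # Hypothetical soil speed and resolution, for illustration only.
    print(f"spacing at 10 Hz: {grid_spacing_m(500.0, 10.0, 8)} m")
```

Under these assumptions, quadrupling the resolved frequency multiplies the number of grid points by 64 and the total work by 256, which is why each additional hertz is so expensive.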

Other notable achievements of this ECP project include:

- Making important advances to the SW4 geophysics code, including how it is coupled to local engineering models of the soil and structure system.

- Developing a schema for handling the huge datasets used in these models. “For a single earthquake we are running 272 TB of data, so you have to have a strategy for storing, visualizing, and exploiting that data,” McCallen said.

- Developing a visualization tool that allows very efficient browsing of this data.

“The development of the computational workflow and how everything fits together is one of our biggest achievements, starting with the initiation of the earthquake fault structure all the way through to the response of the engineered system,” McCallen said. “We are solving one high-level problem but also a whole suite of lower-level challenges to make this work. The ability to envision, implement, and optimize that workflow has been absolutely essential.”

None of this could have happened without the contributions of multiple partners across a spectrum of science, engineering, and mathematics, he emphasized. Earthquake engineers, seismologists, computer scientists, and applied mathematicians from Berkeley Lab and Livermore Lab formed the multidisciplinary, closely integrated team necessary to address the computational challenges.

“This is an inherently multidisciplinary problem,” McCallen said. “You are starting with the way a fault ruptures and the way waves propagate through the Earth, and that is the domain of a seismologist. Then those waves are arriving at a site where you have a structure that has found a non-soft soil, so it transforms into a geotechnical engineering and structural engineering problem.”

It doesn’t stop there, he added. “You absolutely need this melding of people who have the scientific and engineering domain knowledge, but they are enabled by the applied mathematicians who can develop really fast and efficient algorithms and the computer scientists who know how to program and optimally parallelize and handle all the I/O on these really big problems.”

Looking ahead, the EQSIM team is already involved in another DOE project, this one with the Office of Cybersecurity, Energy Security, and Emergency Response, which is concerned with the integrity of energy systems in the U.S. The goal is to build on everything the team has done through ECP to study earthquake effects on distributed energy systems. The team is also working to make its large earthquake datasets available as open access to both the research community and practicing engineers.

“That is common practice for historical measured earthquake records, and we want to do that with synthetic earthquake records that give you a lot more data because you have motions everywhere, not just locations where you had an instrument measuring an earthquake,” McCallen said.

Being involved with ECP has been a key boost to this work, he added, enabling EQSIM to push the envelope of computing performance.

“We have extended the ability of doing these direct, high-frequency simulations a tremendous amount,” he said. “We have a plot that shows the increase in performance and capability, and it has gone up orders of magnitude, which is really important because we need to run really big problems really, really fast. So that, coupled with the exascale hardware, has really made a difference. We’re doing things now that we only thought about doing a decade ago, like resolving high-frequency ground motions. It is really an exciting time for those of us who are working on simulating earthquakes.”

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
