Crystallization in Silico

Figuring out the structures of biological macromolecules

November 1, 2004

When Francis Crick and James Watson deciphered the structure of DNA in 1953, X-ray crystallography became famous; key to their success was crystallography of DNA done by Rosalind Franklin in the laboratory of Maurice Wilkins. X-ray crystallography has long since become the workhorse for structural studies of big biological molecules, including most of the many thousands of proteins whose structures have been solved in the last half century.

Crystallizing biological molecules is tricky, however. Some proteins and other macromolecules can't be crystallized at all; those that can must first be painstakingly purified. And a molecule's shape as part of a crystal, a highly artificial state, may significantly differ from its shape (or shapes) in the warm, aqueous environment of a living cell.

Enter single-particle electron cryomicroscopy (cryo-EM). Bob Glaeser, a member of Berkeley Lab's Life Sciences and Physical Biosciences Divisions and a professor of biochemistry and molecular biology at UC Berkeley, explains that instead of trying to build a crystal in which vast numbers of biological macromolecules assume regular spacing and orientation, there's a different approach.

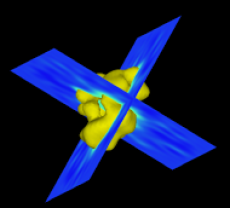

The isosurfaces of the TFIID structure from three different viewing angles. These surface renderings are generated by Vis5D. The leftmost view (a) is the top view; (b) is the front view obtained by rotating (a) by 90 degrees around the horizontal axis; and (c) is obtained by rotating (b) by another 90 degrees around the vertical axis.

With cryo-EM, Glaeser says, "you can put a microliter of a reasonably pure sample in aqueous solution onto a carbon support film, then plunge it into ethane at liquid-nitrogen temperature" -- which freezes the solution so rapidly that the water in it becomes vitreous, or glassy. The frozen sample is then put under the electron microscope to create two-dimensional images of thousands of randomly oriented "single particles" of the macromolecule.

"To see the structure in 3-D, you have to merge the data from all these individual images, whose orientations are not known," says Glaeser. "However, once these images have been aligned computationally, in a known orientation relative to one another, you have effectively constructed an artificial crystal in the computer" -- what Glaeser calls "crystallization in silico."

There are a couple of catches, he says. "One is that the electron beam can do a lot of damage, and imaging with a safe but weak exposure results in noisy images." The low signal-to-noise ratio complicates both the selection of 2-D images from the micrographs that are suitable for constructing the 3-D model and the elimination of spurious data during the calculation.

Another, more fundamental catch is the number of calculations required. "About 100,000 particles are enough to pick out an alpha helix," Glaeser says, "but if you want atomic resolution, good enough to resolve a polypeptide chain, you'll need a million particles or more." Glaeser says that to achieve 3-angstrom resolution by analyzing a million particles using the most straightforward methods available today would require on the order of 10^24 arithmetic operations. "Today's best machines would take 10^10 seconds to run the calculation," he says -- almost 20,000 years.

Determined to overcome these limitations of the cryo-EM technique, Glaeser approached members of the Lab's Computational Research Division (CRD) for help in improving mathematical approaches to constructing 3-D images from single particles. Initially this work was supported by Laboratory Directed Research and Development funding, but the project soon attracted the attention of the National Institutes of Health. In 2003 NIH launched an ongoing multidisciplinary, multi-institutional Program Project titled "Technology Development for High Resolution Electron Microscopy." Besides Glaeser, the project's seven directors include two of the early developers of single-particle software, Joachim Frank of the New York State Department of Health's Wadsworth Center in Albany, and Pawel Penczek of the University of Texas's Houston Medical Center.

One project, led by Ravi Malladi of CRD's Mathematics Department, seeks to automate the process of selecting single-particle images in noisy electron micrographs. Current methods require the human experimenter to choose interactively up to 10,000 particle images, the number needed to achieve 3-D reconstruction at a resolution of 20 angstroms. Since handpicking the million particles needed for atomic resolution would be almost impossible, quick and reliable automatic selection methods are essential for making progress.

Another major effort lies in developing new computational approaches and improving algorithms to determine the orientation of the 2-D images and continually refine the construction of the 3-D model from these. Esmond Ng, head of the Scientific Computing Group in CRD, enlisted group member Chao Yang to help meet the challenge. Early on, they set out to learn the basics of single-particle cryo-EM through collaboration with co-PI Penczek, Ken Downing of the Lab's Life Sciences Division, and Eva Nogales of the Life Sciences and Physical Biosciences Divisions, an associate professor of molecular and cell biology at UC Berkeley.

Says Ng, "Once we understood the problem and the issues the microscopists were facing in reconstructing 3-D models from a selection of randomly oriented 2-D projections, we sought new ways of formulating the problem mathematically. We realized there were computational tools for tackling some formulations already in existence. The tools aren't new, but structural biologists don't know about them or haven't used them. So both parties had something to offer the other; that's the beauty of this collaboration."

Yang characterizes one approach as "top down -- describing the general problem and looking for the best numerical solution, the best algorithm. The experimentalists come from the bottom up, coping with specifics. Now we are converging, working toward a robust algorithm that can handle peculiar problems, like noisy data in cryo-EM."

In an article published in the Journal of Structural Biology in November 2004, Yang, Ng, and Penczek describe an algorithm for simultaneously refining the 3-D model while tightening the parameters for the orientation of the individual 2-D projections used to reconstruct the model. The method is faster, more efficient, and more accurate than any of its predecessors.

Because they are projections, the electron microscope's many images of identical proteins -- quick-frozen from solution, on carbon film -- look different from one another, just as shadows of identical pasta tubes on a flat piece of paper would show two concentric circles seen along the axis, concentric ellipses seen at an angle, and a dark band seen from the side. Without knowing the exact orientations of these views, however, one might not be able to tell if the pasta tubes were cut straight across like macaroni or slanted like cannelloni.

In the case of proteins -- with shapes generally more complex than pasta! -- the first task is to select enough good-quality images. Once enough projections have been chosen, they can be grouped according to their apparent orientations on the carbon film and averaged, in order to improve the signal-to-noise ratio. From these groups, preliminary 3-D models are constructed.
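Why averaging helps can be seen in a minimal sketch. It assumes the images in a group are already aligned and that the noise is independent and Gaussian; the toy "particle," the noise level, and the function names are illustrative assumptions, not part of any cryo-EM package.

```python
import random

def average_images(images):
    """Pixel-wise mean of equally sized images, each stored as a flat list."""
    return [sum(pixels) / len(images) for pixels in zip(*images)]

def rms_error(image, reference):
    """Root-mean-square difference between an image and a noise-free reference."""
    return (sum((a - b) ** 2 for a, b in zip(image, reference)) / len(reference)) ** 0.5

rng = random.Random(0)

# Hypothetical noise-free "particle": a bright bar on a dark background.
clean = [1.0 if 96 <= i < 160 else 0.0 for i in range(256)]
sigma = 2.0  # noise standard deviation, deliberately larger than the signal

# One group of 400 noisy images that share the same orientation.
group = [[v + rng.gauss(0.0, sigma) for v in clean] for _ in range(400)]

err_single = rms_error(group[0], clean)
err_average = rms_error(average_images(group), clean)
print(f"single image RMS error:  {err_single:.3f}")
print(f"400-image average error: {err_average:.3f}")
```

Under these assumptions the noise in the average shrinks roughly as one over the square root of the number of images, so 400 images should cut the error by a factor of about 20.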

The first model is only an educated guess at the real final shape. It becomes increasingly accurate, however, as the orientations of the selected particles are continually corrected and the model is refined.

Usually the refinement of orientations and the subsequent refinement of the model itself are done as separate steps in "real space." In a leading method known as projection matching, developed by Penczek, the individual particles are reoriented in a way that corresponds best to the current model; then the model is refined to better fit the sum of the projections, and the process is repeated until no further improvement is possible. With this method, unfortunately, mistakes introduced into the model at any stage are likely to persist.
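The alternating loop can be sketched in a deliberately simplified 1-D analogue, in which each "particle" is a noisy, circularly shifted copy of a short signal and the unknown "orientation" is the shift. This is only an illustration of the match-then-refine pattern, not Penczek's implementation; the signal, noise level, and function names are all assumptions.

```python
import random

def roll(signal, k):
    """Circularly shift a list to the right by k positions."""
    k %= len(signal)
    return signal[-k:] + signal[:-k] if k else list(signal)

def best_shift(particle, model):
    """Return the shift that makes the particle correlate best with the model."""
    n = len(model)
    scores = [sum(p * m for p, m in zip(roll(particle, k), model)) for k in range(n)]
    return max(range(n), key=scores.__getitem__)

def average(signals):
    """Element-wise mean of equally long signals."""
    return [sum(column) / len(signals) for column in zip(*signals)]

def match_and_refine(particles, n_iter=5):
    """Alternate between re-aligning particles to the model and rebuilding the model."""
    model = list(particles[0])  # crude starting model: a single noisy particle
    shifts = [0] * len(particles)
    for _ in range(n_iter):
        # Step 1: reorient every particle so it agrees best with the current model.
        shifts = [best_shift(p, model) for p in particles]
        # Step 2: rebuild the model from the re-aligned particles.
        model = average([roll(p, k) for p, k in zip(particles, shifts)])
    return model, shifts

rng = random.Random(1)
truth = [4.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 2.0] + [0.0] * 8  # hypothetical signal
true_shifts = [rng.randrange(len(truth)) for _ in range(40)]
particles = [[v + rng.gauss(0.0, 0.3) for v in roll(truth, s)] for s in true_shifts]

model, shifts = match_and_refine(particles)
print([round(v, 2) for v in model])
```

Because every particle is aligned to whatever the current model happens to be, the recovered signal ends up in the frame of the starting particle; an error in the starting model would be inherited in the same way.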

Alternatively, the data can be mathematically transformed so that particle orientations are corrected all together, with the advantage that the final 3-D model need be calculated only once. But in these approaches the model calculation isn't based on corrections made in real space, and the mathematical transformations themselves introduce uncertainties and possible errors.

Yang, Ng, and Penczek's new method simultaneously optimizes particle orientation and model refinement in real space. Unlike projection matching, it does not correct orientations by comparison with the model.

Says Yang, "If you know you don't have an optimum 3-D structure, do you really want to try all that hard to match it? Instead, our approach uses derivative information to search for the minimum difference between particle orientations and various model configurations in a cubic grid. All you need is a search direction; you compute on the fly."

Technically, the problem is formulated as an optimization problem and solved using the limited-memory Broyden-Fletcher-Goldfarb-Shanno (L-BFGS) algorithm; the mathematically inclined will find details in the authors' Journal of Structural Biology paper, referred to below. It's a computational method that has been applied in many scientific fields; its application to single-particle cryo-EM, using the supercomputers at the Department of Energy's National Energy Research Scientific Computing Center (NERSC), is a significant step forward, offering a rapid, robust way -- one in which the very nature of the calculation tends to eliminate noise and bad data -- of achieving dependable structures at medium resolution.
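The idea of refining model and orientations together with a gradient-based quasi-Newton solver can be sketched on a toy problem. Here the "model" is a handful of Fourier coefficients of a 1-D periodic signal and each "orientation" is a phase shift; both sets of unknowns sit in one parameter vector passed to SciPy's `scipy.optimize.minimize` with `method="L-BFGS-B"`. The forward model, starting guess, and all names are assumptions made for illustration and are not the formulation used in the paper.

```python
import numpy as np
from scipy.optimize import minimize

M, J = 3, 32                          # harmonics in the toy model, samples per particle
x = 2 * np.pi * np.arange(J) / J      # sample positions on a ring
m = np.arange(1, M + 1)               # harmonic orders

def basis(phi):
    """cos and sin of m * (x - phi) for every particle; each has shape (n, J, M)."""
    arg = m * (x[None, :, None] - phi[:, None, None])
    return np.cos(arg), np.sin(arg)

def objective(params, data):
    """Half the summed squared misfit and its gradient in model and orientations."""
    a, b, phi = params[:M], params[M:2 * M], params[2 * M:]
    c, s = basis(phi)
    resid = c @ a + s @ b - data                       # shape (n, J)
    grad_a = np.einsum("ij,ijk->k", resid, c)
    grad_b = np.einsum("ij,ijk->k", resid, s)
    # Derivative of the prediction with respect to each particle's phase shift.
    grad_phi = np.sum(resid * ((s * m) @ a - (c * m) @ b), axis=1)
    return 0.5 * np.sum(resid ** 2), np.concatenate([grad_a, grad_b, grad_phi])

# Synthetic data: 20 noisy particles with unknown phase shifts (hypothetical values).
rng = np.random.default_rng(0)
n = 20
true_a, true_b = np.array([1.0, 0.5, 0.0]), np.array([0.0, 0.3, 0.2])
true_phi = rng.uniform(-0.5, 0.5, size=n)
c, s = basis(true_phi)
data = c @ true_a + s @ true_b + 0.1 * rng.standard_normal((n, J))

# Rough starting model and all orientations set to zero.
start = np.concatenate([true_a + 0.3, true_b - 0.3, np.zeros(n)])
f_start, _ = objective(start, data)

# jac=True tells SciPy that objective returns (value, gradient).
res = minimize(objective, start, args=(data,), jac=True, method="L-BFGS-B")
print(f"misfit before: {f_start:.2f}, after: {res.fun:.2f}")
```

Every step updates the model coefficients and the orientations at once, guided only by the gradient, rather than matching particles to a model that is known to be imperfect. In this toy the answer is defined only up to a common shift of all particles, so the misfit, not the raw parameter values, is the quantity to compare.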

In the quest to achieve atomic-resolution structures of large biological molecules in solution, many challenges remain. They include finding the best software designs for different computer architectures; finding ways to handle the data from million-particle collections with fewer operations and faster calculations; and -- from the standpoint of the biologist, one of the most desirable goals of all -- the ability to study the same protein in different conformational states. The last is a goal that crystallography renders unattainable by its very process, but one that "crystallization in silico" brings closer to realization.

Additional information

"Unified 3-D structure and projection orientation refinement using quasi-Newton algorithm," by Chao Yang, Esmond G. Ng, and Pawel A. Penczek, appears in the 6 November 2004 issue of The Journal of Structural Biology.

Paul Preuss, LBNL Public Affairs

About Computing Sciences at Berkeley Lab

High performance computing plays a critical role in scientific discovery. Researchers increasingly rely on advances in computer science, mathematics, computational science, data science, and large-scale computing and networking to increase our understanding of ourselves, our planet, and our universe. Berkeley Lab’s Computing Sciences Area researches, develops, and deploys new foundations, tools, and technologies to meet these needs and to advance research across a broad range of scientific disciplines.
